How Do You Build Generative AI Applications on AWS?

An Accenture survey indicates that 97% of C-suite executives expect generative AI to be transformative. And rightly so. Generative AI holds the potential to revolutionize business processes with increased automation and enhanced analytics capabilities.

However, companies often face integration and development challenges as generative AI applications require complex and resource-intensive infrastructure. AWS equips enterprises with the tools and services needed to develop in-house generative AI capabilities.

For instance, Accenture infused its applications with AI by leveraging Amazon CodeWhisperer and saw an impressive 30% jump in software development productivity. The company plans to empower 50,000 more developers to work with AWS generative AI capabilities.

Accenture is only one name among many other giants who have benefitted from AWS’ gen AI tools and solutions, but can your organization also capitalize on AWS offerings? This article provides hands-on guidance on building generative AI apps with AWS services. It will cover the foundations of gen AI application development, the steps to build these applications, and how various AWS services can be used to make the process more efficient.

Generative AI landscape in 2024

Generative AI has seen major evolutions – from initial hype to widespread strategic adoption driven by recent LLM innovations and multimodal models that enhance enterprise value. More autonomous agents and AI-based wearables are further transforming capabilities.

Revolutionary launches like Rabbit Inc’s Large Action Model (LAM), which is based on prompting actions rather than just text or visuals, are pushing boundaries. But LAM isn’t the only advancement.

Multimodal and custom FMs

Multi-modal generative AI models are gaining traction for their ability to ingest diverse inputs and generate corresponding outputs spanning text, images, code, and more. Unlike single-modality models, multi-modal ones can connect cross-domain datasets and tasks.

For example, GPT-4 Vision (GPT-4V) is an extension of GPT-4 that includes multimodal capabilities, allowing the model to process and understand text and images concurrently. However, multi-modal models are not restricted to image and text generation. The latest update from Open Interpreter provides multi-modal capabilities for code generation.

"Control your computer graphically using any multimodal language model. Open Interpreter's "The New Computer Update" is revolutionizing the way language models interact with computers. Open Interpreter makes it more accessible and versatile than ever!"

Source: Patrick Ranger (@AiBuzzNews), January 11, 2024

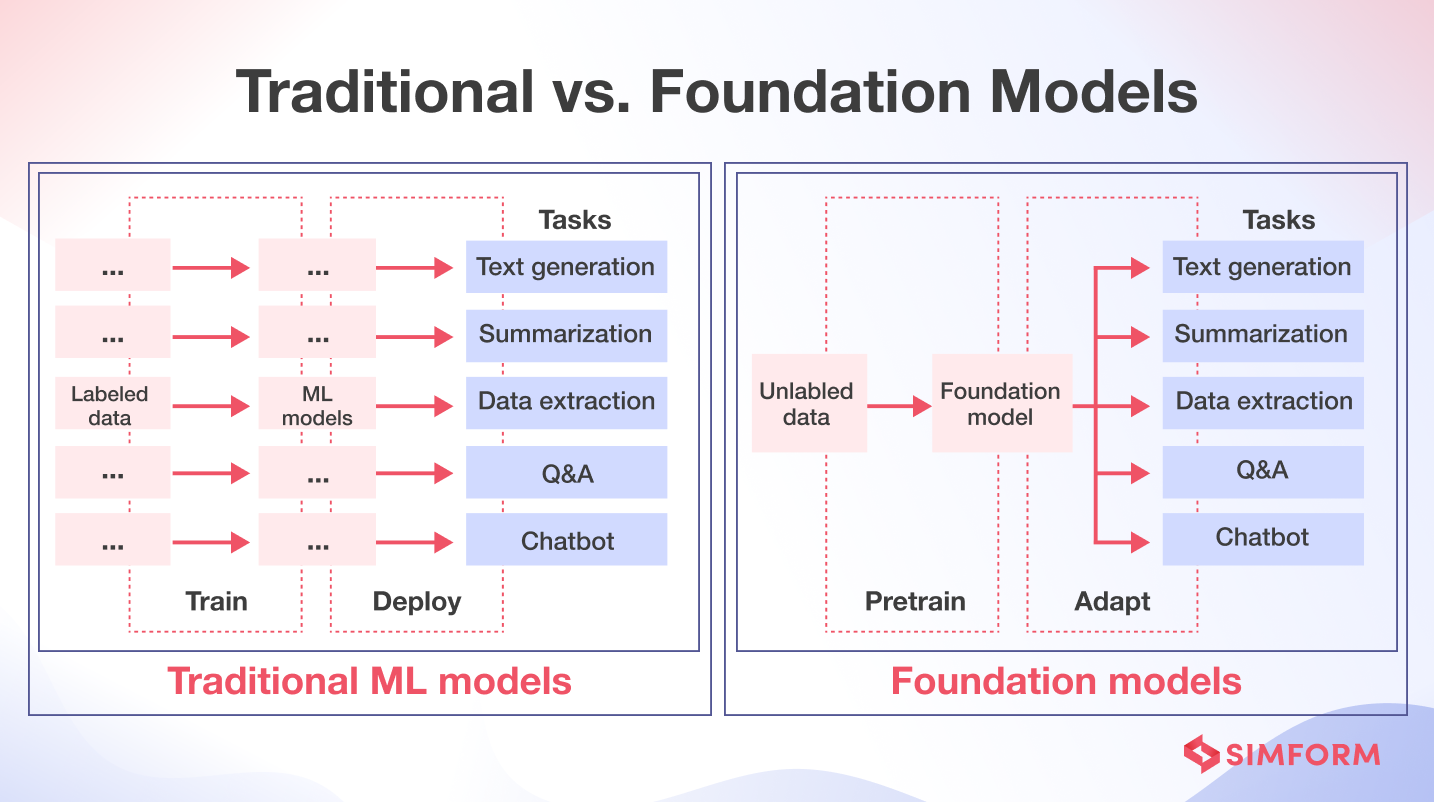

Another key trend in generative AI models is customized foundation models. These models leverage pretrained AI models to generate data based on specific labels or patterns.

Source: AWS Startups

You can leverage existing foundation models by fine-tuning them based on specific business needs and training on custom data to create custom FMs.

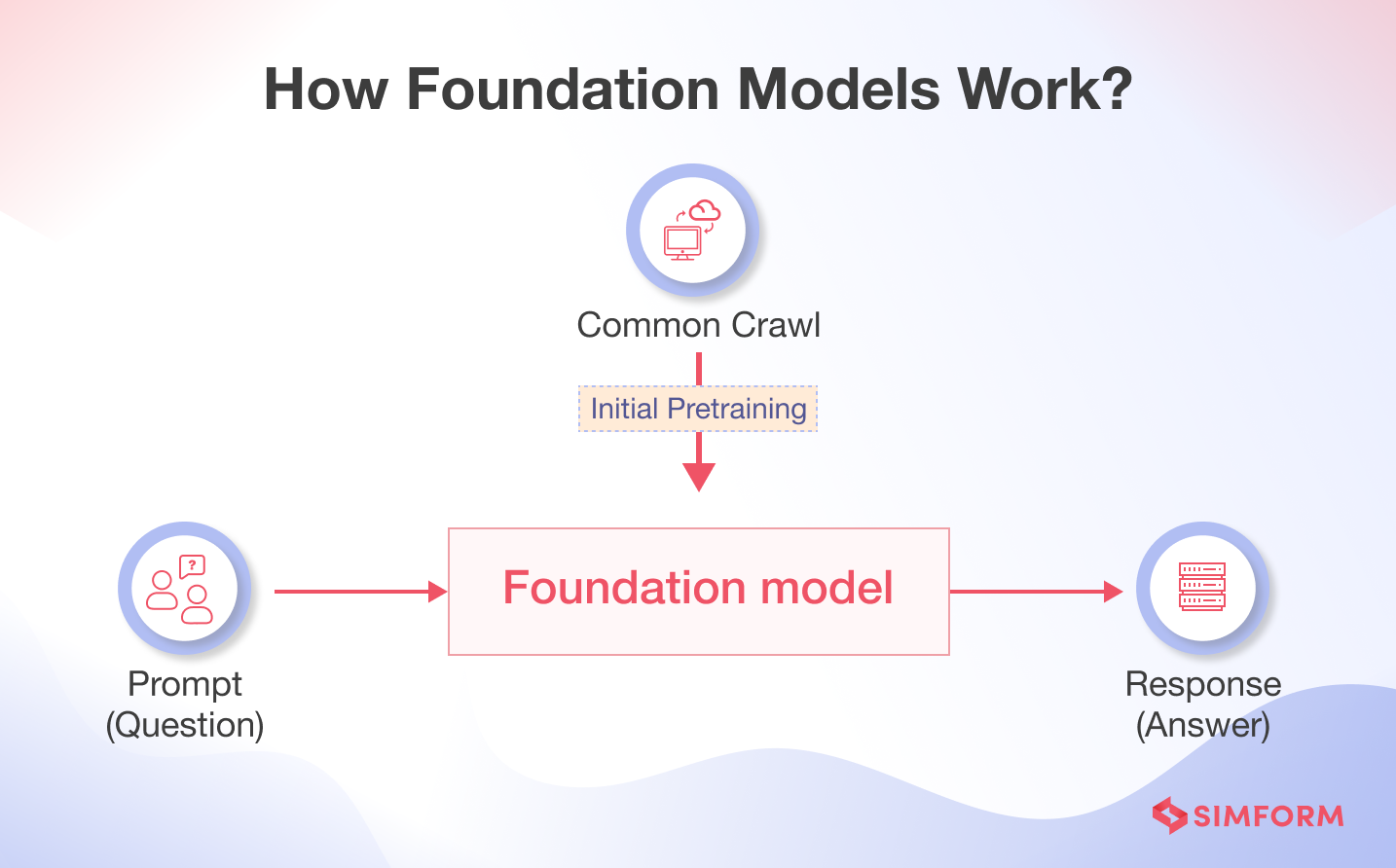

Here is how foundation models work

FMs learn patterns and relationships from very large volumes of mostly unlabeled data, typically through self-supervised learning, to predict the next item or result. Take the example of a text-generating FM. It interprets the prompt text and predicts the next word, phrase, or answer the user seeks.

Similarly, for text-to-image or image-to-image generation, FMs work with a seed vector derived from the “prompt,” which describes the task the model needs to perform. The quality and detail of the prompt are crucial for better output.
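To make the idea of next-word prediction concrete, here is a deliberately tiny sketch. It is a toy bigram counter, not a foundation model: real FMs use transformer networks with billions of parameters, but the goal of predicting what comes next from patterns in the training text is the same. The corpus and function names are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(corpus):
    """Count, for every word, which words follow it in the corpus."""
    followers = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current_word, next_word in zip(words, words[1:]):
            followers[current_word][next_word] += 1
    return followers


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Illustrative training text (an assumption, not real training data).
corpus = [
    "the model predicts the next word",
    "the model learns patterns from text",
    "a prompt guides the model",
]
model = train_bigram_model(corpus)
print(predict_next_word(model, "the"))  # "model" follows "the" most often
```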

Developing generative AI applications with custom FMs, multi-modal models, or even LAM will require extensive resources. These include cloud infrastructure to enable scalability and higher availability.

Role of cloud services in building GenAI apps

Generative AI models require large datasets to train and improve output. For example,

- Bidirectional Encoder Representations from Transformers (BERT), in its large configuration, has 340 million parameters and was trained on roughly 3.3 billion words.

- GPT-3 has a 96-layer neural network with 175 billion parameters and was trained on text drawn from a corpus of roughly 500 billion tokens, most of it from the Common Crawl dataset.

Training AI models with more than 170 billion parameters on hundreds of billions of tokens needs extensive resources: high computing power, CPUs, and GPUs. Cloud computing services can offer businesses the capabilities to build AI applications through scalable and robust infrastructure.
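A rough back-of-the-envelope calculation shows why models of this size need cloud-scale hardware. The sketch below assumes 16-bit weights (2 bytes per parameter) and a hypothetical accelerator with 80 GB of memory. It only counts the weights themselves; training also needs memory for gradients, optimizer state, and activations, so real requirements are several times higher.

```python
import math


def weight_memory_gb(num_parameters, bytes_per_parameter=2):
    """Memory needed just to hold the weights, in gigabytes (10^9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


def accelerators_needed(memory_gb, accelerator_memory_gb=80.0):
    """Minimum number of accelerators whose combined memory fits the weights."""
    return math.ceil(memory_gb / accelerator_memory_gb)


gpt3_parameters = 175_000_000_000
memory = weight_memory_gb(gpt3_parameters)
print(memory)                       # 350.0 GB for the weights alone
print(accelerators_needed(memory))  # 5 accelerators at 80 GB each
```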

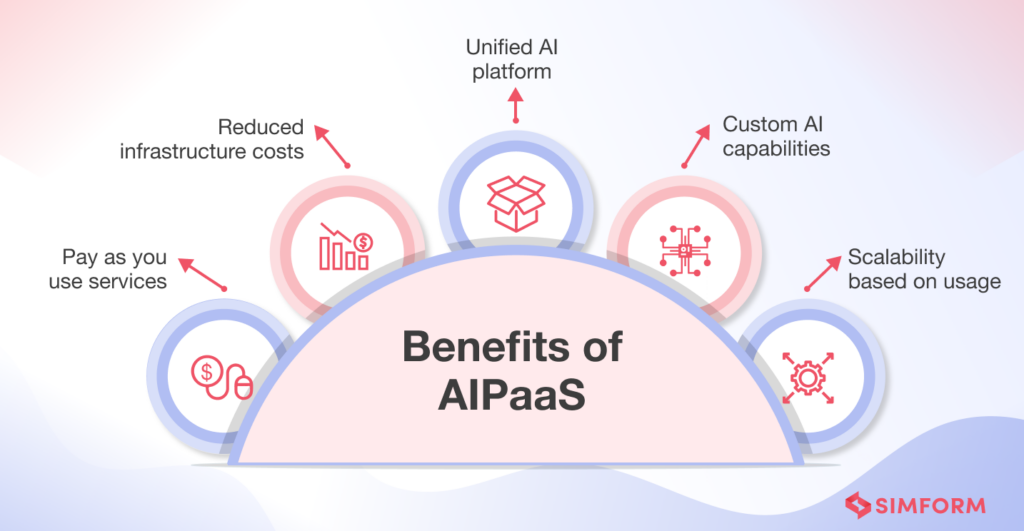

Many businesses struggle with cloud service management while building AI apps, especially when it comes to optimizing costs. This is where organizations can leverage Artificial Intelligence Platforms as a Service (AIPaaS). These platforms combine AI and PaaS to help businesses create, train, and deploy AI-based applications on cloud infrastructure with automated tooling.

Why AWS is the best cloud platform for generative AI development

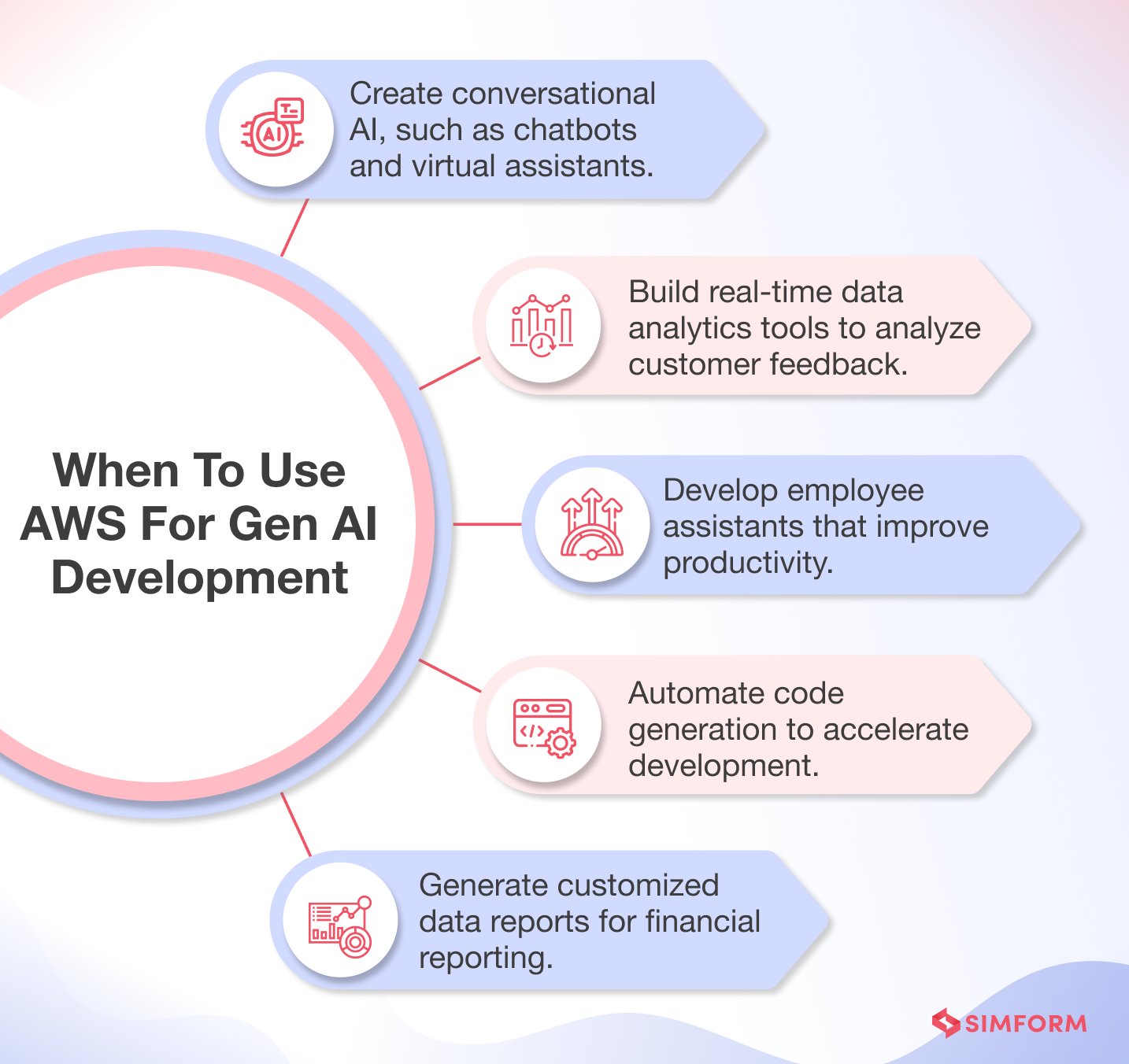

AWS offers a range of generative AI applications that you can customize by leveraging specific data, use cases, and targeted customers. For example, AWS offers generative AI capabilities to build applications for visual inspection, synthetic data generation, animations, and video and image generation.

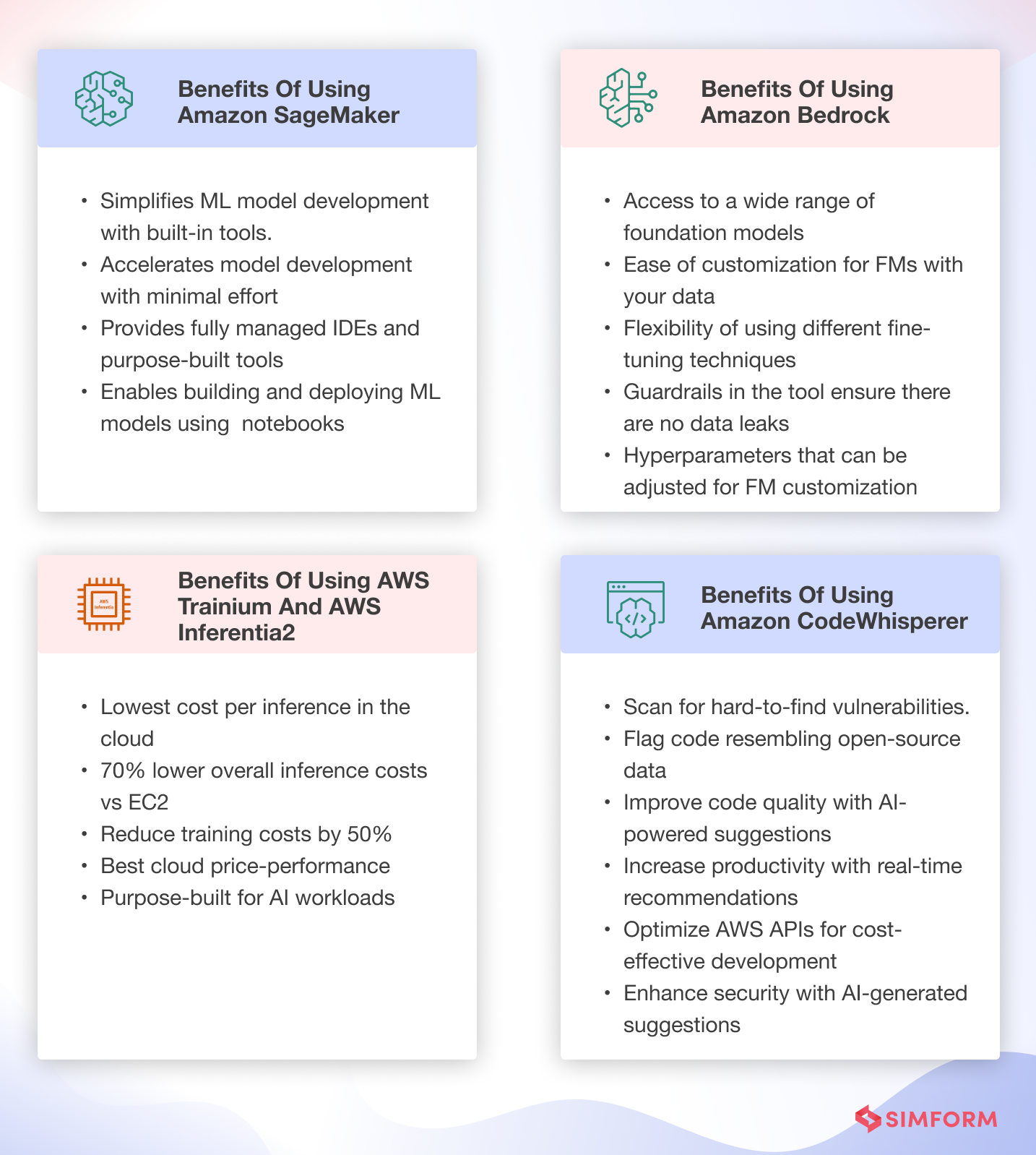

Key benefits of using AWS for GenAI application development are:

1. Easiest place to build

- Provides the broadest choice of secure, customizable foundation models (FMs)

- Easy model customization with Amazon Bedrock using just a few labeled examples

- Keeps data encrypted and confidential within your virtual private cloud

2. Most price-performant infrastructure

- Infrastructure specifically designed for high performance machine learning

- Achieve best price-performance for generative AI with Graviton

3. Enterprise-grade security and privacy

- Pre-built services like IAM, KMS, and WAF for enhanced data protection

4. Fast experimentation

- Services like SageMaker Notebook allow quick prototyping and testing without complex setup

5. Pre-trained models

- Services like Amazon Kendra and Polly provide high-quality pre-trained models

- Models can be customized for specific use cases

6. Integration with AWS services

- Easy integration with other AWS offerings like databases and analytics

7. Responsible AI

- Models built with responsible AI in mind at each stage

These benefits look attractive, but you need an effective strategy to develop a high-efficiency generative AI application. The next section will walk you through the steps and tips for building GenAI applications using various AWS services.

How to develop a generative AI app on AWS: A step-by-step process

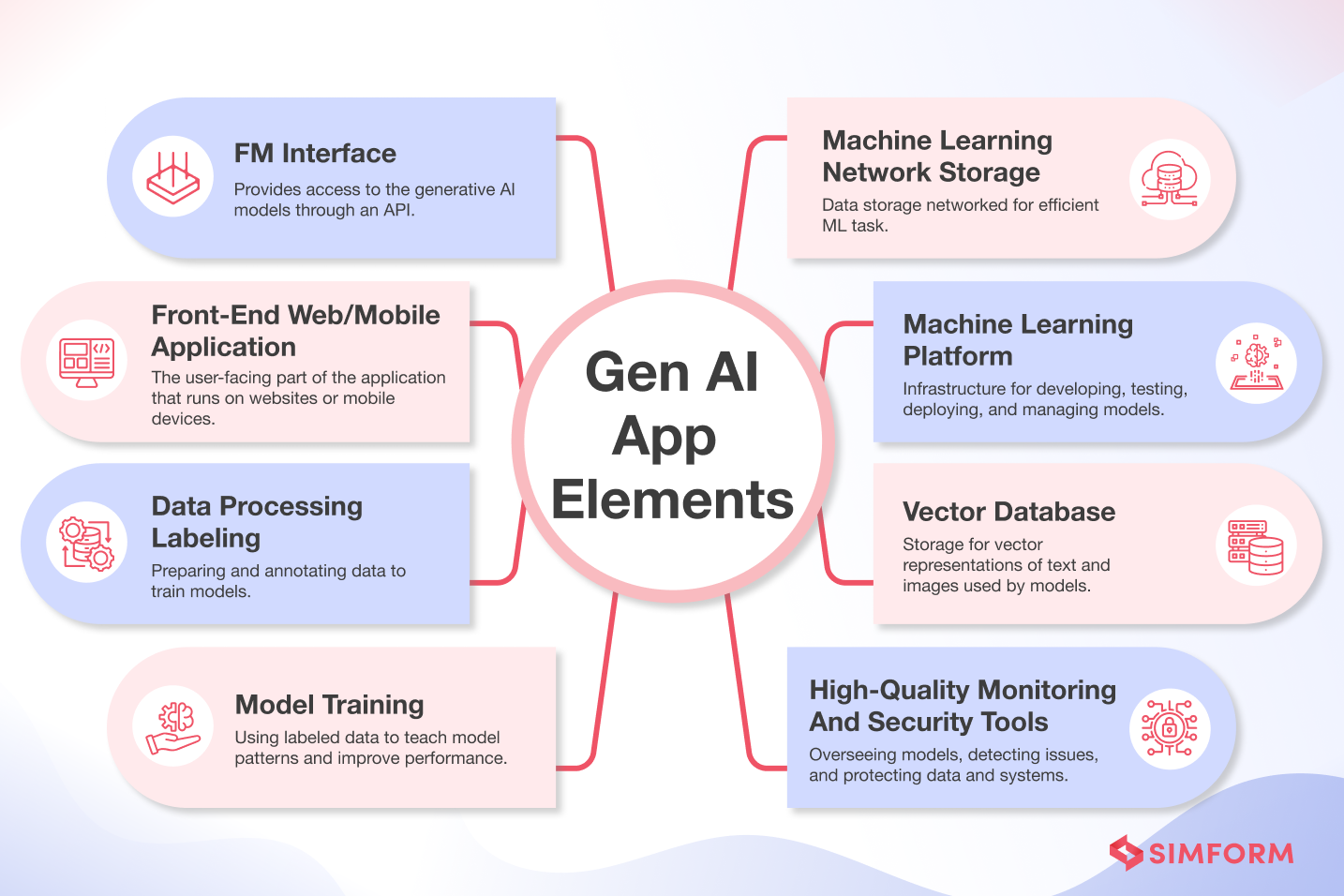

The generative AI development process requires:

- Foundation model interface – Provides access to the generative AI models through an API

- Front-end web/mobile application – The user-facing part of the application that runs on websites or mobile devices

- Data processing and labeling – Preparing and annotating data to train models

- Model training – Using labeled data to teach model patterns and improve performance

- High-quality monitoring and security tools – Overseeing models, detecting issues, and protecting data and systems

- Vector database – Storage for vector representations of text and images used by models

- Machine learning platform – Infrastructure for developing, testing, deploying, and managing models

- Machine learning network storage – Networked data storage that gives ML jobs efficient access to training data

- AI model training resource – Computing power like GPUs for model training

- Text-embeddings for vector representation – Representing text as vectors in numerical form for models

Now that you know the prerequisites, it is time to understand the steps required to develop a generative AI application with AWS.

Step 1: Choose your approach

There are two popular approaches to building generative AI applications. One is to create the model from scratch. Another is to use a pre-trained model and fine-tune it for your specific project.

Approach 1: Create an AI model from scratch

AWS offers Amazon SageMaker, a managed service that simplifies the deployment of generative AI models. It provides tools for building, training, and deploying machine learning models, including the ability to create generative AI models from scratch.

Approach 2: Fine-tune existing FMs

If you want to use an existing FM and fine-tune it for your generative AI application, Amazon Bedrock is a strong option. It allows you to fine-tune FMs for specific tasks without having to annotate large volumes of data.

Once you choose the approach you want to use for creating a generative AI application, the next step is to plan for data that will help train the algorithms.

Step 2: Prepare your data

Preparing the data for model training involves collection, cleansing, analysis, and processing.

Here is how you can prepare data for generative AI development on AWS:

#1. Data collection

Gather a large, relevant dataset representing the desired model output. For example, to train a model to detect pneumonia from lung images, collect diverse training images of both healthy lungs and lungs with pneumonia.

So, based on the use case, you need to identify the sources and types of data to collect. Tools like Amazon Macie can help discover, classify, and protect sensitive information in the training data.

#2. Data preprocessing

Clean and format the raw data to prepare it for modeling. This includes removing noise/errors, handling missing values, encoding categorical variables, and scaling/normalizing numeric variables.

You can use Amazon EMR with Apache Spark and Apache Hadoop to process and analyze large amounts of information.
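The preprocessing steps named above (handling missing values, scaling numeric variables, and encoding categorical variables) can be sketched in plain Python. This is a minimal illustration on in-memory lists with made-up column values; at real dataset sizes the same logic would run in a distributed framework such as Spark.

```python
def impute_mean(values):
    """Replace missing entries (None) with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    mean = sum(observed) / len(observed)
    return [mean if v is None else v for v in values]


def min_max_scale(values):
    """Rescale numeric values to the range [0, 1]."""
    low, high = min(values), max(values)
    if high == low:
        return [0.0 for _ in values]  # constant column: nothing to scale
    return [(v - low) / (high - low) for v in values]


def one_hot_encode(labels):
    """Turn each categorical label into a 0/1 vector, one slot per category."""
    categories = sorted(set(labels))
    return [[1 if label == c else 0 for c in categories] for label in labels]


# Hypothetical raw columns with a missing age.
ages = [20, None, 40, 60]
scan_types = ["xray", "ct", "xray"]

print(min_max_scale(impute_mean(ages)))  # [0.0, 0.5, 0.5, 1.0]
print(one_hot_encode(scan_types))        # [[0, 1], [1, 0], [0, 1]]
```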

#3. Exploratory data analysis

Understand the data distribution, identify outliers/anomalies, check for imbalances, and determine appropriate preprocessing steps. You can streamline data analytics by building an ML pipeline with Amazon SageMaker Data Wrangler.
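As a small illustration of two of these checks, the sketch below measures class balance and flags outliers with the common 1.5 × IQR rule. The labels and values are invented, and the IQR rule is just one reasonable choice of outlier test, not something the AWS tooling prescribes.

```python
import statistics
from collections import Counter


def class_distribution(labels):
    """Share of each class, useful for spotting imbalanced datasets."""
    counts = Counter(labels)
    return {label: count / len(labels) for label, count in counts.items()}


def iqr_outliers(values):
    """Values more than 1.5 interquartile ranges outside the quartiles."""
    q1, _median, q3 = statistics.quantiles(values, n=4)
    spread = q3 - q1
    lower, upper = q1 - 1.5 * spread, q3 + 1.5 * spread
    return [v for v in values if v < lower or v > upper]


# Hypothetical labels: 9 healthy scans for every pneumonia scan.
labels = ["healthy"] * 9 + ["pneumonia"]
print(class_distribution(labels))

# Hypothetical measurements with one obviously suspicious reading.
print(iqr_outliers([10, 11, 12, 13, 14, 15, 16, 100]))  # [100]
```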

#4. Feature engineering

Derive new input features or transform existing ones to make the data more meaningful/helpful in learning complex patterns. For image/text data, this involves extracting relevant features. You can use Amazon SageMaker Data Wrangler to simplify the feature engineering process, leveraging a centralized visual interface.

Amazon SageMaker Data Wrangler contains over 300 built-in data transformations that help you normalize, transform, and combine features without coding.

#5. Train-test split

Randomly divide the preprocessed data into training and test sets for building/evaluating the model.

- Model training: Feed the training set into the generative model and iteratively update its parameters until it learns the underlying patterns in the data. You can use AWS Step Functions Data Science SDK for Amazon SageMaker to automate the training of a machine learning model.

- Evaluation: Use offline or online evaluation to determine the performance and success metrics. Verify the correctness of holdout data annotations. Fine-tune the data or algorithm based on the evaluation results and apply data cleansing, preparation, and feature engineering.
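A minimal sketch of the split and of a holdout metric is shown below. The 80/20 ratio, the fixed seed, and the use of plain accuracy are assumptions chosen for illustration; the right ratio and metric depend on your dataset and task.

```python
import random


def train_test_split(records, test_fraction=0.2, seed=42):
    """Shuffle a copy of the records and split it into (train, test)."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    test_size = round(len(shuffled) * test_fraction)
    return shuffled[test_size:], shuffled[:test_size]


def accuracy(predictions, labels):
    """Fraction of holdout predictions that match the true labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


train, test = train_test_split(range(100))
print(len(train), len(test))                      # 80 20
print(accuracy([1, 0, 1, 1], [1, 0, 0, 1]))       # 0.75
```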

Step 3: Select your tools and services

There are five main AWS services to consider for generative AI app development:

Amazon Bedrock

Amazon Bedrock is a fully managed service that provides cutting-edge foundation models (FMs) for language and text tasks, including multilingual and text-to-image capabilities. It gives you access to foundation models from AI companies like AI21 Labs, Anthropic, Stability AI, and Amazon through an API, and lets you fine-tune them with your own data.

If you decide to choose Amazon Bedrock, here are some tips for choosing the right FM:

- Clearly define the specific task or problem you want your generative AI model to solve.

- Assess the capabilities of different foundation models and determine which ones suit your use case.

- Consider the trade-off between model size and performance based on your specific needs.

- Look for foundation models that have been evaluated and benchmarked for performance on relevant tasks.

- Evaluate the training data used for each model and consider whether it aligns with your specific domain or industry.

- Consider the level of support provided for each foundation model, including documentation, community support, and availability of updates.
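Once you have settled on a model, calling it through Bedrock could look like the sketch below. It uses the `converse` operation of the boto3 `bedrock-runtime` client. The model ID is deliberately left as a parameter because it depends on which FM you have enabled in your account, and the region default is an assumption. `boto3` is imported inside the function so the request-building helpers can be tried without AWS credentials.

```python
def build_converse_request(model_id, prompt, max_tokens=256, temperature=0.5):
    """Assemble keyword arguments for the Bedrock Converse API."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }


def extract_text(response):
    """Pull the generated text out of a Converse API response."""
    return response["output"]["message"]["content"][0]["text"]


def ask_model(model_id, prompt, region="us-east-1"):
    """Send one prompt to a Bedrock model (needs AWS credentials and model access)."""
    import boto3  # imported lazily; only required for the real call

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_request(model_id, prompt))
    return extract_text(response)
```

Keeping request construction separate from the network call makes it easy to compare candidate models: only the `model_id` argument changes between runs.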

AWS Inferentia and AWS Trainium

AWS Inferentia and AWS Trainium are custom machine learning accelerators designed by AWS to improve performance and reduce costs for machine learning workloads.

AWS Inferentia is optimized for cost-effective, high-performance inferencing. It allows you to deploy machine learning models that provide quick, accurate predictions at low cost. Inferentia is well-suited for production deployments of models.

AWS Trainium is purpose-built for efficient training of machine learning models. It is designed to speed up the time it takes to train models with billions of parameters, like large language models. Trainium enables faster innovation in AI by reducing training costs.

Amazon CodeWhisperer

Amazon CodeWhisperer, an AI coding companion, is trained on billions of lines of Amazon and open-source code. It helps developers write secure code faster by generating suggestions, including whole lines or functions, within their IDE. CodeWhisperer uses natural language comments and surrounding code for real-time, relevant suggestions.

Amazon SageMaker

Amazon SageMaker is a good fit when you want to maintain complete control over infrastructure and the deployment of foundation models. SageMaker JumpStart is the machine learning hub of Amazon SageMaker that helps customers discover built-in content for developing their next machine learning models. The SageMaker JumpStart content library includes hundreds of built-in algorithms, pretrained models, and solution templates.

| Use Case | Recommended Service | Key Reasons |
| --- | --- | --- |
| Content generation from scratch with full control and customization | Amazon SageMaker + AWS Trainium | Build and iterate custom models using notebooks; BYO hardware so can optimize cost; Scale training using AWS Trainium |
| Customized text generation using existing foundation models | Amazon Bedrock | Fine-tune foundation models on your data; Easy integration via API; Lower cost than training custom text models |
| Generate 100k+ images/day for consumer app | SageMaker + AWS Trainium | Train custom image generation models at scale; Leverage Trainium’s hardware acceleration for faster training |
| Deploy existing text generation model to production | Amazon Bedrock + AWS Inferentia | Low cost inference at scale; Inferentia optimization boosts throughput over 200x for some models |

Step 4: Train or fine-tune your model

The next step is to either train your model or fine-tune it. Data quality is crucial in both scenarios, so you must ensure comprehensive data collection and analysis. If you are starting from a foundation model and adapting it for your business, there are three customization methods you can use.

-

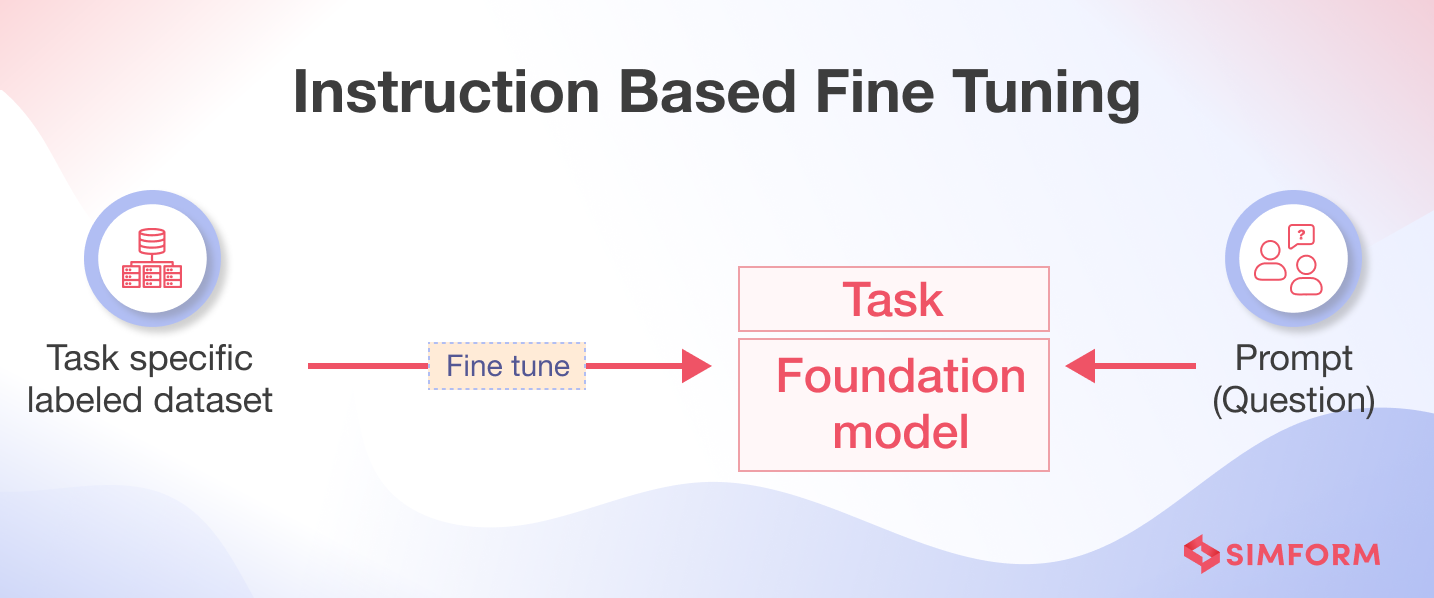

Instruction-based fine-tuning

Customizing the FM with instruction-based fine-tuning involves training the AI model to complete specific tasks based on task-specific data labels. This customization approach benefits startups looking to create custom FMs with limited datasets.
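Instruction-tuning datasets are usually small files of prompt and expected-answer pairs. The sketch below writes such pairs as JSON Lines. The `prompt` and `completion` field names are an assumption based on a common convention; check the format that your chosen model and customization service expect before uploading anything.

```python
import json


def to_jsonl(examples):
    """Serialize (instruction, answer) pairs, one JSON object per line."""
    lines = [
        json.dumps({"prompt": instruction, "completion": answer})
        for instruction, answer in examples
    ]
    return "\n".join(lines)


# Hypothetical task-specific examples.
examples = [
    ("Summarize: The flight was delayed by two hours.", "Flight delayed two hours."),
    ("Classify the sentiment: I loved the hotel.", "positive"),
]
print(to_jsonl(examples))
```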

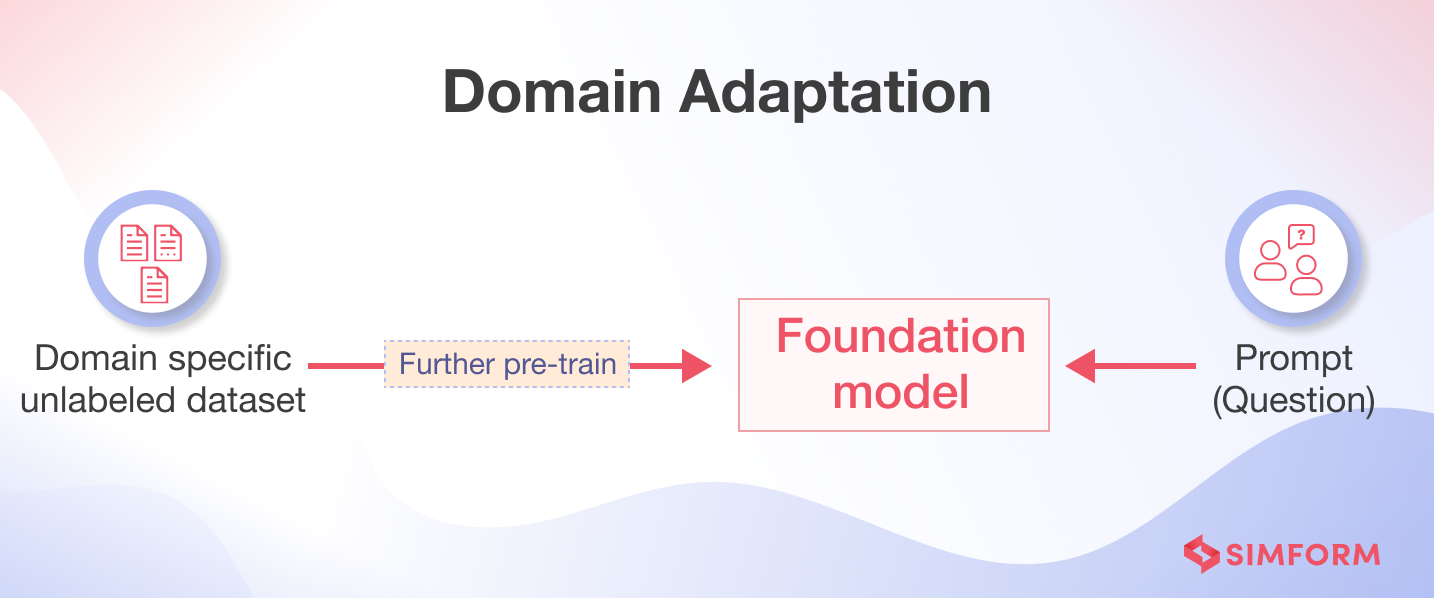

2. Domain adaptation

Using the domain adaptation approach, you can train the foundation model with a large domain-specific dataset. If you build a generative AI application based on proprietary data, you can use the domain adaptation approach to customize the FMs. For example, healthcare startups and IVF labs can leverage the domain adaptation approach.

3. Retrieval-Augmented Generation(RAG)

RAG is an approach where FMs are augmented with an information retrieval system based on dense vector representations. Proprietary data is converted into text embeddings and stored in a vector database. When a user submits a query, the query is embedded in the same way, the most relevant passages are retrieved, and they are added to the prompt as context so that the model's answer is grounded in your data.
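The retrieval step can be illustrated with a self-contained toy. Here `embed` is a stand-in that just counts words; a real system would call an embedding model and query a vector database instead. The documents and the prompt template are invented for the example.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: word counts. A real system would use an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine of the angle between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, documents, top_k=1):
    """Return the top_k documents most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(
        documents,
        key=lambda doc: cosine_similarity(query_vector, embed(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query, documents, top_k=1):
    """Augment the user's question with the retrieved context."""
    context = "\n".join(retrieve(query, documents, top_k))
    return f"Answer using only this context.\n\nContext:\n{context}\n\nQuestion: {query}"


documents = [
    "Refunds are processed within five business days",
    "Our office is closed on public holidays",
    "Shipping takes two weeks for international orders",
]
print(build_prompt("How long do refunds take", documents))
```

The resulting prompt is what would be sent to the FM, so the model answers from the retrieved passage rather than from its training data alone.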

However, if you want to build an AI model from scratch, you can use Amazon SageMaker to train the model. Here is a brief overview of the process:

- Prepare the data: Use your Amazon SageMaker Notebook to preprocess the data you need to train.

- Create a training job: In the SageMaker console, create a training job that includes the URL of the Amazon S3 bucket where you’ve stored the training data, the compute resources for model training, and the URL of the S3 bucket where you want to store the output of the job.

- Train the model: Once the training job is set up, you can train the machine learning model using SageMaker’s built-in algorithms or by bringing your own training script with a model built on popular machine learning frameworks.

- Evaluate model performance: After training, evaluate your machine learning model’s performance to ensure the target accuracy for your application.
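The same training job can be created programmatically instead of through the console. The sketch below assembles a request for the boto3 SageMaker client's `create_training_job` call. Every value passed in (job name, container image URI, IAM role ARN, S3 locations) is a placeholder you would supply, and the instance type, volume size, and runtime limit are illustrative defaults rather than recommendations.

```python
def build_training_job_request(
    job_name,
    image_uri,
    role_arn,
    train_s3_uri,
    output_s3_uri,
    instance_type="ml.m5.xlarge",
    instance_count=1,
    max_runtime_seconds=3600,
):
    """Assemble keyword arguments for SageMaker's create_training_job."""
    return {
        "TrainingJobName": job_name,
        "AlgorithmSpecification": {
            "TrainingImage": image_uri,      # built-in algorithm or your own container
            "TrainingInputMode": "File",
        },
        "RoleArn": role_arn,
        "InputDataConfig": [
            {
                "ChannelName": "train",
                "DataSource": {
                    "S3DataSource": {
                        "S3DataType": "S3Prefix",
                        "S3Uri": train_s3_uri,  # where the training data lives
                        "S3DataDistributionType": "FullyReplicated",
                    }
                },
            }
        ],
        "OutputDataConfig": {"S3OutputPath": output_s3_uri},  # model artifacts
        "ResourceConfig": {
            "InstanceType": instance_type,
            "InstanceCount": instance_count,
            "VolumeSizeInGB": 50,
        },
        "StoppingCondition": {"MaxRuntimeInSeconds": max_runtime_seconds},
    }


def start_training_job(request, region="us-east-1"):
    """Submit the job (needs AWS credentials and SageMaker permissions)."""
    import boto3  # imported lazily; only required for the real call

    client = boto3.client("sagemaker", region_name=region)
    return client.create_training_job(**request)["TrainingJobArn"]
```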

Step 5: Build your application

You can leverage various AWS services to deploy your trained generative AI model into a complete application.

First, package and serialize your model and any dependencies and upload it to an S3 bucket. This allows for durable storage and easy model versioning.

Next, create a Lambda function that downloads the model file from S3, loads it into memory, preprocesses inputs, runs inferences, and returns output. Configure appropriate timeouts, memory allocation, concurrency limits, logging, and monitoring to ensure scalable and reliable performance.

Expose the Lambda function through API Gateway, which provides a scalable proxy and request-handling logic. Secure access to your API using IAM roles, usage plans, API keys, etc.
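A Lambda handler along these lines might look like the following sketch. It assumes the model was saved with `pickle` and exposes a `predict` method, and that two environment variables, `MODEL_BUCKET` and `MODEL_KEY` (hypothetical names), point at the artifact in S3. The return value follows the shape API Gateway expects from a Lambda proxy integration.

```python
import json
import os


def load_model_from_s3():
    """Download and unpickle the model. Only unpickle artifacts you trust."""
    import pickle
    import boto3  # imported lazily; only required inside Lambda

    local_path = "/tmp/model.pkl"  # /tmp is Lambda's writable scratch space
    boto3.client("s3").download_file(
        os.environ["MODEL_BUCKET"], os.environ["MODEL_KEY"], local_path
    )
    with open(local_path, "rb") as model_file:
        return pickle.load(model_file)


def _response(status_code, body):
    """Build an API Gateway proxy-integration response."""
    return {
        "statusCode": status_code,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(body),
    }


def make_handler(model_loader):
    """Create a handler that loads the model once and reuses it when warm."""
    cache = {}

    def handler(event, context):
        try:
            payload = json.loads(event.get("body") or "{}")
            prompt = payload["prompt"]
        except (json.JSONDecodeError, KeyError, TypeError):
            return _response(400, {"error": "Body must be JSON with a 'prompt' field"})
        if "model" not in cache:
            cache["model"] = model_loader()  # the slow step, done on first request
        return _response(200, {"output": cache["model"].predict(prompt)})

    return handler


# Entry point to configure in Lambda, e.g. "<module_name>.handler".
handler = make_handler(load_model_from_s3)
```

Passing the loader in as an argument keeps the handler testable locally with a stub model, and caching the loaded model avoids repeating the S3 download on every warm invocation.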

You have multiple hosting options for the client-facing UI: a static web app deployed on S3, CloudFront, or Amplify, or a dynamic one on EC2, Elastic Beanstalk, etc. Integrate UI requests with the backend API.

Alternatively, for containerized apps, deploy on ECS or AWS Fargate. For GraphQL APIs, use AWS AppSync with built-in filtering, caching, and data management capabilities.

Step 6: Test and deploy your application

Conducting thorough testing is crucial before deploying your application on AWS for production. This includes testing functionality through unit, integration, and end-to-end tests. Evaluating performance under load, stress, and scalability scenarios is equally important for generative AI applications.

Analyze potential biases in the data, model fairness, and outcome impacts to ensure ethical AI deployment. In addition, run vulnerability scanning, penetration testing, and compliance checks for enhanced data security.

Once testing is complete, deploy the application on AWS using infrastructure as code, automated deployment, A/B testing, and canary deployment. For example, you can use AWS Neuron, the SDK that helps deploy models on Inferentia accelerators, integrating with ML frameworks like PyTorch and TensorFlow.
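The idea behind a canary deployment is to send a small, stable slice of traffic to the new version before rolling it out to everyone. On AWS this is normally configured in the deployment service rather than written by hand, so the sketch below is only a conceptual illustration of the traffic split; the function name and the hashing scheme are assumptions.

```python
import hashlib


def route_request(user_id, canary_percent):
    """Deterministically assign a user to the 'canary' or 'stable' version."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return "canary" if bucket < canary_percent else "stable"


# With a 10% canary, roughly one user in ten sees the new model version,
# and the same user always lands on the same version.
assignments = [route_request(f"user-{i}", 10) for i in range(1000)]
print(assignments.count("canary"))
```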

Lastly, implement auto-scaling and fault-tolerant architectures to ensure reliability and scalability in production.

With the fundamentals of using AWS for generative AI covered, let’s now see some practical implementations. The next section will share examples of companies successfully leveraging AWS’s generative capabilities to solve business problems and drive innovation.

AWS generative AI in action

Let’s explore a few examples of successful GenAI app development by companies worldwide.

Booking.com builds generative AI-based CX using AWS

Booking.com wanted to create a generative AI application that provides customer recommendations on travel bookings. It uses AWS services like Amazon SageMaker to build, train, and deploy its ML models for the generative AI application.

Further, Booking.com uses Amazon Bedrock to scale generative AI capabilities, leveraging natural language models to offer enhanced recommendations. According to Thomas Davey, VP of BigData and Machine Learning at Booking.com, “With Amazon Bedrock, we can pick the right language models and fine-tune them with Booking.com data to deliver destination and accommodation recommendations that are tailored and relevant. Plus, we never have the risk of exposing our data; it stays safe within our secure ecosystem.”

Nearmap uses Amazon SageMaker to scale ML for Computer Vision Analytics

Nearmap offers organizations a dynamic lens for tracking structural and environmental changes. It has over 50 PB of visual data and needed a scalable solution that shortens AI model training.

To overcome the challenges of slow AI model training and the need for accuracy, Nearmap used Amazon SageMaker. It helped the Nearmap team build, train, and deploy ML models faster. Earlier, Nearmap’s AI model training required syncing data locally to multiple GPUs, which took longer. With Amazon SageMaker, the company managed to improve time to market.

Nearmap trains AI models using the data stored on Amazon S3, leveraging Amazon SageMaker’s capabilities.

Instabase built an AI hub for document digitization with AWS

Instabase Inc. built its AI Hub SaaS solution on AWS to provide customers an easy way to apply generative AI to process documents without needing to train machine learning models. By using AWS services like Amazon EC2 and Amazon EKS, they created a highly reliable platform to run large language models that can digitize, encode, and interpret documents uploaded by customers.

The move to AWS allowed Instabase to reduce compute costs by 30% while benefiting from automated performance improvements. Additionally, leveraging AWS’s inherent security and compliance capabilities enabled Instabase to achieve SOC 2 compliance rapidly. With the speed, agility, and innovation advantages provided by building on AWS, Instabase was able to go from concept to launching AI Hub in just 3 months.

The company can now pivot faster to support new technologies and improvements thanks to AWS’s flexible and fully managed infrastructure.

Jumio leverages Amazon SageMaker and Rekognition to build IDV

Jumio built over 50 machine learning models on Amazon SageMaker to validate the authenticity of government-issued IDs submitted by users. By leveraging Amazon SageMaker’s fully managed infrastructure and tools, Jumio efficiently experiments with different model architectures to detect increasingly sophisticated document tampering and forgery attempts.

The company also uses Amazon Rekognition’s face verification capabilities to match users’ selfies to their ID photos, ensuring accurate user authentication across age differences up to 10 years.

By going all in on AWS, Jumio can scale its identity verification globally, including meeting data privacy regulations across 200 countries and territories. The company offers flexible IDV solutions on AWS to balance security, conversion rate, and user experience based on each customer’s specific use case.

How does Simform enable generative AI development on AWS?

Simform is an AWS Premier Consulting Partner. Our team of 200+ certified professionals leverages best practices to deliver generative AI capabilities for your applications. Serving clients across healthcare, supply chain, marketing, and SaaS domains, Simform has been delivering AI capabilities with robust architecture.

Simform’s expertise was evident when one of the clients wanted to create an AI platform for healthcare companies to manage requests and payments. Accurately extracting data from unstructured formats like medical records, doctor’s notes, and patient correspondence was challenging.

Simform used Amazon Textract for data extraction and Amazon ECS on AWS Fargate for secure processing to solve these challenges. We used AWS WAF for system security and various AWS services such as Amplify, S3, DynamoDB, and Lambda for secure application development, storage, and processing.

Our hybrid approach:

- Reduced extraction costs by 35%

- Improved accuracy by 25% and processing time by 40%

- Cut data breaches by 95% and ensured HIPAA compliance

Creating generative applications with AWS tools does offer benefits, but choosing the right set of services requires experience and expertise. If you are looking to build a generative AI app with AWS and are stuck on which AWS services to use, Simform can help you. Contact us now to discuss your requirements and challenges with our AWS consultants.