In 2026, “AI agents” no longer mean chatbots that answer questions. In most enterprises, agents are being used to execute tasks across tools. They triage tickets, resolve routine requests, enrich CRM records, generate first-draft analyses, and coordinate workflows across systems.

The challenge is not whether agents can respond. The challenge is whether they can act reliably. They must follow constraints, use the right data, avoid risky actions, and stay observable when something goes wrong.

But not all AI agents are alike!

Some are simple and others complex; some are proactive and others utility-oriented; some learn over time while others stay fixed.

In this blog, we will explore the different types of AI agents and their possible applications in various sectors.

Types of AI Agents

AI agents can be categorized into different types based on how they perceive their environment and decide how to act, which in turn shapes their intelligence and capabilities. The main types are:

- Simple reflex agents

- Model-based reflex agents

- Goal-based agents

- Utility-based agents

- Learning agents

- Hierarchical agents

Understanding the characteristics of each type makes it easier to choose the right design for a task and to improve how the agent performs. Let’s look at each type of AI agent in detail.

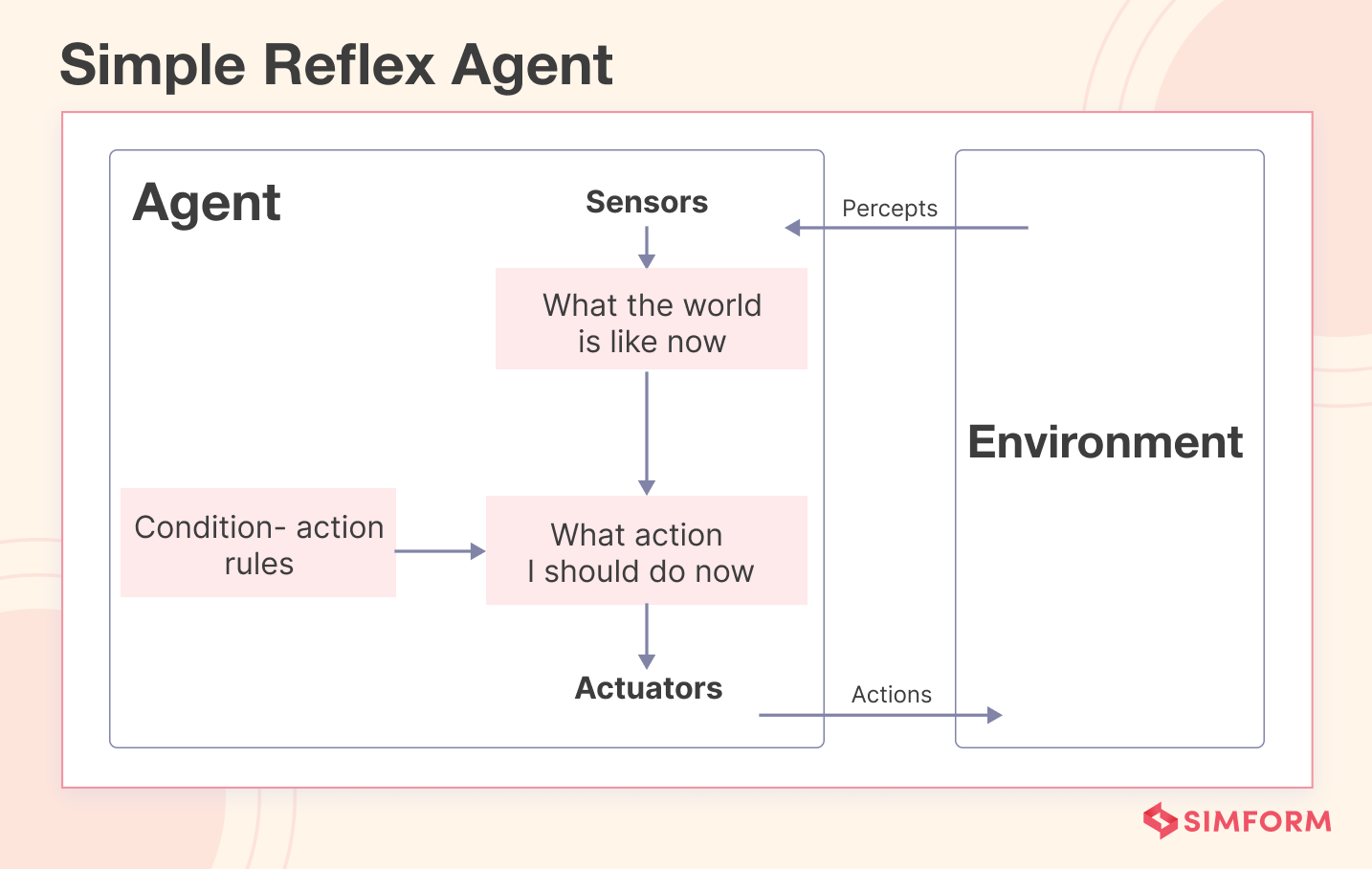

1. Simple Reflex Agent

A simple reflex agent is an AI system that follows predefined rules to make decisions. It responds only to the current situation, without considering past history or future consequences.

A simple reflex agent is suitable for environments with stable rules and straightforward actions, as its behavior is purely reactive and responsive to immediate environmental changes.

How does it work?

A simple reflex agent works by following condition-action rules, each of which specifies what action to take when a certain condition is met.

Example

Consider a rule-based system built to automate customer support interactions. If a customer’s message contains keywords indicating a password reset, the system automatically replies with a predefined response containing instructions on resetting the password.
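To make the condition-action idea concrete, here is a minimal Python sketch of such a keyword-matching support agent. The rule list, keywords, and reply texts are hypothetical placeholders, not taken from any real product; the point is only that the agent maps the current message straight to a reply.

```python
# Hypothetical condition-action rules: each pairs a condition (keywords to look
# for in the current message) with a predefined action (a canned reply).
RULES = [
    (("reset password", "forgot password", "password reset"),
     "To reset your password, use the 'Forgot password' link on the sign-in page."),
    (("refund", "money back"),
     "Please reply with your order number and we will look into your refund."),
]

# Action taken when no rule's condition matches.
DEFAULT_REPLY = "Thanks for reaching out. A support agent will get back to you."


def simple_reflex_agent(message: str) -> str:
    """Map the current percept (one message) directly to an action (a reply)."""
    text = message.lower()
    for keywords, reply in RULES:
        # The first rule whose condition holds fires; no history is consulted.
        if any(keyword in text for keyword in keywords):
            return reply
    return DEFAULT_REPLY


print(simple_reflex_agent("I need a password reset, please"))
```

Because the agent keeps no state, a follow-up message such as "That didn't work" matches no rule and falls through to the default reply, which illustrates the lack of memory listed among the limitations below.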

Advantages of simple reflex agents

- Easy to design and implement, requiring minimal computational resources

- Real-time responses to environmental changes

- Highly reliable when the sensors providing input are accurate and the rules are well designed

- No need for extensive training or sophisticated hardware

Limitations of simple reflex agents

Here are the limitations of the simple reflex agent:

- Prone to errors if the input sensors are faulty or the rules are poorly designed

- Have no memory or state, which limits their range of applicability

- Unable to handle partial observability or changes in the environment they have not been explicitly programmed for

- Limited to a specific set of actions and cannot adapt to new situations

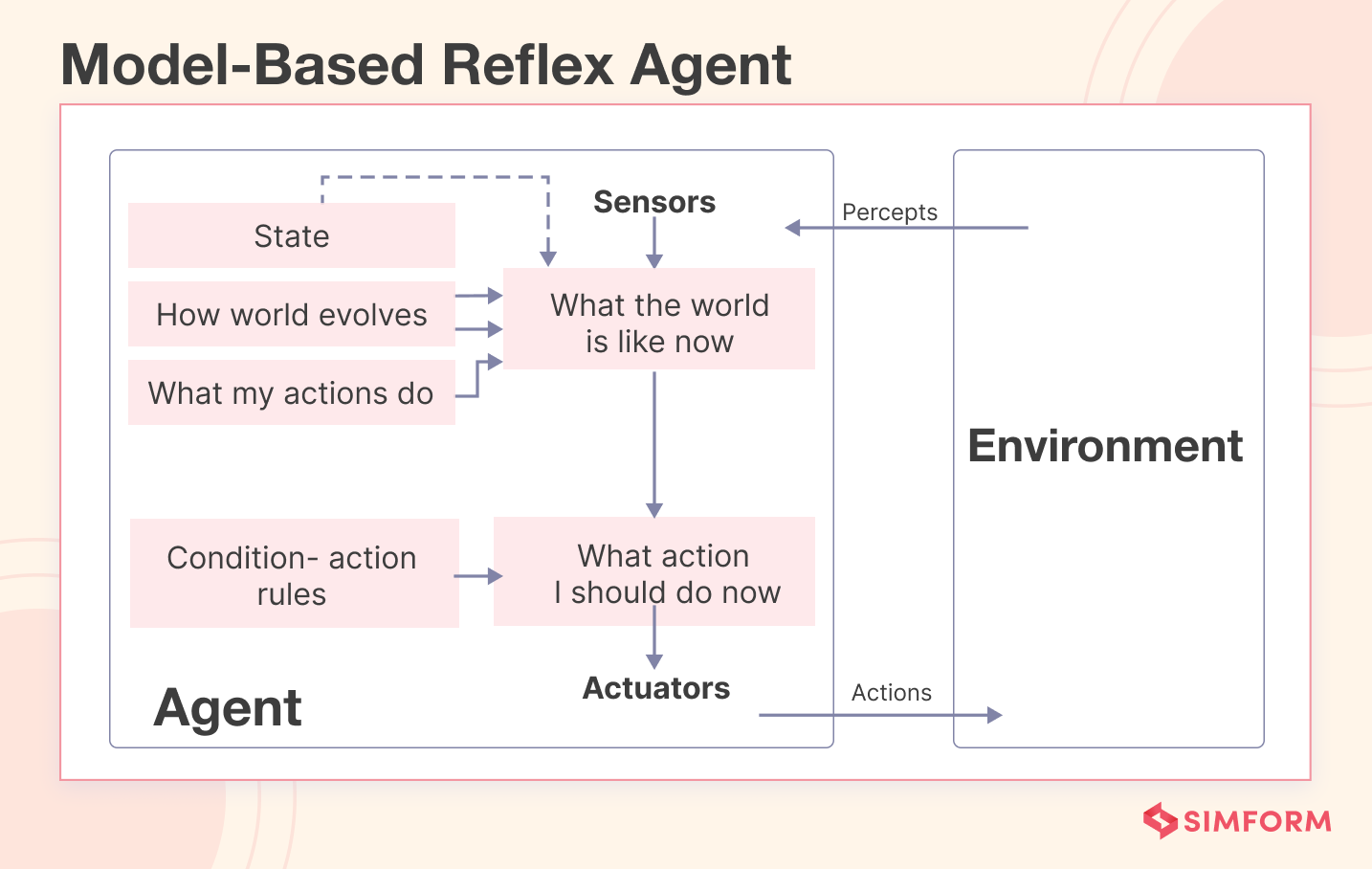

2. Model-based Reflex Agent

A model-based reflex agent performs actions based on the current percept and an internal state that represents the unobservable parts of the world. It updates its internal state based on two factors:

- How the world evolves independently of the agent

- How the agent’s actions affect the world

A cautionary model-based reflex agent is a variant of a model-based reflex agent that also considers the possible consequences of its actions before executing them.

How does it work?

A model-based reflex agent follows the condition-action rule, which specifies the appropriate action to take in a given situation. But unlike a simple reflex agent, a model-based agent also employs its internal state to assess the condition during the decision and action process.

The model-based reflex agent operates in four stages:

- Sense: It perceives the current state of the world with its sensors.

- Model: It constructs an internal model of the world from what it sees.

- Reason: It uses its model of the world to decide how to act based on a set of predefined rules or heuristics.

- Act: The agent carries out the action that it has chosen.
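The four stages can be sketched with the classic two-room vacuum world. This is a minimal illustration under simplifying assumptions: the rooms, percepts, and action names are hypothetical, and the model only tracks the effect of the agent's own actions (cleaning a room), not how the world changes on its own, such as dirt reappearing.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ModelBasedVacuumAgent:
    """Model-based reflex agent for a hypothetical two-room world ("A", "B").

    The agent can only sense the room it is in, so it keeps an internal
    model of each room's last known status (None means "unknown").
    """
    model: Dict[str, Optional[str]] = field(
        default_factory=lambda: {"A": None, "B": None})

    def act(self, location: str, is_dirty: bool) -> str:
        # Sense: the percept covers only the current room.
        # Model: fold the new observation into the internal state.
        self.model[location] = "dirty" if is_dirty else "clean"

        # Reason: condition-action rules evaluated against percept + state.
        if is_dirty:
            action = "suck"
            # Record the predicted effect of the agent's own action.
            self.model[location] = "clean"
        elif all(status == "clean" for status in self.model.values()):
            action = "no_op"  # the model says there is nothing left to do
        else:
            action = "right" if location == "A" else "left"

        # Act: hand the chosen action back to the environment.
        return action


agent = ModelBasedVacuumAgent()
for percept in [("A", True), ("A", False), ("B", False)]:
    print(percept, "->", agent.act(*percept))
```

Fed these percepts, the agent cleans room A, moves right, and then stops once its internal model marks both rooms as clean. A simple reflex agent could not stop there, because it would not remember that room A was already cleaned.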

Example

Agents built with Amazon Bedrock can serve as an example of a cautionary model-based reflex agent.

Amazon Bedrock is an AWS service that provides access to foundation models, which such agents use to interpret situations, gain insights, and make informed decisions for planning and optimization.

By relying on these models, a Bedrock-based agent can predict outcomes and choose its actions accordingly. Its behavior can be refined as it is given new real-world data, allowing it to adapt and optimize its operations.

Before acting, the agent can weigh different scenarios and select a strategy, which is what makes it cautionary rather than purely reactive.

Advantages of model-based reflex agents

- Quick and efficient decision-making based on their understanding of the world

- Better equipped to make accurate decisions by constructing an internal model of the world

- Adaptability to changes in the environment by updating their internal models

- More informed and strategic choices by using their internal state and rules to assess the condition

Disadvantages of model-based reflex agents

- Building and maintaining models can be computationally expensive

- The models may not capture the real-world environment’s complexity very well

- Models cannot anticipate all potential situations that may arise

- Models need to be updated often to stay current

- Models may pose challenges in terms of interpretation and comprehension

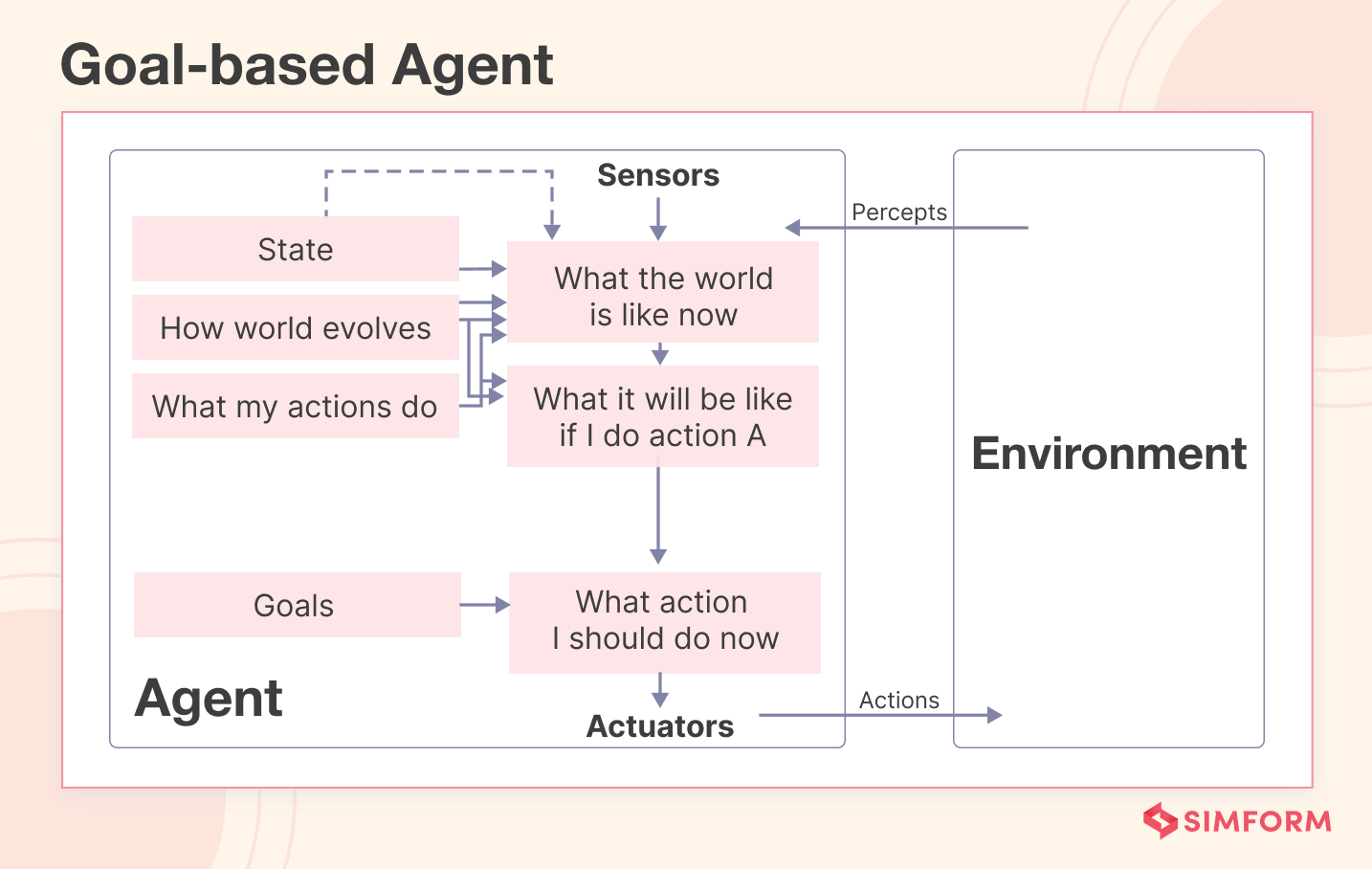

3. Goal-based Agents

Goal-based agents are AI agents that use information from their environment to achieve specific goals. They employ search algorithms to find the most efficient path towards their objectives within a given environment.

They may still follow predefined rules and take specific actions under certain conditions, but unlike reflex agents, they select those actions according to whether they move the agent closer to its goal.

Goal-based agents are easy to design and can handle complex tasks. They can be used in various applications like robotics, computer vision, and natural language processing.

Unlike simpler reflex agents, a goal-based agent can work out the best course of action depending on the outcome or goal it wants to reach.

How does it work?

Given a goal, a goal-based agent tries to choose the best strategy to achieve it. It then uses search algorithms and heuristics to find an efficient path to the goal.

The working pattern of the goal-based agent can be divided into five steps:

- Perception: The agent perceives its environment using sensors or other input devices to collect information about its surroundings.

- Reasoning: The agent analyzes the information collected and decides on the best course of action to achieve its goal.

- Action: The agent takes actions to achieve its goal, such as moving or manipulating objects in the environment.

- Evaluation: After taking action, the agent evaluates its progress towards the goal and adjusts its actions, if necessary.

- Goal Completion: Once the agent has achieved its goal, it either stops working or begins working on a new goal.
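A minimal Python sketch of this loop, assuming a hypothetical building map and using breadth-first search as the planning algorithm (one search method among many; real agents often use heuristic search such as A*). When a move fails because a door turns out to be locked, the agent records that, replans from its current position, and keeps going until it reaches the goal or runs out of routes.

```python
from collections import deque
from typing import Dict, List, Optional, Set, Tuple

# Hypothetical building map: which rooms connect to which.
GRAPH: Dict[str, List[str]] = {
    "lobby": ["hall", "stairs"],
    "hall": ["lobby", "lab"],
    "stairs": ["lobby", "lab"],
    "lab": ["hall", "stairs", "server_room"],
    "server_room": ["lab"],
}

Door = Tuple[str, str]


def bfs_plan(start: str, goal: str, blocked: Set[Door]) -> Optional[List[str]]:
    """Breadth-first search for the route with the fewest moves, avoiding blocked doors."""
    frontier = deque([[start]])
    visited = {start}
    while frontier:
        path = frontier.popleft()
        if path[-1] == goal:
            return path
        for nxt in GRAPH[path[-1]]:
            if nxt not in visited and (path[-1], nxt) not in blocked:
                visited.add(nxt)
                frontier.append(path + [nxt])
    return None  # the goal is unreachable with what the agent currently knows


def goal_based_agent(start: str, goal: str, locked: Set[Door]) -> List[str]:
    """Perceive, reason, act, and evaluate until the goal is reached or impossible.

    `locked` is the environment's hidden truth; the agent only learns about a
    locked door when it tries to walk through it.
    """
    position = start
    known_blocked: Set[Door] = set()
    route = [start]
    while position != goal:                             # goal completion check
        plan = bfs_plan(position, goal, known_blocked)  # reasoning
        if plan is None:
            break                                       # no known route left: stop
        door = (position, plan[1])
        if door in locked:                              # perception: the move fails
            known_blocked.add(door)                     # evaluation: adjust, then replan
            continue
        position = plan[1]                              # action: take one step
        route.append(position)
    return route


print(goal_based_agent("lobby", "server_room", locked={("hall", "lab")}))
```

With the hall-to-lab door locked, the printed route is ['lobby', 'hall', 'lobby', 'stairs', 'lab', 'server_room']: the agent discovers the lock in the hall, updates what it knows, and replans via the stairs.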

Example

Google Gemini can be viewed as a goal-based agent, although it is also a learning agent.

As a goal-based agent, its objective is to provide high-quality responses to user queries. It chooses actions that are likely to help users find the information they seek and obtain accurate, helpful responses.

Advantages of goal-based agents

- Simple to implement and understand

- Efficient for achieving a specific goal

- Easy to evaluate performance based on goal completion

- It can be combined with other AI techniques to create more advanced agents

- Well-suited for well-defined, structured environments

- It can be used for various applications, such as robotics, game AI, and autonomous vehicles.

Disadvantages of goal-based agents

- Limited to a specific goal

- Unable to adapt to changing environments

- Ineffective for complex tasks that have too many variables

- Requires significant domain knowledge to define goals


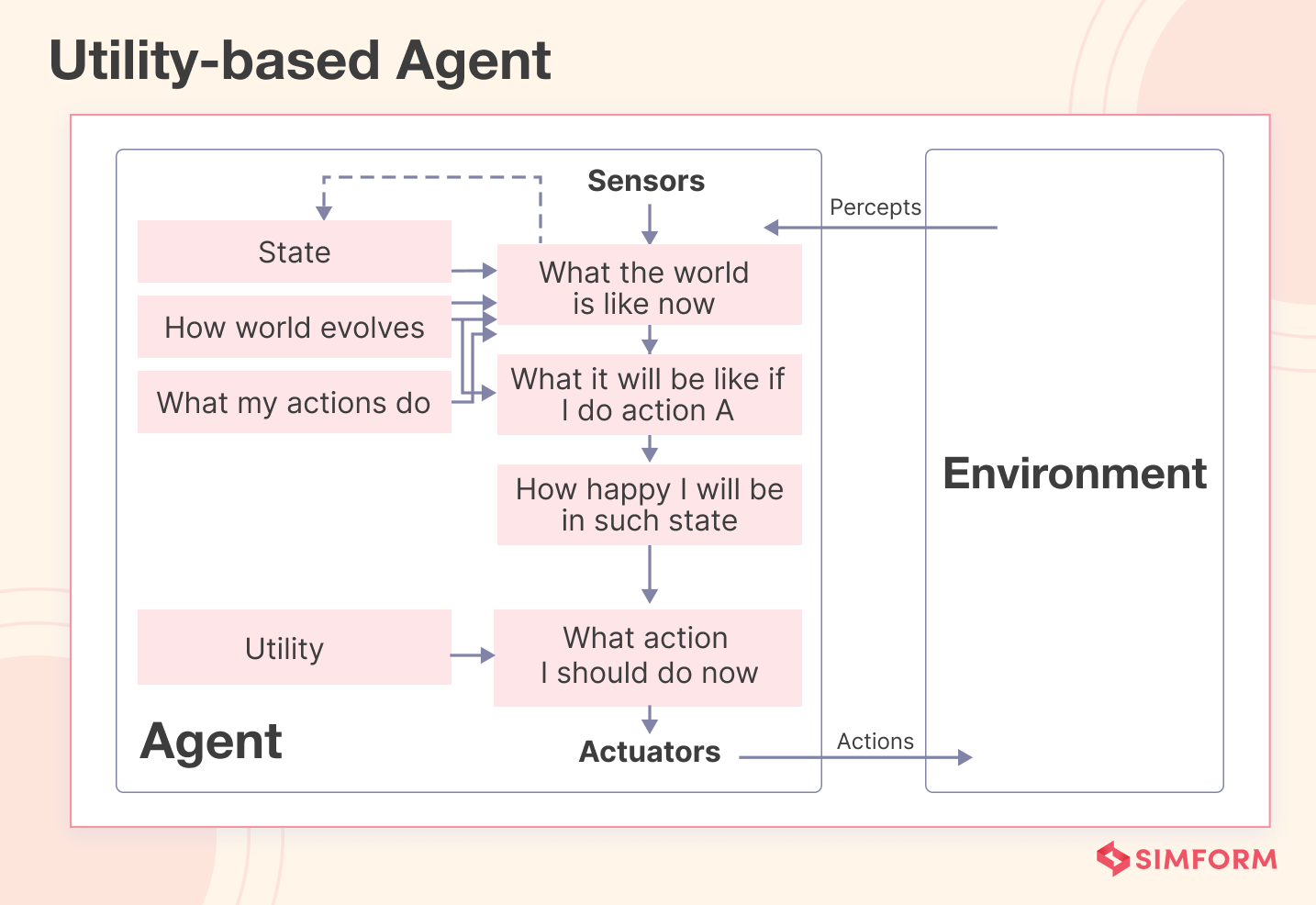

4. Utility-based Agents

Utility-based agents are AI agents that make decisions based on maximizing a utility function or value. They choose the action with the highest expected utility, which measures how good the outcome is.

This helps them deal with complex and uncertain situations more flexibly and adaptively. Utility-based agents are often used in applications where they have to compare and select among multiple options, such as resource allocation, scheduling, and game-playing.

How does it work?

A utility-based agent aims to choose actions that lead to a high utility state. To achieve this, it needs to model its environment, which can be simple or complex.

Then, it evaluates the expected utility of each possible action based on the probability distribution over that action’s outcomes and the utility function.

Finally, it selects the action with the highest expected utility and repeats this process at each time step.
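The expected-utility calculation can be illustrated with a small route-choice example. The actions, probabilities, and utility values below are invented for illustration; the agent simply computes EU(a) = Σ P(outcome | a) × U(outcome) for each action and picks the largest.

```python
from typing import Dict, List, Tuple

# Hypothetical outcome model: for each action, (probability, outcome) pairs.
OUTCOMES: Dict[str, List[Tuple[float, str]]] = {
    "highway": [(0.8, "on_time"), (0.2, "very_late")],
    "side_streets": [(0.5, "on_time"), (0.5, "slightly_late")],
}

# Hypothetical utility function: how good each outcome is (higher is better).
UTILITY: Dict[str, float] = {"on_time": 10.0, "slightly_late": 6.0, "very_late": -20.0}


def expected_utility(action: str) -> float:
    """EU(a) = sum over outcomes s of P(s | a) * U(s)."""
    return sum(p * UTILITY[outcome] for p, outcome in OUTCOMES[action])


def choose_action() -> str:
    """Pick the action with the highest expected utility."""
    return max(OUTCOMES, key=expected_utility)


for action in OUTCOMES:
    print(action, expected_utility(action))
print("chosen:", choose_action())
```

Here the highway scores 0.8 × 10 + 0.2 × (-20) = 4, while side streets score 0.5 × 10 + 0.5 × 6 = 8, so the agent chooses side streets even though the highway is more likely to be on time: the utility function penalizes being very late heavily. Repeating this choice at every time step gives the loop described above.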

Example

Consider an AI assistant whose goal is to help cardmembers maximize the rewards and benefits they get from their cards. Such an assistant is a utility-based agent.

To achieve its goal, it uses a utility function that assigns numerical values representing success or happiness to different states (situations cardmembers face, such as making purchases, paying bills, or redeeming rewards). It then compares the outcomes of different actions in each state and makes trade-off decisions based on their utility values.

Furthermore, it uses heuristics and AI techniques to simplify and improve decision-making.

Advantages of utility-based agents

- Handles a wide range of decision-making problems

- Learns from experience and adjusts their decision-making strategies

- Offers a consistent and objective framework for decision-making

Disadvantages of utility-based agents

- Requires an accurate model of the environment; an inaccurate model leads to decision-making errors

- Computationally expensive and requires extensive calculations

- Does not take moral or ethical concerns into account

- Difficult for humans to understand and validate

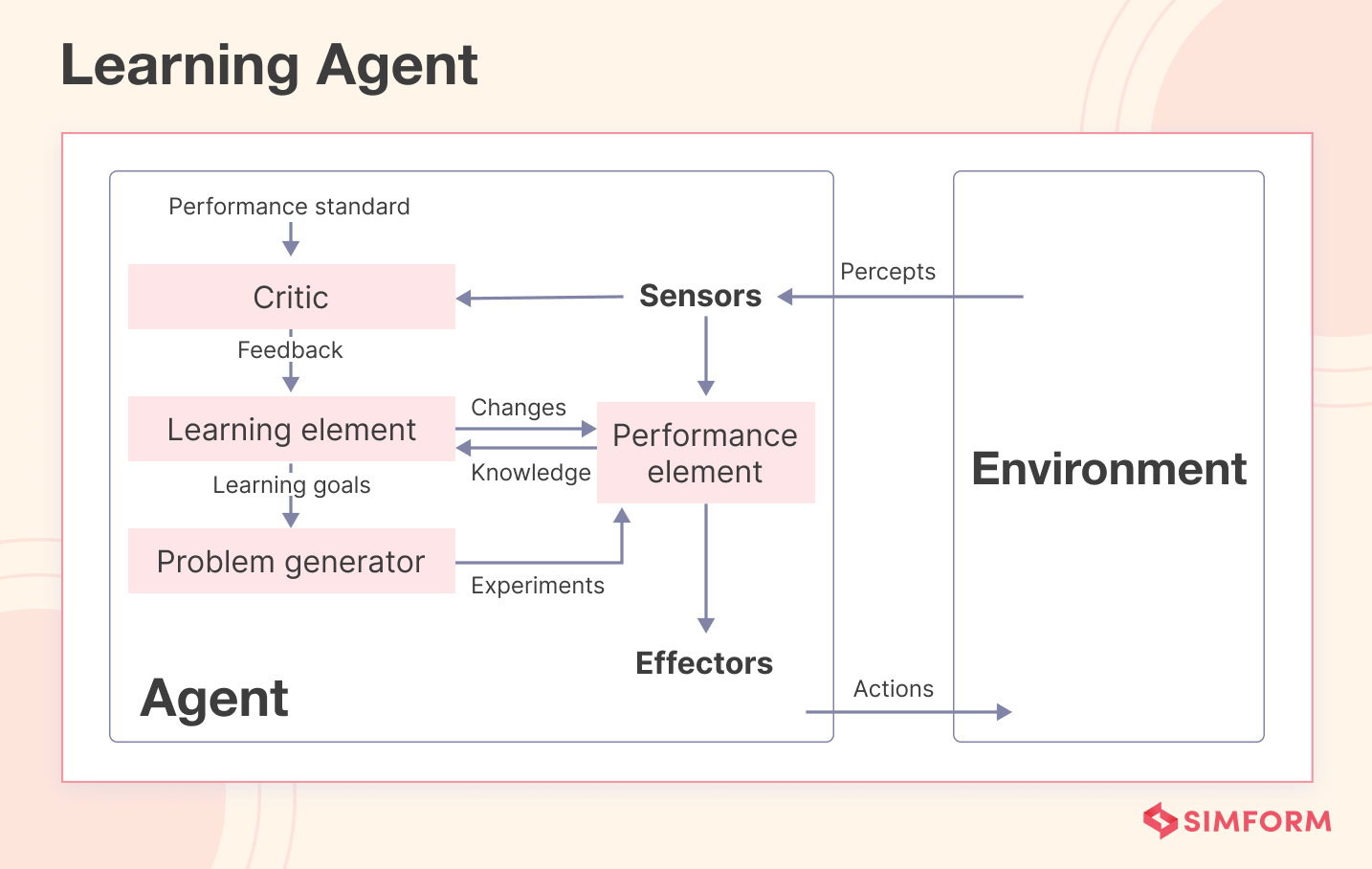

5. Learning Agents

An AI learning agent is a software agent that can learn from past experiences and improve its performance. It initially acts with basic knowledge and adapts automatically through machine learning.

The learning agent comprises four main components:

- Learning Element: It is responsible for learning and making improvements based on the experiences it gains from its environment.

- Critic: It gives feedback to the learning element by comparing the agent’s performance against a predefined standard.

- Performance Element: It selects and executes external actions based on the information from the learning element and the critic.

- Problem Generator: It suggests actions to create new and informative experiences for the learning element to improve its performance.

How does it work?

AI learning agents follow a cycle of observing, learning, and acting based on feedback. They interact with their environment, learn from feedback, and modify their behavior for future interactions.

Here’s how the cycle works:

- Observation: The learning agent observes its environment through sensors or other inputs.

- Learning: The agent analyzes data using algorithms and statistical models, learning from feedback on its actions and performance.

- Action: Based on what it has learned, the agent decides how to behave and acts in its environment.

- Feedback: The agent receives feedback about its actions and performance through rewards, penalties, or environmental cues.

- Adaptation: Using feedback, the agent changes its behavior and decision-making processes, updating its knowledge and adapting to its environment.

This cycle repeats over time, allowing the agent to continuously improve its performance and adapt to changing circumstances.
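One way to see the four components and this cycle working together is an epsilon-greedy learner for a simple choice problem. This is a minimal sketch, not how any particular product works: the two reply styles, their hidden satisfaction rates, the 1-to-5 rating scale, and the standard of 4 used by the critic are all hypothetical.

```python
import random
from typing import Callable, Dict, List


class LearningAgent:
    """Epsilon-greedy learner whose methods mirror the four components above."""

    def __init__(self, actions: List[str], epsilon: float = 0.1, seed: int = 0):
        self.actions = actions
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.value: Dict[str, float] = {a: 0.0 for a in actions}  # learned estimates
        self.count: Dict[str, int] = {a: 0 for a in actions}

    def performance_element(self) -> str:
        """Exploit: choose the action currently believed to be best."""
        return max(self.actions, key=lambda a: self.value[a])

    def problem_generator(self) -> str:
        """Explore: suggest a random action to gain new, informative experience."""
        return self.rng.choice(self.actions)

    def critic(self, rating: int, standard: int = 4) -> float:
        """Feedback: 1.0 if the outcome meets the performance standard, else 0.0."""
        return 1.0 if rating >= standard else 0.0

    def learning_element(self, action: str, reward: float) -> None:
        """Adaptation: move the action's estimate toward the observed reward (running mean)."""
        self.count[action] += 1
        self.value[action] += (reward - self.value[action]) / self.count[action]

    def step(self, environment: Callable[[str], int]) -> None:
        """One cycle: act, observe the result, get feedback, and adapt."""
        explore = self.rng.random() < self.epsilon
        action = self.problem_generator() if explore else self.performance_element()
        rating = environment(action)  # act, then observe the customer's rating
        self.learning_element(action, self.critic(rating))


# Hypothetical environment: chance that each reply style earns a 5-star rating.
SATISFACTION = {"short_reply": 0.3, "step_by_step_reply": 0.7}
env_rng = random.Random(42)


def environment(action: str) -> int:
    return 5 if env_rng.random() < SATISFACTION[action] else 2


agent = LearningAgent(list(SATISFACTION))
for _ in range(2000):
    agent.step(environment)
print(agent.performance_element(), agent.value)
```

Over many cycles the agent typically settles on step_by_step_reply, with its value estimate approaching the hidden 0.7 success rate. The problem generator's occasional random choices are what let it discover that option in the first place, since the performance element alone would keep repeating whichever action looked best early on.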

Example

A good example of a learning agent program is AutoGPT, created by Significant Gravitas.

Imagine you want to buy a smartphone, so you prompt AutoGPT to conduct market research on the top ten smartphones and summarize their pros and cons.

Once given this task, AutoGPT explores various websites and sources to analyze the pros and cons of each phone. It evaluates the authenticity of websites using a sub-agent program. Finally, it generates a detailed report summarizing its findings and listing the pros and cons of the top ten smartphones.

Advantages of learning agents

- The agent can turn high-level instructions into concrete actions based on what it has learned

- Learning intelligent agents can follow basic commands, like spoken instructions, to perform tasks

- Unlike classic agents that perform predefined actions, learning agents can evolve with time

- Learning agents can take utility measurements into account, which makes their decisions more realistic

Disadvantages of learning agents

- Prone to biased or incorrect decision-making

- High development and maintenance costs

- Requires significant computing resources

- Dependence on large amounts of data

- Lack of human-like intuition and creativity

6. Hierarchical Agents

Hierarchical agents are structured in a hierarchy, with high-level agents overseeing lower-level agents. The number of levels depends on the complexity of the system.

Hierarchical agents are useful in various applications such as robotics, manufacturing, and transportation. They excel in coordinating and prioritizing multiple tasks and sub-tasks.

How does it work?

Hierarchical agents work just like a corporate organization. They organize tasks in a structured hierarchy consisting of different levels, wherein higher-level agents supervise and decompose goals into smaller tasks.

Subsequently, lower-level agents execute these tasks and provide progress reports.

In the case of complex systems, there may be intermediate-level agents who coordinate the activities of lower-level agents with higher ones.
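A two-level version of this structure can be sketched in a few lines of Python. The goal, subtasks, and worker names below are hypothetical (a warehouse order), and intermediate coordinators are left out for brevity; the manager decomposes the goal, delegates each subtask, and reads the progress reports that come back.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Report:
    """Progress report sent from a lower-level agent up to its manager."""
    worker: str
    task: str
    done: bool


class WorkerAgent:
    """Low-level agent: executes the subtasks it is given and reports back."""

    def __init__(self, name: str, available: bool = True):
        self.name = name
        self.available = available  # hypothetical stand-in for real execution

    def execute(self, task: str) -> Report:
        return Report(self.name, task, done=self.available)


class ManagerAgent:
    """High-level agent: decomposes a goal into subtasks and delegates them."""

    # Hypothetical decomposition: goal -> ordered (skill, subtask) pairs.
    PLANS: Dict[str, List[Tuple[str, str]]] = {
        "fulfil_order": [("picking", "pick items"),
                         ("packing", "pack box"),
                         ("shipping", "book courier")],
    }

    def __init__(self, workers: Dict[str, WorkerAgent]):
        self.workers = workers  # skill -> the worker responsible for it

    def run(self, goal: str) -> Tuple[bool, List[Report]]:
        plan = self.PLANS[goal]
        reports: List[Report] = []
        for skill, subtask in plan:
            report = self.workers[skill].execute(subtask)  # delegate downward
            reports.append(report)                          # progress flows upward
            if not report.done:
                break  # later subtasks depend on this one, so stop here
        goal_done = len(reports) == len(plan) and all(r.done for r in reports)
        return goal_done, reports


workers = {
    "picking": WorkerAgent("picker-1"),
    "packing": WorkerAgent("packer-1"),
    "shipping": WorkerAgent("shipper-1", available=False),
}
done, reports = ManagerAgent(workers).run("fulfil_order")
print("goal done:", done)
for report in reports:
    print(report)
```

Because the shipping worker is unavailable, the manager receives a failed report for "book courier" and reports the goal as not done, leaving it to a higher level (or a human) to decide what happens next.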

Example

UniPi, by Google, is an innovative hierarchical AI agent that utilizes text and video as a universal interface, enabling it to learn diverse tasks across various environments.

UniPi comprises a high-level policy that generates instructions and demonstrations and a low-level policy that executes tasks. The high-level policy adapts to various environments and tasks, while the low-level policy learns through imitation and reinforcement learning.

This hierarchical setup enables UniPi to combine high-level reasoning and low-level execution effectively.

Advantages of hierarchical agents

- Hierarchical agents offer resource efficiency by assigning tasks to the most suitable agents and avoiding duplication of effort.

- The hierarchical structure enhances communication by establishing clear lines of authority and direction.

- Hierarchical Reinforcement Learning (HRL) improves agent decision-making by reducing action complexity and enhancing exploration. It employs high-level actions to simplify the problem and facilitate agent learning.

- Hierarchical decomposition reduces computational complexity by representing the overall problem in a more concise and reusable form.

Disadvantages of hierarchical agents

- Designing and managing the hierarchy itself adds complexity to problem-solving.

- Fixed hierarchies limit adaptability in changing or uncertain environments, hindering the agent’s ability to adjust or find alternatives.

- Hierarchical agents follow a top-down control flow, which can cause bottlenecks and delays even if lower-level tasks are ready.

- Hierarchies may not transfer well across problem domains, so a new hierarchy often has to be created for each domain, which takes time and expertise.

- Training hierarchical agents is challenging due to the need for labeled training data and careful algorithmic design. Applying standard machine learning techniques to improve performance becomes difficult due to the complexity involved.

Real-life examples of AI agents

The following real-life examples show how organizations are putting AI agents to work, especially in customer service and support. Exploring them gives a clearer picture of how AI is transforming industries and improving our daily lives:

- Klarna’s AI Assistant: Klarna reported its AI assistant handled two-thirds of customer service chats in its first month, covering issues like refunds, returns, payments, and cancellations.

- Intel “Ask Intel”: Intel launched an AI support assistant built with Microsoft Copilot Studio that can open and update support cases, check warranty status, provide troubleshooting steps, and escalate to humans when needed.

- Eneco: Eneco built a multilingual AI agent on Microsoft Copilot Studio and deployed it on its website in about three months to improve customer support at scale.

- Oscar Health member-facing chatbots: Oscar deployed integrated chatbots to answer benefits and cost questions and help members navigate care. The platform answers 58% of benefits questions instantly and handles 39% of benefits messages without human escalation.

- Lowe’s Mylow and Mylow Companion (2025): Lowe’s deployed these agents to provide home-improvement guidance to customers online and to store associates across 1,700+ stores. The assistants handle nearly 1 million questions per month. The online conversion rate more than doubles when customers engage with Mylow, and customer satisfaction scores increased by 200 basis points when associates used Mylow Companion.

Conclusion

AI agents are becoming more capable, but the biggest shift in 2026 is not autonomy. It is reliability. Teams need agents that can take useful actions inside real workflows while staying constrained, testable, and observable.

Different agent types fit different environments. Reflex agents work for stable rules. Goal-based and utility-based agents help with planning and trade-offs. Learning and hierarchical agents can handle complexity, but they also introduce higher operating risk if feedback loops and guardrails are weak.

If you are adopting agents in an enterprise, the winning approach is usually incremental. Start with narrow workflows, instrument them well, add permissions cautiously, and scale only after the system proves it can operate safely.
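As one illustration of starting narrow and adding permissions cautiously, an agent's tool calls can be routed through a small wrapper that enforces an allowlist, requires human approval for risky actions, and logs every call. This is a minimal sketch: the tool names and the approval callback are hypothetical, and production systems would typically rely on their platform's own permission and tracing features.

```python
import logging
from typing import Any, Callable, Dict, Optional, Set

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("agent.tools")


class GuardedToolbox:
    """Wraps an agent's tools with an allowlist, approval gates, and logging."""

    def __init__(self, tools: Dict[str, Callable[..., Any]],
                 allowed: Set[str], needs_approval: Set[str]):
        self.tools = tools
        self.allowed = allowed                # tools the agent may call at all
        self.needs_approval = needs_approval  # tools that also need a human "yes"

    def call(self, name: str, args: Dict[str, Any],
             approve: Optional[Callable[[str, Dict[str, Any]], bool]] = None) -> Any:
        if name not in self.allowed:
            log.warning("blocked tool=%s args=%s", name, args)
            raise PermissionError(f"tool {name!r} is not allowed")
        if name in self.needs_approval and not (approve and approve(name, args)):
            log.warning("approval denied tool=%s args=%s", name, args)
            raise PermissionError(f"tool {name!r} needs human approval")
        log.info("calling tool=%s args=%s", name, args)
        result = self.tools[name](**args)
        log.info("tool=%s returned %r", name, result)  # observable audit trail
        return result


# Hypothetical tools for a support workflow.
TOOLS: Dict[str, Callable[..., Any]] = {
    "lookup_order": lambda order_id: {"order_id": order_id, "status": "shipped"},
    "issue_refund": lambda order_id, amount: f"refunded {amount} on {order_id}",
    "delete_account": lambda user_id: f"deleted {user_id}",
}

toolbox = GuardedToolbox(TOOLS, allowed={"lookup_order", "issue_refund"},
                         needs_approval={"issue_refund"})
print(toolbox.call("lookup_order", {"order_id": "A1"}))
```

Read-only lookups run freely, refunds run only when the approval callback returns True, and account deletion is refused outright. Widening the allowlist later is a deliberate, reviewable change rather than something the agent can do on its own.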
