A lot has changed since I started building apps. Fifteen years ago we practically had to build a server from scratch; then cloud infrastructure services arrived and let us start from pre-configured, connected servers. That changed how custom software development works.

However, it still required us to write a lot of code (for good reason).

In 2016, something called Serverless came up.

Serverless is a cloud model in which we don't have to write a lot of custom code, define communication protocols, set up server configurations, and so on. A short intro to serverless follows a few sections later, but for now I want to emphasize why this is extremely important.

So, I was working with a Fortune 500 client that wanted to build a chat solution and make it production-ready within 25 days.

Writing a chat service and integrating other services with it is just one part; we can easily do that within 15 days. The difficult part was ensuring that we met the service level agreements and had everything set up properly to serve 100 business executives collaborating across the globe without performance problems.

Now, if you are in my shoes and want to build a chat app without waiting 3-4 months, this blog post is for you.

Why Did I Choose Serverless for a Real-Time Chat App?

So far we have talked about the ease and speed of deployment with serverless, which is much faster than with a traditional server-based environment.

When I was working with the Fortune 500 client, cost overruns and time constraints were the deciding factors in building the app. A time overrun can double or triple the cost of a project.

Why isn't this a concern when using serverless?

Serverless lets you work on managed infrastructure, which drastically reduces labour cost and gives you the flexibility to redeploy your key people to other tasks.

You no longer need to manage servers and can instead focus on building new features for your app.

Another concern for startups building an MVP is scaling. Scalability is a race against time.

GoChat, a chat app for Pokemon Go fans, is a well-known example of a scalability failure. It was built as an MVP without scalability in mind.

It grew to 1 million users in 5 days and went down the very next day.

Building a scalable app requires experience as well as resources.

With serverless you can adopt a pay-as-you-go model that can scale to more than 1 million users in less than 24 hours in a cost-efficient way.

What Are Serverless Architectures?

While building and running an application, there's undifferentiated heavy lifting such as installing software, managing servers, coordinating patch schedules, and scaling to meet demand.

Serverless architectures allow you to build and run applications and services without having to manage infrastructure.

Your application still runs on servers, but all the server management is done for you by Azure.

Over time, many event triggers have been introduced for Azure Functions. Using services such as Azure Event Grid, developers can respond to events and API calls by invoking serverless functions. This makes it easier to build event-driven workflows and enrich applications with additional functionality without managing underlying infrastructure.

Baseline Chat App Architecture

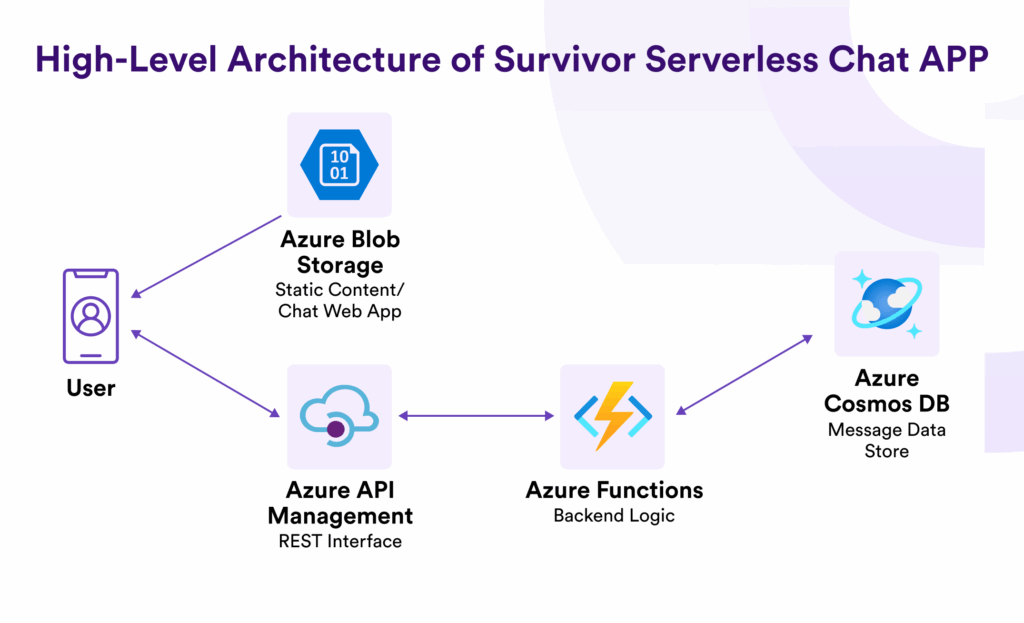

The chat application we will be building is a complete serverless architecture that delivers a baseline chat application on which additional functionality can be built.

The high-level architecture consists of the following components, which are launched automatically:

A storage container in Azure Blob Storage is created to store the static web page content of the chat application. The web browser downloads static content such as HTML, CSS, and JavaScript files from this web-facing repository. To improve performance and optimize delivery, this content can be distributed through a CDN such as Azure Content Delivery Network.

The JavaScript running on the client side in the browser calls backend APIs that are implemented using Azure Functions and exposed as web APIs through Azure API Management.

These functions are designed to be stateless and rely on a persistence layer to read and write data. For this, a fully managed database service such as Azure Cosmos DB is used to store chat messages and application data.
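To make the stateless idea concrete, here is a minimal sketch in plain Python rather than actual Azure Functions code. The names `ChatMessage`, `MessageStore`, `InMemoryStore`, `post_message`, and `get_messages` are hypothetical and not part of any Azure SDK; the in-memory store only stands in for the managed database so the example can run on its own.

```python
from dataclasses import dataclass, field
from typing import Protocol


@dataclass(frozen=True)
class ChatMessage:
    room: str
    sender: str
    text: str


class MessageStore(Protocol):
    """Persistence layer the handlers depend on (a managed database in the article)."""

    def append(self, message: ChatMessage) -> None: ...

    def list_for_room(self, room: str) -> list[ChatMessage]: ...


@dataclass
class InMemoryStore:
    """Hypothetical stand-in for the database, used only to make the sketch runnable."""

    _messages: list[ChatMessage] = field(default_factory=list)

    def append(self, message: ChatMessage) -> None:
        self._messages.append(message)

    def list_for_room(self, room: str) -> list[ChatMessage]:
        return [m for m in self._messages if m.room == room]


def post_message(store: MessageStore, room: str, sender: str, text: str) -> dict:
    """Stateless handler: validate the input, write to the store, return a response."""
    if not text.strip():
        return {"status": 400, "error": "empty message"}
    store.append(ChatMessage(room=room, sender=sender, text=text))
    return {"status": 201}


def get_messages(store: MessageStore, room: str) -> dict:
    """Stateless handler: everything it returns is read back from the store."""
    texts = [m.text for m in store.list_for_room(room)]
    return {"status": 200, "messages": texts}
```

Because the handlers keep nothing between calls, any instance of a function can serve any request, which is what lets the platform add or remove instances freely.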

Serverless functions can also be triggered by various events. Using services such as Azure Event Grid, developers can respond to events and API calls by automatically invoking functions in an event-driven architecture.
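The event-driven flow can be pictured with a small publish/subscribe sketch. This is plain Python, not the Azure Event Grid API; the `subscribe` and `publish` helpers and the `message.created` event name are assumptions made up for illustration.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]

# Maps an event type to the functions subscribed to it.
_subscriptions: dict[str, list[Handler]] = defaultdict(list)


def subscribe(event_type: str) -> Callable[[Handler], Handler]:
    """Decorator that registers a function as a handler for one event type."""

    def register(handler: Handler) -> Handler:
        _subscriptions[event_type].append(handler)
        return handler

    return register


def publish(event_type: str, payload: dict) -> int:
    """Invoke every handler subscribed to the event; return how many ran."""
    handlers = _subscriptions.get(event_type, [])
    for handler in handlers:
        handler(payload)
    return len(handlers)


# Collected here only so the effect of the handler is observable in the sketch.
notifications: list[str] = []


@subscribe("message.created")
def notify_room(payload: dict) -> None:
    notifications.append(f"{payload['sender']} posted in {payload['room']}")
```

In a managed setup the registry and the dispatch loop are the event service's job; the only part you write is the handler.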

There are also several variations of this architecture. For example, Azure API Management can directly connect APIs with other Azure services or event streams such as Azure Event Hubs, enabling scalable data ingestion and event-driven processing.

Once the infrastructure components are deployed using Azure infrastructure automation such as Azure Resource Manager templates, the result is a fully functioning chat application hosted in Azure Blob Storage, with Azure API Management and Azure Functions processing requests and Azure Cosmos DB serving as the persistent data store for chat messages.

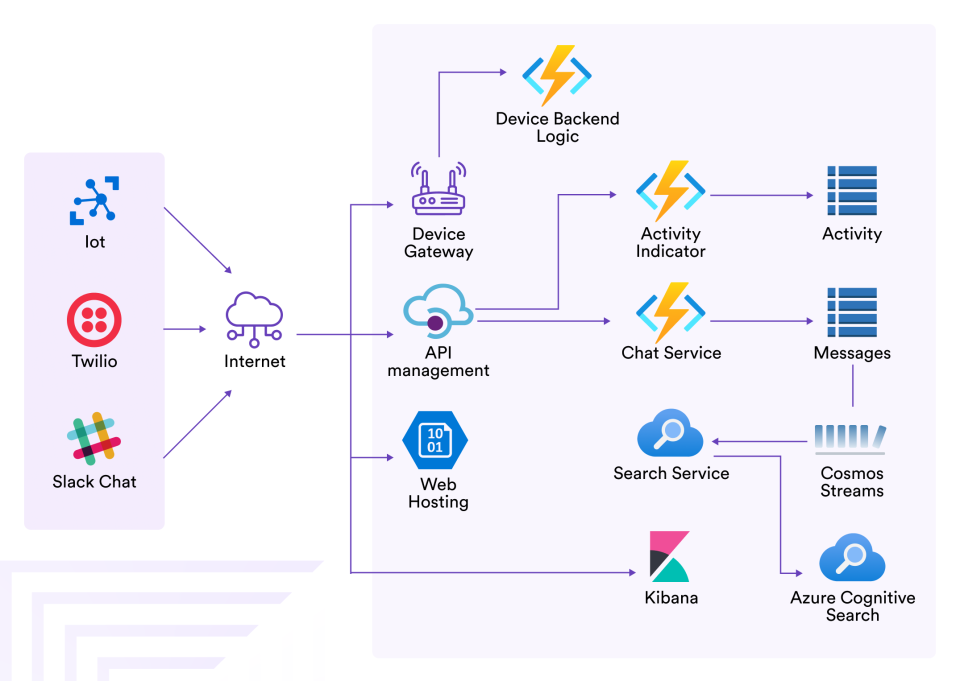

With this baseline chat application, additional functionality can be added, including:

- Integration of SMS/MMS via Twilio to send messages to the chat from SMS

- A “help me” panic button integrated with IoT devices

- Integration with Slack for receiving messages from another platform

- Typing indicators to show which users are actively typing
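As an illustration of the last item, a typing indicator can be modelled as a "last seen typing" timestamp per user that expires after a short window. This is only a sketch under assumptions: `TypingTracker` and the 5-second window are hypothetical, and in a stateless deployment the timestamps would live in the persistence layer rather than in memory.

```python
from dataclasses import dataclass, field

TYPING_TTL_SECONDS = 5.0  # assumed expiry window, not taken from the article


@dataclass
class TypingTracker:
    # (room, user) -> timestamp of the most recent "is typing" signal
    _last_seen: dict[tuple[str, str], float] = field(default_factory=dict)

    def mark_typing(self, room: str, user: str, now: float) -> None:
        """Record that the user sent a typing signal at time `now` (seconds)."""
        self._last_seen[(room, user)] = now

    def active_typers(self, room: str, now: float) -> list[str]:
        """Return users in the room whose last signal is younger than the TTL."""
        return sorted(
            user
            for (r, user), seen in self._last_seen.items()
            if r == room and now - seen < TYPING_TTL_SECONDS
        )
```

Passing `now` in explicitly keeps the logic deterministic and easy to test; a caller would normally supply `time.time()`.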

Best Practices for Improving the Chat App

For the most part, the design patterns you would see in a server-based environment also apply in a serverless environment. That said, it never hurts to revisit best practices while learning new ones. So let's review some key patterns we incorporated in our serverless chat application.

Decoupling your app

In our chat application, Azure Functions serve our business logic. Since users interact with the backend at the function level, it pays to split the logic up per function, so you can scale each piece of logic independently of the sources and destinations it serves.

Separating your data stores

Treat each data store as an isolated component of the service it supports. One common pitfall when working with microservices is to forget about the data layer. By keeping a data store specific to each service, you can better manage data-layer resources for that service.

Leverage Data Transformations up the Stack

While designing a web application you need to care about data transformation and compatibility. How will you handle data from different clients, systems, and users of your services?

Are you going to run different flavors of your environment for each incoming request?

Absolutely not!

With API gateways, transformation becomes a lot easier thanks to their built-in mapping templates. With these you can build data transformation and mapping logic into the API layer for requests and responses. This results in less work for you, as the API gateway is a managed service.
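The effect of such a mapping layer can be sketched as a set of small functions that turn each client's payload into one canonical message shape before the business logic sees it. This is plain Python, not a gateway template; the field names (`roomId`, `userName`, `message`, `From`, `To`, `Body`) and the choice to treat the SMS destination number as the room are assumptions for illustration.

```python
from typing import Callable


def from_web_client(payload: dict) -> dict:
    """Map a (hypothetical) browser payload to the canonical message shape."""
    return {
        "room": payload["roomId"],
        "sender": payload["userName"],
        "text": payload["message"].strip(),
    }


def from_sms_gateway(payload: dict) -> dict:
    """Map a (hypothetical) SMS webhook payload to the canonical message shape."""
    return {
        "room": payload["To"],  # assumption: one inbound number per room
        "sender": payload["From"],
        "text": payload["Body"].strip(),
    }


MAPPERS: dict[str, Callable[[dict], dict]] = {
    "web": from_web_client,
    "sms": from_sms_gateway,
}


def normalize(source: str, payload: dict) -> dict:
    """Pick the mapper for the source; reject sources with no mapping."""
    try:
        mapper = MAPPERS[source]
    except KeyError:
        raise ValueError(f"no mapping defined for source {source!r}") from None
    return mapper(payload)
```

With the mapping kept at the edge, the functions behind it only ever deal with one message format, no matter how many client types are added.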

Security Through Service Isolation and Least Privilege

In this application, permissions can be managed using Microsoft Entra ID and Azure Role-Based Access Control. These services allow administrators to define fine-grained access policies so that each service or component only receives the permissions it needs to operate.
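The least-privilege idea boils down to a deny-by-default check: each component is assigned one role, and a role lists only the actions it needs. The sketch below is plain Python, not the Azure RBAC API; the role names, principal names, and action strings are hypothetical and do not correspond to Azure built-in roles.

```python
# Each role grants only the actions that role needs (hypothetical names).
ROLE_PERMISSIONS: dict[str, frozenset[str]] = {
    "chat-reader": frozenset({"messages:read"}),
    "chat-writer": frozenset({"messages:read", "messages:write"}),
}

# Each component (principal) gets exactly one role (hypothetical assignments).
ASSIGNMENTS: dict[str, str] = {
    "history-function": "chat-reader",
    "post-message-function": "chat-writer",
}


def is_allowed(principal: str, action: str) -> bool:
    """Deny by default: unknown principals, roles, or actions are rejected."""
    role = ASSIGNMENTS.get(principal)
    if role is None:
        return False
    return action in ROLE_PERMISSIONS.get(role, frozenset())
```

A function that only reads chat history never receives write access, so a bug or compromise in that function cannot modify stored messages.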

Let's Develop a Serverless Real Time Chat App Together!

Conclusion

This is a change from how we built server-side applications before, and with it comes significant restructuring. Working with serverless will also require us to restructure our monitoring, but we will get used to that with time.

Auto-scaling and auto-provisioning save labor cost because we no longer need to perform many resource management activities ourselves. This is particularly valuable when we want to ship a product with a shorter time-to-market.

Auto-scaling combined with usage-based pricing works out fairly well in terms of infrastructure cost for serverless.

Although serverless architecture does not let you tune performance in terms of host size or host count, it offers alternative ways to configure performance requirements, and I expect such configurations to expand in the future.
