I am a big fan of Geoffrey Moore’s books Crossing the Chasm and Inside the Tornado. If you look at the life cycle of cloud computing, it is more or less over the chasm, into the bowling alley, and heading for the mainstream.

Just type “cloud computing” into Google, and what do you get?

You will find mixed messages, unclear definitions, and little clarity on its business benefits.

Moreover, if you have visited any general trade show to learn about the topic, you might be left with the impression that cloud computing is all about infrastructure.

Well, this is not so. There is much more to it.

I’ve lived through several major disruptions in my 15-year career in the industry, from mainframes to the birth of the IBM PC. Right now, three significant disruptions are happening at the same time: the rise of mobile, the rise of social, and the move to a new computing paradigm, the cloud.

Gartner has predicted that cloud adoption will increase significantly over the next four years, with cloud deployment of software gradually becoming the default.

Cloud app service implementation has become common in organizations of all sizes. Most companies are moving to transform their business operations by adopting the cloud, and today’s CTOs are responsible for migrating applications and systems to it. While cloud computing offers many benefits, such as operational flexibility, reduced capital expenditure, and process control, its implementation is difficult and requires technical proficiency.

Let’s take a look at some of the prominent challenges faced by a CTO in cloud implementation.

How Can Diverse Legacy Systems be Integrated with Existing Cloud Architectures?

Many legacy systems are rigid. They are tightly coupled with their infrastructure and face other constraints, such as the need to design and build APIs, that prevent easy migration to the cloud. In some verticals, such as healthcare, integrating legacy systems into the cloud risks leaving security issues improperly addressed, which ultimately makes the system more complex. Protocols are another barrier for CTOs: existing apps are typically built around file protocols for network-attached storage (such as NFS or SMB), whereas cloud object storage is accessed through different, HTTP-based APIs.
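One common way to soften the protocol mismatch is to put a small storage interface between the application code and the storage backend, so the same code can run against a file share before migration and against an object store after it. The sketch below assumes a hypothetical `BlobStore` interface; `InMemoryObjectStore` is only a stand-in for a real provider SDK, which is not called here.

```python
from abc import ABC, abstractmethod
from pathlib import Path
from typing import Dict


class BlobStore(ABC):
    """Hypothetical storage interface that application code depends on."""

    @abstractmethod
    def put(self, key: str, data: bytes) -> None: ...

    @abstractmethod
    def get(self, key: str) -> bytes: ...


class FileShareStore(BlobStore):
    """Legacy path: keys map to files under a mounted share (e.g. an NFS/SMB mount)."""

    def __init__(self, root: Path) -> None:
        self.root = root

    def put(self, key: str, data: bytes) -> None:
        path = self.root / key
        path.parent.mkdir(parents=True, exist_ok=True)  # file systems need directories
        path.write_bytes(data)

    def get(self, key: str) -> bytes:
        return (self.root / key).read_bytes()


class InMemoryObjectStore(BlobStore):
    """Stand-in for a cloud object store: flat key namespace, whole-object reads/writes.
    A real adapter would call the provider's SDK here (assumption, not shown)."""

    def __init__(self) -> None:
        self._objects: Dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = bytes(data)

    def get(self, key: str) -> bytes:
        return self._objects[key]


def archive_report(store: BlobStore, report_id: str, body: bytes) -> str:
    """Application code: unaware of which backend it is writing to."""
    key = f"reports/{report_id}.txt"
    store.put(key, body)
    return key
```

Swapping a `FileShareStore` for an object-store adapter leaves `archive_report` unchanged, which is the point of the design: the protocol difference is confined to one class per backend.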

What Role does Technical Debt Play in Cloud Implementation Challenges?

Promptly predicting and quantifying technical debt has become a significant issue in recent years. Technical debt is particularly hard to identify in the cloud marketplace, where services are leased. Using cloud services efficiently requires adopting DevOps practices, and most cloud providers support DevOps on their platforms with continuous integration and continuous deployment (CI/CD) tools.

A DevOps implementation is not a clean slate. When an organization moves from traditional operations to DevOps, there are many opportunities for existing practices to creep into the mix. Those practices, along with the workarounds required to accommodate them, all become technical debt. Not all of this debt is embodied in application code, where it can be easily eliminated, and not all of it will travel through the pipeline without causing problems. When an application interacts with databases, users, and operating systems, even in a virtualized environment, technical debt may affect those interactions.

How do Cloud Sprawl and Vendor Lock-in Affect Cloud Strategy?

The problem resembles what happened with virtualization, which saw wide adoption while CTOs struggled to manage the resulting workloads and instances. Likewise, when an organization fails to monitor its cloud instances, the result is cloud sprawl. For example, a public cloud instance created to test a piece of software and then left running unused keeps adding to cloud spending.
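Monitoring for sprawl can start as a simple periodic check over an inventory exported from each provider. The sketch below is a minimal version under stated assumptions: the `Instance` fields are hypothetical, and the thresholds (5% average CPU, 14 days without activity) are illustrative choices, not provider defaults.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional, Tuple


@dataclass
class Instance:
    """One row of a cloud inventory export (hypothetical fields)."""
    instance_id: str
    owner: Optional[str]      # owner tag; None if nobody tagged the instance
    avg_cpu_percent: float    # average CPU over the lookback window
    last_activity: datetime   # last login, deploy, or API call
    hourly_cost: float        # on-demand price in the billing currency


def find_sprawl(
    instances: List[Instance],
    now: datetime,
    idle_cpu_percent: float = 5.0,
    idle_days: int = 14,
) -> List[Tuple[str, List[str], float]]:
    """Flag untagged or idle instances, with an approximate 30-day cost."""
    cutoff = now - timedelta(days=idle_days)
    flagged = []
    for inst in instances:
        reasons = []
        if not inst.owner:
            reasons.append("no owner tag")
        if inst.avg_cpu_percent < idle_cpu_percent and inst.last_activity < cutoff:
            reasons.append(f"idle for more than {idle_days} days")
        if reasons:
            monthly_cost = round(inst.hourly_cost * 24 * 30, 2)
            flagged.append((inst.instance_id, reasons, monthly_cost))
    return flagged
```

Flagging untagged instances alongside idle ones matters because an instance with no owner is exactly the forgotten test machine the paragraph describes: nobody is accountable for shutting it down.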

The growing number of cloud providers also contributes to cloud sprawl. Without cloud governance and policies, departments within an organization may adopt different cloud solutions. For example, software developers may use AWS for compute and storage instances, while a research and development group might use Google Cloud resources for big data projects. Thus, during cloud implementation, CTOs often face data consistency challenges and incompatible APIs because different cloud providers are not yet fully interoperable.

By adopting the wrong cloud provider, companies can get locked into an expensive and painful ordeal. Some cloud providers have strict severance clauses that make it nearly impossible to switch to another provider. Many cloud providers don’t clearly disclose their policies about how they protect and secure data both at rest and in flight.

Which Cloud Delivery Model (Public, Private, or Hybrid) Is Best for Your Organization?

When moving to the cloud, CTOs face an array of questions about cloud cost, the organization’s needs, security, privacy, integration, and scalability, which can make it difficult to map out a clear implementation strategy.

A limited understanding of the organization’s actual needs, combined with gaps in every provider’s service offerings, makes it difficult for CTOs to choose between public, private, and hybrid clouds.
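One way to make this choice less ad hoc is a weighted decision matrix: list criteria such as cost, security, and scalability, weight them by the organization’s priorities, and rate each deployment model. The sketch below shows only the mechanics; the criteria, weights, and ratings are invented placeholders, not recommendations for any organization.

```python
from typing import Dict, List, Tuple


def rank_models(
    weights: Dict[str, float],
    scores: Dict[str, Dict[str, float]],
) -> List[Tuple[str, float]]:
    """Rank deployment models by a weighted average of per-criterion ratings.

    weights: criterion -> relative importance (normalized here)
    scores:  model -> {criterion -> rating, e.g. on a 1-5 scale}
    """
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    ranked = []
    for model, ratings in scores.items():
        missing = set(weights) - set(ratings)
        if missing:
            raise ValueError(f"{model} has no rating for {sorted(missing)}")
        weighted = sum(weights[c] * ratings[c] for c in weights) / total_weight
        ranked.append((model, round(weighted, 2)))
    # Highest weighted score first.
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


# Placeholder inputs: these weights and ratings are invented for illustration.
weights = {"cost": 3, "security": 5, "scalability": 2}
scores = {
    "public": {"cost": 5, "security": 3, "scalability": 5},
    "private": {"cost": 2, "security": 5, "scalability": 2},
    "hybrid": {"cost": 3, "security": 4, "scalability": 4},
}
print(rank_models(weights, scores))
# [('public', 4.0), ('hybrid', 3.7), ('private', 3.5)]
```

The useful output is less the winner than the conversation it forces: stakeholders have to agree on the weights before the ratings mean anything.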

Even after an organization adopts the best-suited cloud model, the lack of mature, open computing standards for data interoperability creates hurdles in cloud adoption. Each technology carries the risk of provider lock-in, which makes it hard to move data into and out of the cloud. Because lock-in obstructs interoperability and portability, it is not straightforward for organizations to take advantage of the many proven benefits of cloud computing.

How does a Shortage of Cloud Skills Impact Cloud Adoption?

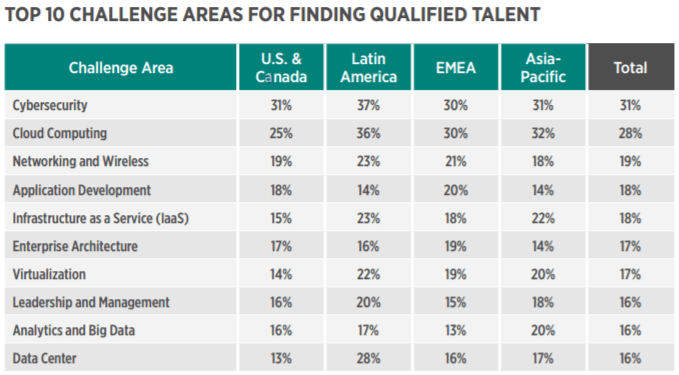

A shortage of employees with cloud computing skills is one of the biggest challenges CTOs face in cloud implementation. Sixty percent of engineering firms acknowledged that a lack of security skills is slowing down their cloud adoption plans. The following is an analysis of how a shortage of security skills is affecting cloud adoption in organizations.

According to Global Knowledge’s 2017 IT skills report, cloud computing was the second most challenging hiring area, selected by 28% of respondents.

What Challenges Arise from Lacking Integrated Cross-platform Management in Cloud Environments?

In most organizations, enterprise IT systems comprise multiple databases built on different technologies and services. In an on-premises setup, it is relatively easy to integrate the components of these databases because they sit on the same infrastructure. In enterprise deployments that span multiple cloud platforms, CTOs find it difficult to manage these components in an integrated way, and doing so may require rigorous due diligence.

Why is the Absence of Orchestration Tools a Hurdle in Cloud Implementation?

Cloud infrastructure requires orchestration of multiple components to achieve true elasticity. Orchestration refers to consolidating a process or workflow by arranging and coordinating automated tasks. Currently, very few all-encompassing orchestration tools exist. Proprietary orchestration tools offer high-touch support and custom coding but lead to lock-in, and they fail to fully automate all hybrid cloud components. Open source tools, meanwhile, are evolving rapidly.
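At its core, arranging and coordinating automated tasks means running them in an order that respects their dependencies, which is a topological sort. The minimal sketch below uses Python’s standard `graphlib` module (Python 3.9+); the task names are hypothetical, and a production orchestrator would add retries, rollback, and parallel execution.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Set


def run_workflow(
    tasks: Dict[str, Callable[[], None]],
    depends_on: Dict[str, Set[str]],
) -> List[str]:
    """Run automated tasks in an order that respects their dependencies.

    Raises graphlib.CycleError if the dependencies contain a cycle.
    """
    unknown = {d for deps in depends_on.values() for d in deps} - set(tasks)
    if unknown:
        raise KeyError(f"unknown dependencies: {sorted(unknown)}")
    sorter = TopologicalSorter({name: depends_on.get(name, set()) for name in tasks})
    sorter.prepare()  # detects cycles before anything runs
    completed = []
    while sorter.is_active():
        # Every task returned here has all of its prerequisites done,
        # so a real orchestrator could run this batch in parallel.
        for name in sorter.get_ready():
            tasks[name]()
            completed.append(name)
            sorter.done(name)
    return completed
```

For example, with hypothetical tasks `network`, `vm`, `load_balancer`, and `app`, where the VM needs the network and both the load balancer and the app need the VM, the network is always provisioned first and the VM second.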

KPMG’s report “Journey to the Cloud” notes that the market for orchestration solutions is immature and that no single orchestration product is a silver bullet, so it recommends developing a consumption platform instead. A consumption platform is a holistic set of capabilities for multi-modal service consumption, independent of deployment model.

What is the Importance of Cloud Governance in Successful Cloud Adoption?

CTOs face specific security challenges when moving to the cloud, but one key challenge that is not always addressed is governance. Governance can be managed effectively by considering five issues around cloud computing: privacy, transparency, trans-border information flow, compliance, and certification.

For privacy, providers must prove to customers that privacy controls are in place and demonstrate the ability to prevent, detect, and react to security breaches in a timely manner. For transparency, providers must assure customers that their information is properly secured against unauthorized access, modification, and destruction. Trans-border information flow must be addressed because, when information can be stored anywhere in the cloud, its physical location can become an issue. Compliance is another issue in cloud governance, because data may not be stored where the organization expects in the first place and may not be easily retrievable. Certification is the last issue: cloud providers have to assure customers that they are doing the “right” things.
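Trans-border information flow is one of the few governance issues that can be checked automatically, provided the cloud provider reports where each dataset is stored. The sketch below is a minimal version; the classification labels, region names, and policy are hypothetical placeholders for whatever an organization’s own rules require.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class Dataset:
    name: str
    classification: str  # hypothetical labels, e.g. "personal", "public"
    region: str          # storage location as reported by the provider


def residency_violations(
    datasets: List[Dataset],
    allowed_regions: Dict[str, Set[str]],
) -> List[str]:
    """Return human-readable violations of a data-residency policy.

    Data with an unknown classification is reported rather than silently allowed.
    """
    problems = []
    for ds in datasets:
        allowed = allowed_regions.get(ds.classification)
        if allowed is None:
            problems.append(f"{ds.name}: no policy for classification '{ds.classification}'")
        elif ds.region not in allowed:
            problems.append(f"{ds.name}: stored in {ds.region}, allowed {sorted(allowed)}")
    return problems
```

Treating an unclassified dataset as a violation is a deliberate choice: in a governance check, a silent default of “allowed” is how data ends up in the wrong jurisdiction.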

How do Concerns Over Business Continuity and Disaster Recovery Influence Cloud Strategies?

Business Continuity:

A typical business continuity plan requires the efforts of a two- or three-person team that understands how the business works, its IT infrastructure, and its management. It takes time to prepare the necessary documents and to secure buy-in and funding from stakeholders and executives.

The challenge for CTOs is that today’s cloud-based technologies cannot help with the planning and preparation part of business continuity. Cloud-based technology helps mainly with implementation and execution, in particular with meeting the recovery time objective (RTO), the target time for returning to operation.

Disaster Recovery:

Many CTOs use disaster recovery (DR), a backup-and-restore strategy, as a security measure. However, according to Rachel Dines, a DR analyst at Cambridge, cloud DR vendors do not have complete system redundancy. Suppliers also cannot justify the cost of building data centers that replicate every customer’s infrastructure setup.

If a major man-made or natural disaster occurred, the DR site could not run every disaster recovery as a service (DRaaS) user’s applications at once. Because the affected IT organizations would only find this out when the disaster occurred, DRaaS carries a greater degree of risk than traditional DR builds.

Cloud-based DR also increases enterprise network bandwidth needs. DRaaS stores copies of applications and virtual machine (VM) images in the provider’s cloud. Because these apps and VM images are continuously updated, data constantly flows from the enterprise production site to the DRaaS supplier’s data center, and this load may strain available bandwidth. DRaaS works well with simple apps but may reduce network performance with process-intensive systems, such as customer relationship management (CRM) and enterprise resource planning (ERP) applications.
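The bandwidth concern can be sized with simple arithmetic: the data changed per day, converted to megabits, divided by the time available to ship it. The sketch below assumes decimal units (1 GB = 8,000 megabits) and ignores compression and deduplication, which would lower the figure; the `overhead` multiplier is an optional assumption for protocol overhead.

```python
def replication_mbps(changed_gb_per_day: float, window_hours: float, overhead: float = 1.0) -> float:
    """Sustained bandwidth (megabits/s) needed to ship one day's changes within a window.

    Uses decimal units (1 GB = 8,000 megabits); compression and
    deduplication are ignored, so this is an upper-bound style estimate.
    """
    if window_hours <= 0:
        raise ValueError("window_hours must be positive")
    megabits = changed_gb_per_day * 8_000 * overhead
    return megabits / (window_hours * 3_600)


def link_share(required_mbps: float, link_mbps: float) -> float:
    """Fraction of the WAN link that replication would consume."""
    return required_mbps / link_mbps
```

With a hypothetical 200 GB of changes per day, continuous replication over 24 hours needs about 18.5 Mbps, but squeezing the same data into an 8-hour overnight window needs about 55.6 Mbps, more than half of a 100 Mbps link. That is how a busy CRM or ERP system can crowd out other traffic.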

What does Service Enablement Entail in the Context of Cloud Computing?

CTOs find service enablement a big challenge in cloud implementation because the infrastructure domain lacks standardization.

Today, many components expose a control-plane API or SDK and have standardized on XML and web services. However, the depth and breadth of these access methods vary widely and often require skills not commonly found in IT operations today. These APIs and SDKs are often very granular and specific to the infrastructure component technology, so common operational tasks may require multiple API calls, with each infrastructure component requiring a different set of calls with its own unique terminology.
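A common response to this granularity is a thin service layer (a facade) that exposes one operation in the organization’s own vocabulary and translates it into each component’s calls. In the sketch below, `StorageArrayClient` and `HypervisorClient` are hypothetical stand-ins for vendor SDKs; their method names are invented for illustration, and they record calls instead of contacting real devices.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class StorageArrayClient:
    """Hypothetical storage-array SDK (speaks in 'LUNs')."""
    calls: List[Tuple] = field(default_factory=list)

    def create_lun(self, lun_name: str, size_gb: int) -> str:
        self.calls.append(("create_lun", lun_name, size_gb))
        return f"lun-{lun_name}"

    def map_lun(self, lun_id: str, initiator: str) -> None:
        self.calls.append(("map_lun", lun_id, initiator))


@dataclass
class HypervisorClient:
    """Hypothetical hypervisor SDK (speaks in 'datastores')."""
    calls: List[Tuple] = field(default_factory=list)

    def rescan_adapters(self, host: str) -> None:
        self.calls.append(("rescan_adapters", host))

    def create_datastore(self, host: str, device_id: str, label: str) -> str:
        self.calls.append(("create_datastore", host, device_id, label))
        return f"ds-{label}"


class StorageService:
    """Facade: one task ('give this host a volume') instead of four
    component-specific calls with different terminology."""

    def __init__(self, array: StorageArrayClient, hypervisor: HypervisorClient) -> None:
        self.array = array
        self.hypervisor = hypervisor

    def provision_volume(self, host: str, initiator: str, name: str, size_gb: int) -> str:
        lun_id = self.array.create_lun(name, size_gb)
        self.array.map_lun(lun_id, initiator)
        self.hypervisor.rescan_adapters(host)
        return self.hypervisor.create_datastore(host, lun_id, name)
```

Operations staff then call `provision_volume` without learning either SDK, and only the facade changes when a component is replaced.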

How does Intercloud Architecture Facilitate Multi-cloud Strategies?

For some organizations, the end goal is a hybrid cloud architecture, one in which public cloud resources are integrated into data center management and infrastructure systems to enable cost reduction, elasticity, and flexibility. Multi-cloud setups are realized through internal clouds and interactions between public and private clouds, which requires knowledge of cloud architecture. Beyond that, security, trust, and legal compliance issues still hinder wider uptake.

What are the Implications of Transitioning from Static to Dynamic Network Architectures in the Cloud?

Virtualization is an essential part of cloud infrastructure. In server infrastructure, virtualization was introduced to meet the challenges of elasticity and portability. In the cloud, the focus now shifts toward virtualizing the network, a transition that brings many challenges unlike those experienced during the server virtualization phase.

The most serious of these challenges is the impact of moving from primarily static to dynamic network architectures. Even though the infrastructure changes from static (physical) to dynamic (virtual), it still requires the same functional components: the overall network architecture must provide firewall, load-balancing, and security services.

The solution to this challenge lies in existing approaches for managing dynamism in server infrastructure. CIOs can overcome it by adding an application delivery tier, an abstraction layer that provides strategic control over virtualized application services. This tier makes it possible to manage virtualized network services while benefiting from better resource utilization and increased elasticity.
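A minimal sketch of the idea, assuming the delivery tier is a stable entry point that clients always address while virtual instances join and leave the pool behind it. Round-robin selection is used only for simplicity; real application delivery controllers also handle health checks, TLS termination, and security policies. All names here are hypothetical.

```python
from typing import List


class DeliveryTier:
    """Stable entry point in front of a changing pool of virtual instances."""

    def __init__(self) -> None:
        self._pool: List[str] = []
        self._next = 0

    def register(self, endpoint: str) -> None:
        """Called when a virtual instance comes online (e.g. on scale-out)."""
        if endpoint not in self._pool:
            self._pool.append(endpoint)

    def deregister(self, endpoint: str) -> None:
        """Called when an instance is removed; clients are unaffected."""
        if endpoint in self._pool:
            self._pool.remove(endpoint)

    def route(self) -> str:
        """Pick the next instance in round-robin order."""
        if not self._pool:
            raise RuntimeError("no instances available")
        index = self._next % len(self._pool)  # stays valid as the pool shrinks
        self._next = index + 1
        return self._pool[index]
```

Because clients only ever talk to the tier, instances can be added or removed as load changes without touching client configuration, which is the static-to-dynamic shift the section describes.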

How do Data Loss and Privacy Risks Pose Challenges in Cloud Implementation?

Data loss can result from an inefficient migration of data from a legacy system to cloud infrastructure. Data can also be permanently lost through malicious attacks or through deletion by a cloud service provider, whether from negligence or disaster. If data is encrypted and the encryption key is lost, the data is lost as well.
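Loss during migration is usually caught by reconciliation: build a manifest of checksums on the legacy side, build another from what landed in the cloud, and compare the two. The sketch below assumes each record can be read as a key plus its raw bytes; a real migration would stream large objects rather than hold them in memory.

```python
import hashlib
from typing import Dict, Iterable, List, Tuple


def build_manifest(records: Iterable[Tuple[str, bytes]]) -> Dict[str, str]:
    """Map each record key to the SHA-256 digest of its contents."""
    return {key: hashlib.sha256(data).hexdigest() for key, data in records}


def compare_manifests(source: Dict[str, str], target: Dict[str, str]) -> Dict[str, List[str]]:
    """Report keys that were dropped, altered, or unexpectedly added during migration."""
    return {
        "missing": sorted(k for k in source if k not in target),
        "corrupted": sorted(k for k in source if k in target and source[k] != target[k]),
        "unexpected": sorted(k for k in target if k not in source),
    }
```

Comparing digests rather than raw data lets each side compute its manifest independently, so only the small manifests need to cross the network.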

Security breaches are increasing in frequency and growing more sophisticated. Even organizations that use strong encryption are still being breached, suffering financial damage and acute reputational loss. Many cloud service providers do not properly handle data encryption and key management. Moreover, most cloud providers keep their encryption and decryption keys on the server, making it easy for snoopers to sniff out confidential information. Most businesses that hold confidential data, including customer and employee information, fail to stay compliant because of weak government regulation and compliance enforcement.

In recent years, the bring your own device (BYOD) trend has become popular with businesses because it allows employees to access data remotely from any location at any time. The devices most used for BYOD are laptops, smartphones, and tablets, which are more vulnerable to attack than desktop computers.

Concluding Thoughts

It is important to give serious consideration to these challenges and the possible ways around them before adopting cloud technology. Organizations should take an active role in adhering to industry standards to promote the growth of cloud computing. By understanding the challenges above, CTOs can chart a less perilous path toward successful cloud migration and implementation.