When Google’s Web Server team introduced a unit testing culture, they decided that no code change should be accepted in code review without accompanying unit tests. This made an incredible difference, and the team reaped numerous benefits from the practice. It increased their development speed and test coverage, and it significantly reduced the number of defects, production rollbacks, and emergency releases.

Overall, unit testing disciplined the entire team, and the tests served as concrete documentation for new members joining the team. No wonder many organizations adopt unit testing to prevent buggy code and enhance software quality.

However, organizations may fail to get the desired results from unit testing if it is not done right. This blog introduces some of the best ways to integrate unit testing into your development and testing cycle so that you can reap the most benefits from this essential testing strategy.

What is Unit Testing?

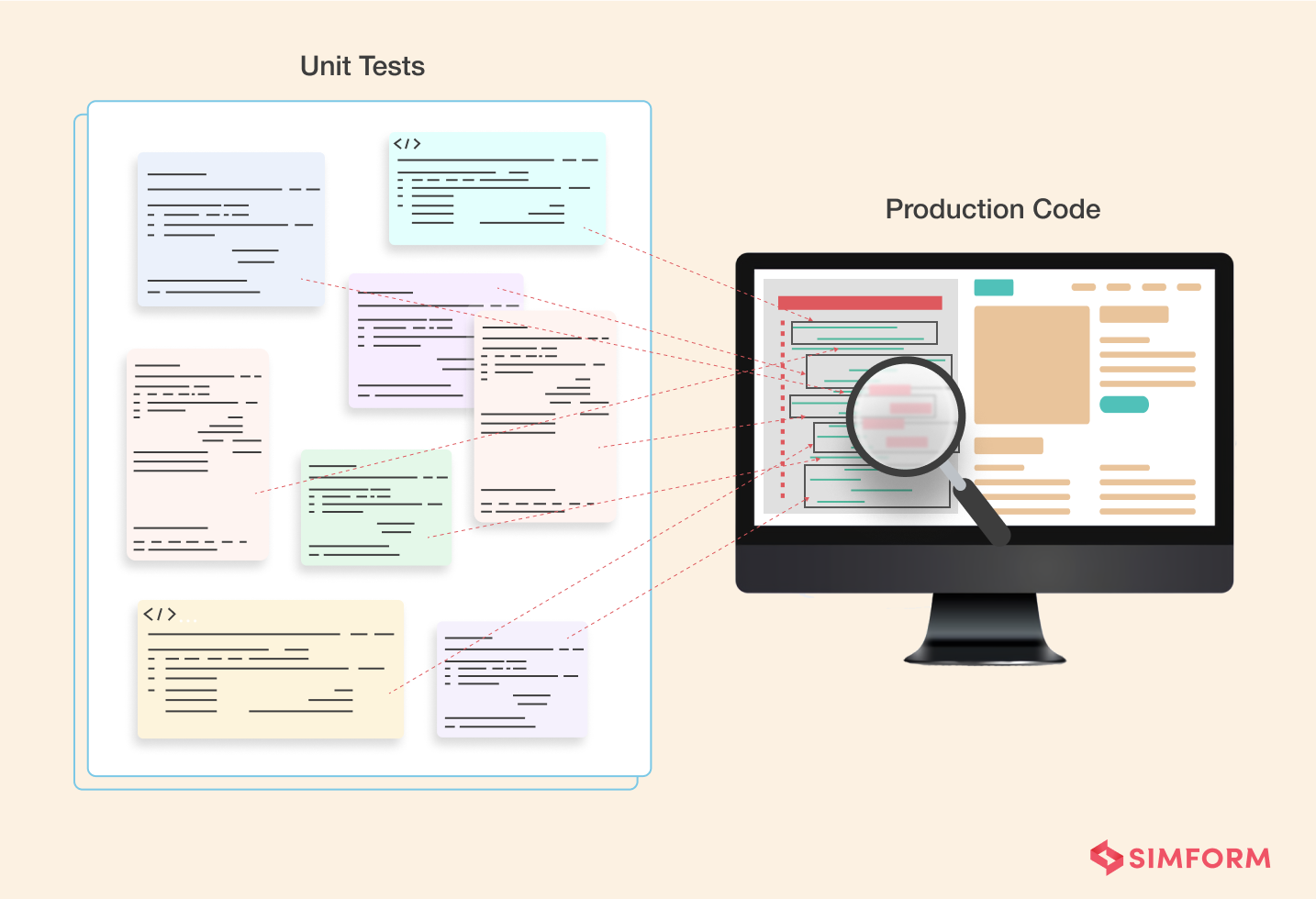

Unit testing is a type of testing in which you divide the code into the smallest testable units that can be logically isolated from the rest of the program. Each unit is then tested individually to check whether it performs as expected. Small units make tests easier to design, execute, record, and analyze than larger chunks of code do. They also let you locate errors quickly and fix them early in the development cycle. Unit tests are a type of functional test and are typically written and run by software developers.

There is no fixed definition of what constitutes a “unit”. It depends on the situation, and the team or developer decides. For instance, in object-oriented programming, a whole class or interface is often treated as a unit, whereas in procedural programming, an individual function might be. The ultimate purpose of unit testing in software engineering is to compare the actual behavior of software components with their expected behavior.

However, unit testing can be costly, because building and maintaining the tests requires considerable training, experience, and effort. It may also not suit projects of every size. For instance, in a project with a two-month timeline, it is impractical to spend a month getting test automation ready.

But fret not! Done right, unit testing as part of daily development can prove valuable for your organization. But first, understand the role of unit testing in your SDLC. Then compare your unit tests against the characteristics below to check whether they are ideal.
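To make the idea of a unit concrete, here is a minimal sketch in Python using the standard `unittest` module. In this example, a whole class is treated as the unit under test. The `ShoppingCart` class and its methods are hypothetical and exist only for illustration.

```python
import unittest


class ShoppingCart:
    """Hypothetical class used as the unit under test."""

    def __init__(self):
        self._prices = []

    def add_item(self, price: float) -> None:
        if price < 0:
            raise ValueError("price must be non-negative")
        self._prices.append(price)

    def total(self) -> float:
        return sum(self._prices)


class ShoppingCartTests(unittest.TestCase):
    # Each test exercises the class in isolation: no database, network, or file system.
    def test_new_cart_has_zero_total(self):
        self.assertEqual(ShoppingCart().total(), 0)

    def test_total_sums_added_item_prices(self):
        cart = ShoppingCart()
        cart.add_item(2.5)
        cart.add_item(4.0)
        self.assertEqual(cart.total(), 6.5)

    def test_negative_price_is_rejected(self):
        with self.assertRaises(ValueError):
            ShoppingCart().add_item(-1)
```

In procedural code, the unit would more likely be a single function that the tests call directly.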

Role of Unit Testing

- Provides early, rapid, and continuous feedback in the SDLC.

- Offers precise feedback, since each test focuses narrowly on a single unit.

- Helps ensure quality standards are met before the product is deployed.

- Lets software engineers verify how units work in complete isolation. Developers use test doubles such as stubs and mock objects to replace the missing parts of a test module.

- Enables continuous testing of app modules without the hassle of dealing with external services or dependencies.

- Overall, gives developers a reliable engineering environment where efficiency, productivity, and code quality are paramount.
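The sketch below shows test doubles in Python. It uses `unittest.mock.Mock` from the standard library in place of an external dependency. The weather client and the `should_take_umbrella` function are hypothetical. The point is that the unit runs without calling any real external service.

```python
import unittest
from unittest.mock import Mock


def should_take_umbrella(weather_client, city: str) -> bool:
    """Hypothetical unit that depends on an external weather client."""
    forecast = weather_client.get_forecast(city)
    return forecast["rain_probability"] >= 0.5


class UmbrellaTests(unittest.TestCase):
    def setUp(self):
        # A fresh Mock per test stands in for the real (hypothetical) weather API client.
        self.client = Mock()

    def test_recommends_umbrella_when_rain_is_likely(self):
        # Used as a stub: it returns canned data.
        self.client.get_forecast.return_value = {"rain_probability": 0.8}
        self.assertTrue(should_take_umbrella(self.client, "Berlin"))

    def test_no_umbrella_when_rain_is_unlikely(self):
        self.client.get_forecast.return_value = {"rain_probability": 0.1}
        self.assertFalse(should_take_umbrella(self.client, "Berlin"))

    def test_queries_forecast_for_requested_city(self):
        # Used as a mock: verify how the unit called its dependency.
        self.client.get_forecast.return_value = {"rain_probability": 0.0}
        should_take_umbrella(self.client, "Berlin")
        self.client.get_forecast.assert_called_once_with("Berlin")
```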

Pro tip: Unit testing doesn’t check whether different units work well with each other or with their dependencies. Combine it with the right mix of other tests, such as integration tests, to ensure the software works correctly as a whole.

Characteristics of a Good Unit Test

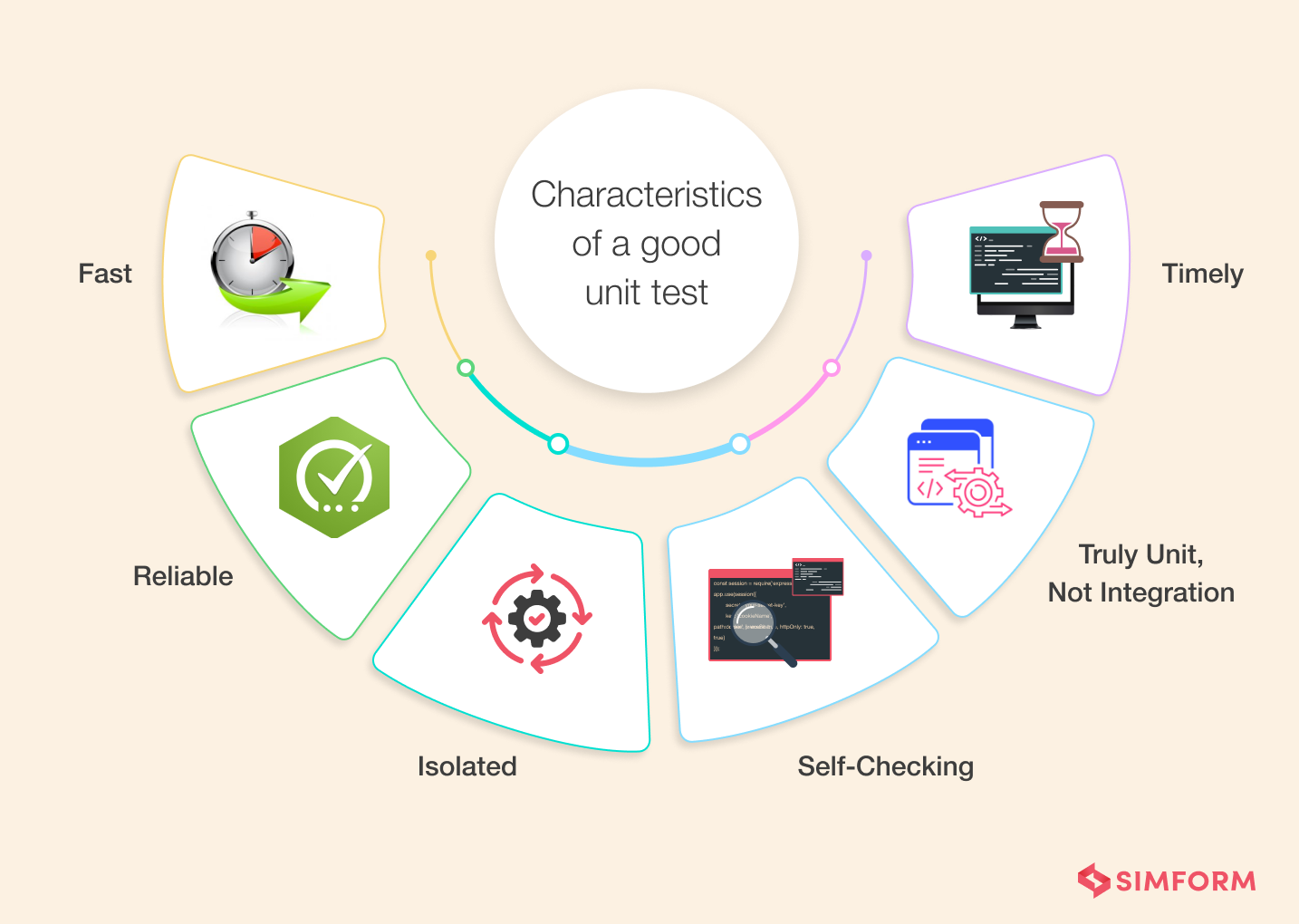

Before we look into unit testing best practices, it is essential to understand what characterizes a good unit test. The following are the properties of a good unit test:

- Fast: Unit tests should run quickly, since a project can have thousands of them. Slow unit tests frustrate developers and take a long time to execute. And because unit tests are run repeatedly, developers may skip running slow ones and deliver buggy code.

- Reliable: A reliable unit test fails only when there is a bug in the code under test. However, tests sometimes fail even when the source code has no bugs, because of a flaw in the test’s design. For instance, a test may pass when run on its own but fail when the whole test class runs or when it runs on the CI server. Unit tests should also be reusable and help avoid repetitive work.

- Isolated: Unit tests run in isolation. They have no dependencies on other tests, on external resources (file system, database, etc.), or on environmental factors.

- Self-checking: Unit tests should automatically determine whether they passed or failed, without any human intervention.

- Timely: If writing a unit test takes longer than writing the code being tested, consider a more testable design. A good unit test is easy to write and is not tightly coupled to the code’s internals.

- Truly unit, not integration: Unit tests can easily turn into integration tests if they exercise several components together. Unit tests are standalone and should not be influenced by external factors.

Simform, for one, has always maintained standard practices for software testing, including unit testing. Testing guidelines can differ from platform to platform and project to project. Even so, you can always follow a general set of best practices when unit testing a product.


Unit Testing Best Practices

1. Write Readable Tests

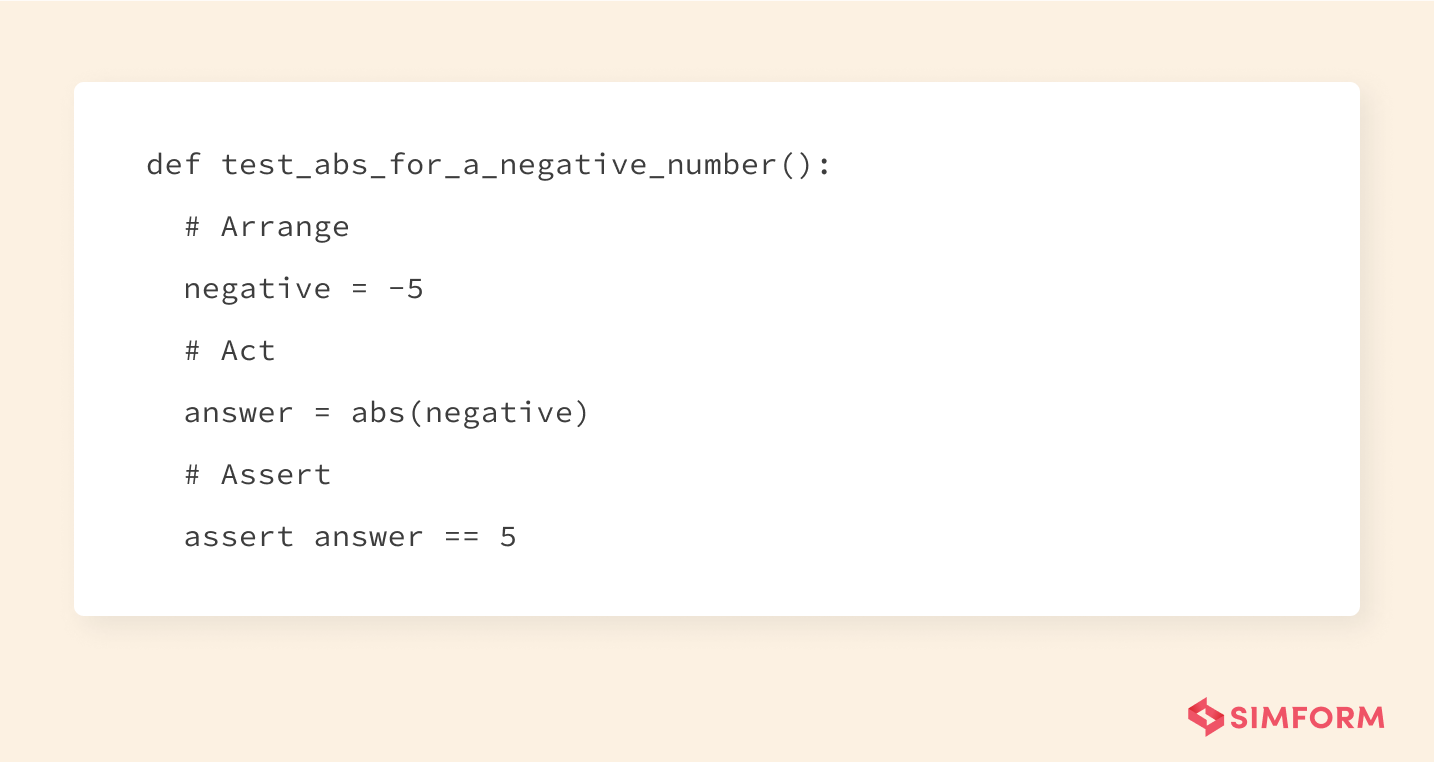

Easy-to-read tests make it easier to understand how your code works, what it is meant to do, and what went wrong when a test fails. When the setup logic is clear at first glance, you can often figure out how to fix a problem without debugging the code. Readability also improves maintainability, since tests must be updated whenever the production code changes. Conversely, hard-to-read tests cause misunderstandings among developers, which leads to more bugs. Following is an example of a readable unit test for Python’s absolute value function:

Unit tests also serve as natural documentation, since they describe and validate the behavior of the code under test. When you write clear and readable tests, you do a favor for your future self and also for developers who are new to the team or not even hired yet. Such tests quickly familiarize them with the code and the system as a whole, so they don’t have to ask anyone else.

Here are some effective ways to write tests that are easy, even enjoyable, to read:

- Firstly, use a sound naming convention for every test case. Name each test so that it immediately describes the subject, the scenario being tested, and the expected result.

- Secondly, use the Arrange, Act, Assert (AAA) pattern to clearly separate the test phases and enhance readability.

- Lastly, avoid using magic numbers or strings in test cases, which brings us to our next tip.
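Here is a minimal sketch of such a test. It uses Python’s built-in `abs()` and the standard `unittest` module. The test names are illustrative, and each one states the scenario and the expected result.

```python
import unittest


class AbsTests(unittest.TestCase):
    # Each name reads as a sentence: subject, scenario, expected result.
    def test_abs_of_positive_number_returns_same_number(self):
        self.assertEqual(abs(5), 5)

    def test_abs_of_negative_number_returns_positive_number(self):
        self.assertEqual(abs(-5), 5)

    def test_abs_of_zero_returns_zero(self):
        self.assertEqual(abs(0), 0)
```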
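The naming convention and the Arrange, Act, Assert pattern can be sketched as follows. The `apply_discount` function is hypothetical. The `test_<subject>_<scenario>_<expected result>` naming scheme is one common convention, not a `unittest` requirement; `unittest` only requires that test method names start with `test`.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: reduce a price by a percentage."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price * (100 - percent) / 100


class ApplyDiscountTests(unittest.TestCase):
    # Name pattern: test_<subject>_<scenario>_<expected result>
    def test_apply_discount_with_valid_percent_returns_reduced_price(self):
        # Arrange: set up the inputs.
        original_price = 200.0
        discount_percent = 25

        # Act: call the unit under test once.
        discounted = apply_discount(original_price, discount_percent)

        # Assert: check the single expected outcome.
        self.assertEqual(discounted, 150.0)

    def test_apply_discount_with_percent_over_100_raises_value_error(self):
        # Arrange
        any_price = 100.0
        invalid_percent = 150

        # Act and Assert: the call itself is the behavior being checked.
        with self.assertRaises(ValueError):
            apply_discount(any_price, invalid_percent)
```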

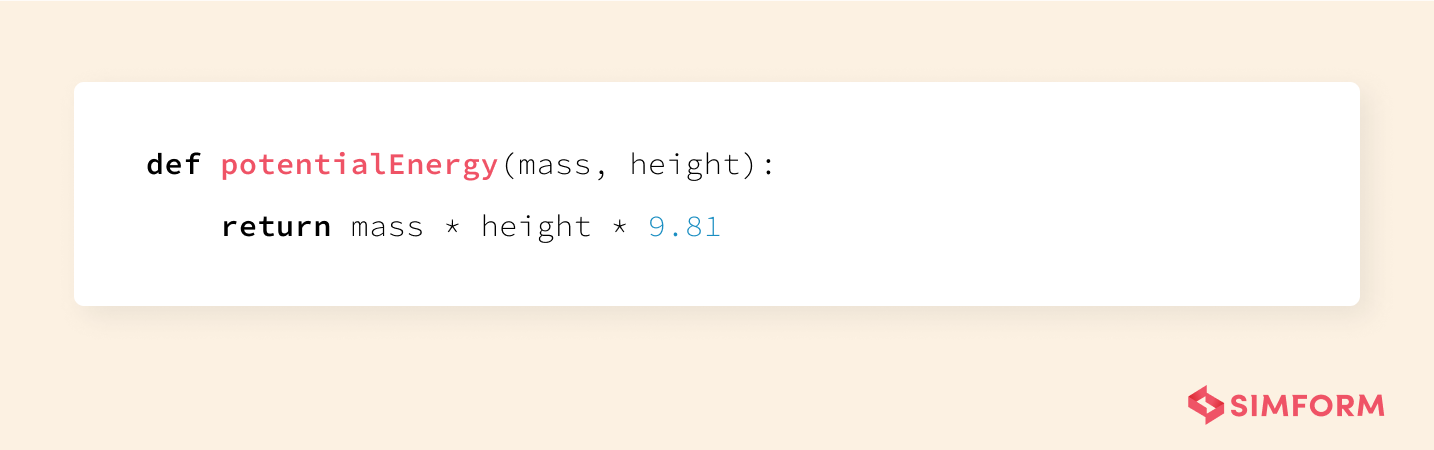

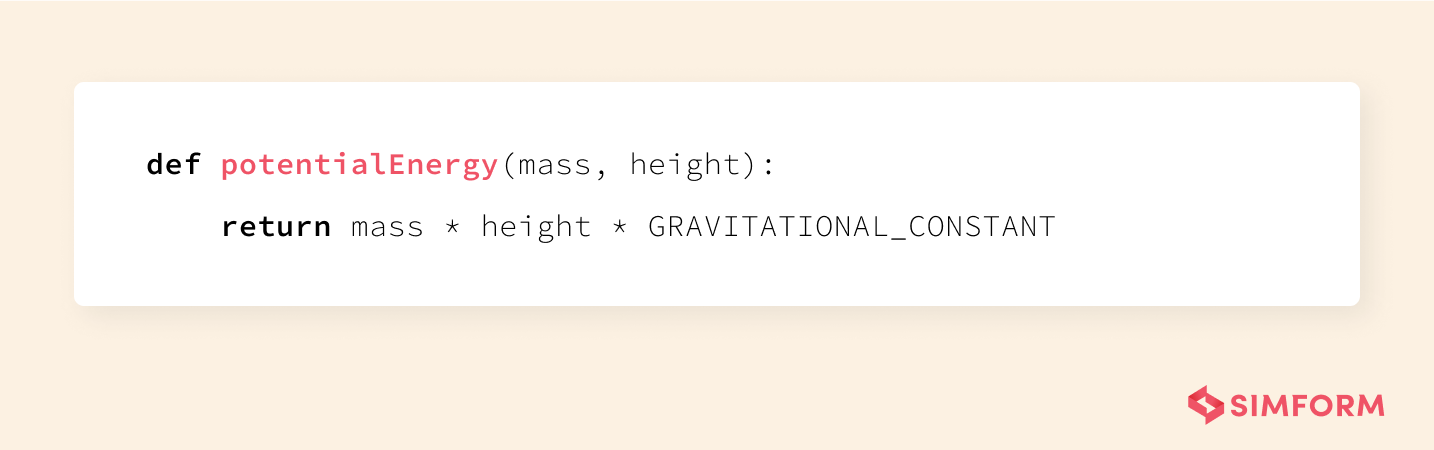

2. Avoid magic numbers and magic strings

Magic strings and numbers make tests less readable. They distract readers, who end up wondering why a particular value was chosen instead of focusing on what the test actually checks. Here is an example code snippet with a magic number:

Named constants also make maintenance easier. If a value needs to change, you change it in one place, and every use picks up the update. So it is better to assign values through well-named variables or constants, which lets your tests express as much intent as possible. Now, let’s replace the magic number with a constant whose readable name explains what the number means.
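Here is a minimal sketch that assumes a hypothetical `can_withdraw` function with a daily limit of 500. Nothing in the test explains why 501 was chosen.

```python
import unittest


def can_withdraw(amount: int) -> bool:
    """Hypothetical rule: withdrawals above a daily limit of 500 are refused."""
    return amount <= 500


class WithdrawalTests(unittest.TestCase):
    def test_withdrawal_over_limit_is_refused(self):
        # Magic number: why 501? The reader has to go and look up the limit.
        self.assertFalse(can_withdraw(501))
```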
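The same hypothetical withdrawal check is shown below with a named constant. `DAILY_WITHDRAWAL_LIMIT` is an illustrative name, not taken from any real codebase.

```python
import unittest

DAILY_WITHDRAWAL_LIMIT = 500  # hypothetical business rule


def can_withdraw(amount: int) -> bool:
    """Hypothetical rule: withdrawals above the daily limit are refused."""
    return amount <= DAILY_WITHDRAWAL_LIMIT


class WithdrawalTests(unittest.TestCase):
    def test_withdrawal_over_limit_is_refused(self):
        # The variable name states the intent: one unit above the limit.
        amount_over_limit = DAILY_WITHDRAWAL_LIMIT + 1
        self.assertFalse(can_withdraw(amount_over_limit))

    def test_withdrawal_at_limit_is_allowed(self):
        self.assertTrue(can_withdraw(DAILY_WITHDRAWAL_LIMIT))
```

In a real codebase, the test would typically import the constant from the production module rather than redefine it. That way, a change to the limit automatically flows into the tests.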

3. Write Deterministic Tests

Deterministic tests behave the same way every time they run, unless the code changes. They either always pass, or always fail until the bug is fixed. A flaky test, that is, a non-deterministic test that sometimes passes and sometimes fails, is as good as having no test at all.

For instance, suppose you write a unit test for a function calculateInterest() and it passes. It should keep passing until calculateInterest() is changed. And if it fails, it should fail every time, whether it is run ten times or a thousand, until the error in calculateInterest() is fixed. Developers stop trusting a flaky test and treat it as irrelevant, because its result gives no definite indication of a bug in the code.

To avoid non-deterministic tests, ensure that each test is completely isolated and does not depend on other test cases. You can fix flaky tests by controlling external dependencies and environmental values, such as the current time or the machine’s language settings.
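One way to control the current time is to pass the date into the function instead of reading the system clock inside it. The sketch below assumes a hypothetical simple-interest version of calculateInterest(), written `calculate_interest` in Python style. Patching with `unittest.mock.patch` is another option, but injecting the value keeps the example short.

```python
import unittest
from datetime import date
from typing import Optional


def calculate_interest(principal: float, annual_rate: float,
                       start: date, today: Optional[date] = None) -> float:
    """Hypothetical simple interest accrued from `start` up to `today`.

    Calling date.today() unconditionally would make results depend on when
    the tests run; accepting `today` lets tests pin it to a fixed value.
    """
    if today is None:
        today = date.today()
    days_elapsed = (today - start).days
    return principal * annual_rate * days_elapsed / 365


class CalculateInterestTests(unittest.TestCase):
    def test_one_year_at_five_percent_accrues_five_percent_of_principal(self):
        # Fixed dates: the result is identical no matter when the test runs.
        principal = 1000.0
        annual_rate = 0.05
        start = date(2023, 1, 1)
        fixed_today = date(2024, 1, 1)  # 365 days later

        interest = calculate_interest(principal, annual_rate, start, today=fixed_today)

        self.assertAlmostEqual(interest, 50.0)
```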

4. Avoid test interdependencies

Test runners may execute unit tests in any order, or even in parallel. Interdependencies between tests therefore make them unstable and difficult to run and debug. To avoid test interdependencies, give each test case its own setup and teardown.

For example, suppose a test runner has been running a few tests in the same order for a while. Then a new test is added that lacks its own setup and silently relies on state left behind by an earlier test. If the runner later executes the tests in parallel to reduce execution time, that hidden dependency breaks. The whole suite becomes unreliable, and your tests start failing.

So how do you write completely independent tests?

First, do not assume anything based on the order in which you write test cases. Second, if tests are coupled together, isolate the code into small groups or classes that can be tested independently. Otherwise, a change in one unit can affect other units and cause the entire suite to fail.

5. Avoid logic in tests

Writing unit tests with logical conditions and manual string concatenation increases the chances of bugs in your test suite. Tests should focus on the expected end result instead of implementation details. Adding constructs such as if, while, switch, and for can make tests less predictable and harder to read. If including logic in a test seems unavoidable, split the test into two or more separate tests. For instance, take a look at the code below with logic.

Now, refer to the refactored code below without logic. Isn’t it cleaner and easier to read?
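Here is a minimal sketch of a test with logic, assuming a hypothetical `is_even` function. The loop and the `if` re-implement the very rule being tested. A mistake in that logic could hide a bug in the code.

```python
import unittest


def is_even(n: int) -> bool:
    """Hypothetical function under test."""
    return n % 2 == 0


class IsEvenTests(unittest.TestCase):
    def test_is_even(self):
        # Logic in the test: a loop plus a branch that mirrors the implementation.
        for n in [0, 1, 2, 3]:
            if n % 2 == 0:
                self.assertTrue(is_even(n))
            else:
                self.assertFalse(is_even(n))
```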
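The same hypothetical `is_even` checks are rewritten below without logic. Each test states one input and one expected result.

```python
import unittest


def is_even(n: int) -> bool:
    """Hypothetical function under test."""
    return n % 2 == 0


class IsEvenTests(unittest.TestCase):
    # No loops or branches: a failing test name points straight at the broken case.
    def test_is_even_returns_true_for_even_number(self):
        self.assertTrue(is_even(4))

    def test_is_even_returns_false_for_odd_number(self):
        self.assertFalse(is_even(7))

    def test_is_even_returns_true_for_zero(self):
        self.assertTrue(is_even(0))
```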

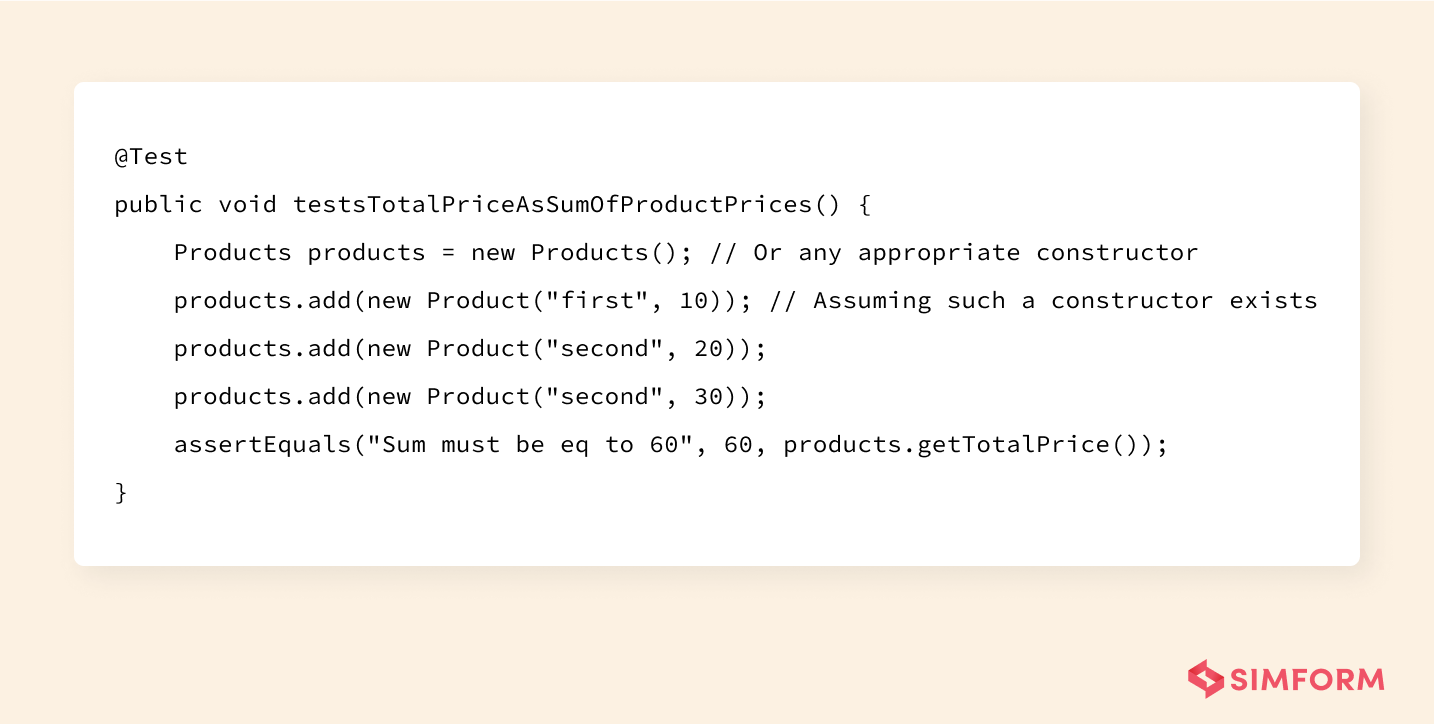

6. Avoid multiple asserts in a single unit test

For a unit test to be effective, have it cover one use case at a time, which means having only one assertion per test. To see what happens when a single test contains multiple asserts, let’s take a simple example.
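Here is a minimal sketch of the problem, assuming a hypothetical `parse_full_name` function. All three checks live in one test. If the first assertion fails, `unittest` stops that test there, and the other two are never evaluated.

```python
import unittest


def parse_full_name(full_name: str) -> dict:
    """Hypothetical unit: split 'First Last' into its parts."""
    first, last = full_name.strip().split(" ", 1)
    return {"first": first, "last": last, "initials": first[0] + last[0]}


class ParseFullNameTests(unittest.TestCase):
    def test_parse_full_name(self):
        result = parse_full_name("Ada Lovelace")
        # Several unrelated checks in one test: the first failure hides the rest.
        self.assertEqual(result["first"], "Ada")
        self.assertEqual(result["last"], "Lovelace")
        self.assertEqual(result["initials"], "AL")
```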

Sometimes ten or more asserts are packed into a single test to cover more features. Then, even if only one assertion fails, you have to go through all of them to find the root cause. Plus, once one assertion fails, the remaining ones never get checked, which leaves you with an unclear picture of why the test failed.

Although writing a separate test for each assertion may seem tedious, it saves time and effort and gives more definitive results in the long run. You can also use parameterized tests, which let you run the same test multiple times with different values.
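Python’s standard `unittest` has no built-in parameterized-test decorator. The closest standard-library equivalent is `subTest()`, which reports each failing case separately and keeps checking the remaining cases. Third-party runners such as pytest offer `@pytest.mark.parametrize` for the same purpose. The sketch below reuses a hypothetical `parse_full_name` function. It splits the checks into one test per behavior and uses `subTest()` for the data-driven case. The loop only iterates over data and has no branching.

```python
import unittest


def parse_full_name(full_name: str) -> dict:
    """Hypothetical unit: split 'First Last' into its parts."""
    first, last = full_name.strip().split(" ", 1)
    return {"first": first, "last": last, "initials": first[0] + last[0]}


class ParseFullNameTests(unittest.TestCase):
    # One behavior per test: a failing name points straight at the broken part.
    def test_parse_full_name_extracts_first_name(self):
        self.assertEqual(parse_full_name("Ada Lovelace")["first"], "Ada")

    def test_parse_full_name_extracts_last_name(self):
        self.assertEqual(parse_full_name("Ada Lovelace")["last"], "Lovelace")

    def test_parse_full_name_builds_initials_for_several_names(self):
        # Same check, different data: each case is reported separately on failure.
        cases = [("Ada Lovelace", "AL"), ("Alan Turing", "AT"), ("Grace Hopper", "GH")]
        for full_name, expected in cases:
            with self.subTest(full_name=full_name):
                self.assertEqual(parse_full_name(full_name)["initials"], expected)
```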

7. Keep implementation details out of your tests

Tests are difficult to maintain if they fail at even the slightest change to the implementation code. Coupling tests to implementation details therefore decreases their value. Your best bet is to keep implementation details at bay, which saves you from rewriting the tests again and again.

Unit tests are more resilient to change when they are not tightly coupled to the production code’s internals. Such tests let developers refactor when needed, while still providing valuable feedback as a safety net.
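Here is a minimal sketch of the difference, using a hypothetical `Stack` class. A test that inspects the private `_items` list breaks if the storage changes. A test that uses only the public API survives such refactoring.

```python
import unittest


class Stack:
    """Hypothetical unit. Its internal list is an implementation detail."""

    def __init__(self):
        self._items = []

    def push(self, item) -> None:
        self._items.append(item)

    def pop(self):
        if not self._items:
            raise IndexError("pop from empty stack")
        return self._items.pop()


class StackTests(unittest.TestCase):
    # Brittle (avoid): this would break if storage became a collections.deque,
    # even though the stack's behavior is unchanged.
    #     self.assertEqual(stack._items, [1, 2])

    # Resilient: check only observable behavior through the public API.
    def test_pop_returns_most_recently_pushed_item(self):
        stack = Stack()
        stack.push(1)
        stack.push(2)
        self.assertEqual(stack.pop(), 2)

    def test_pop_on_empty_stack_raises_index_error(self):
        with self.assertRaises(IndexError):
            Stack().pop()
```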

8. Write tests during development, not after it

Unit tests sit at the base of the testing pyramid and are the earliest tests conducted in the development cycle. Therefore, they work best when they are written and run alongside development, not after it.

Setting up unit tests as early as possible promotes clean code and helps identify bugs early on. Writing tests only at the end of development may leave you with code that is hard to test. In contrast, writing tests in parallel with the production code lets you review the test code and the production code together. This also helps developers understand the code better, and it makes the unit testing process more scalable and sustainable.

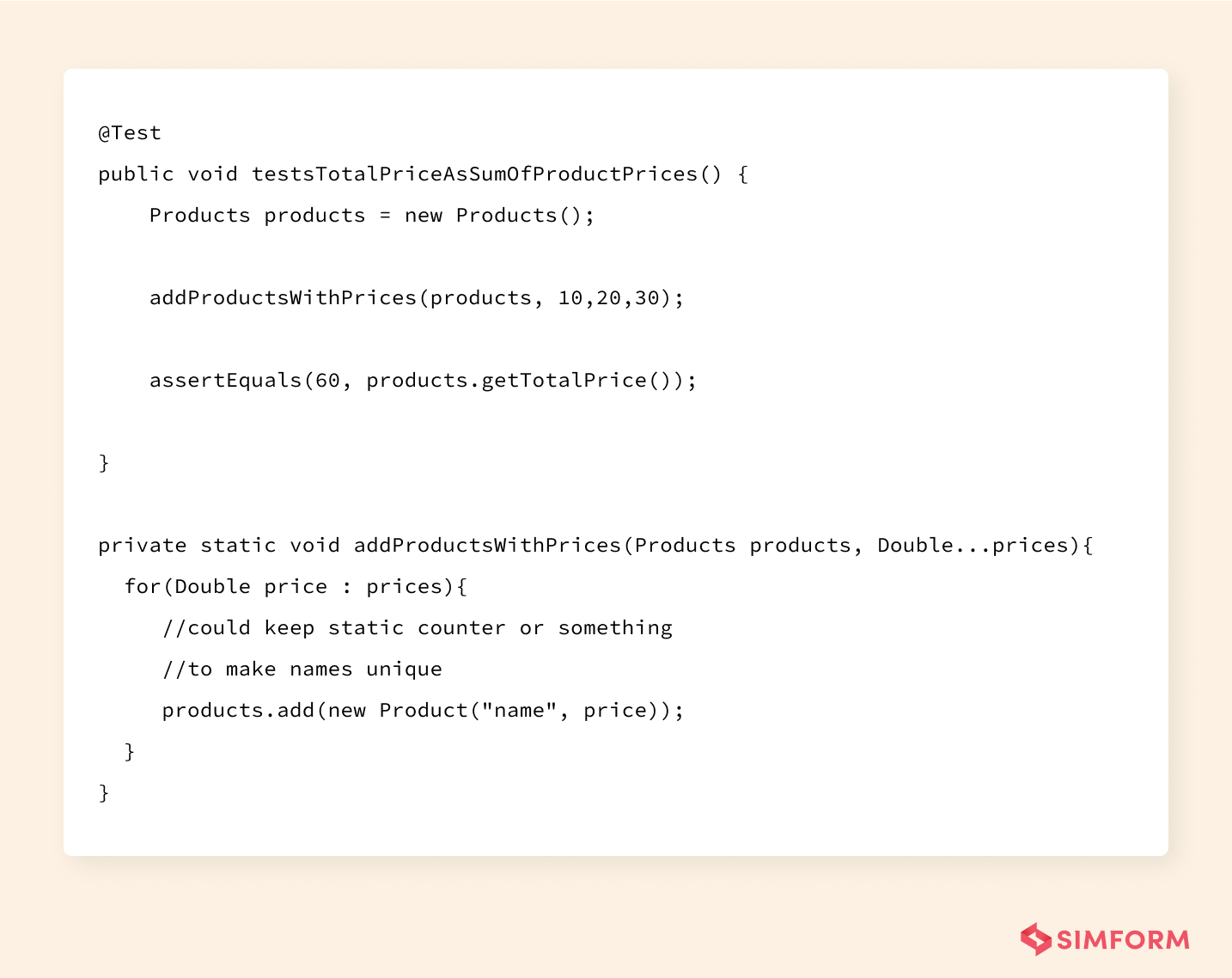

Some organizations prefer writing unit tests before the production code, a practice known as test-driven development (TDD). This practice helps you think through the expected behavior of the code in advance. It raises questions and edge cases before you start writing the code, rather than during development. The TDD approach helps reduce the time spent on rework and debugging, and it instantly tells you whether the last refactoring broke previously working code. Combined with unit testing, it can identify errors and problems quickly. To learn more, see our blog on how to do unit testing in test-driven development (TDD).
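Here is a minimal sketch of one TDD cycle, assuming a hypothetical `slugify` function. The test class is written first. Running it at that point errors with a `NameError`, because `slugify` doesn’t exist yet (red). Then just enough code is written to make it pass (green), after which the code can be refactored safely.

```python
import unittest


# Step 1 (red): write the tests first. Run before slugify() exists,
# they error with a NameError.
class SlugifyTests(unittest.TestCase):
    def test_slugify_lowercases_and_joins_words_with_hyphens(self):
        self.assertEqual(slugify("Unit Testing Best Practices"),
                         "unit-testing-best-practices")

    def test_slugify_collapses_extra_whitespace(self):
        self.assertEqual(slugify("  Hello   World "), "hello-world")


# Step 2 (green): write just enough code to make the tests pass.
def slugify(title: str) -> str:
    """Hypothetical function: turn a title into a URL-friendly slug."""
    return "-".join(title.lower().split())

# Step 3 (refactor): improve the code while keeping the tests green.
```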

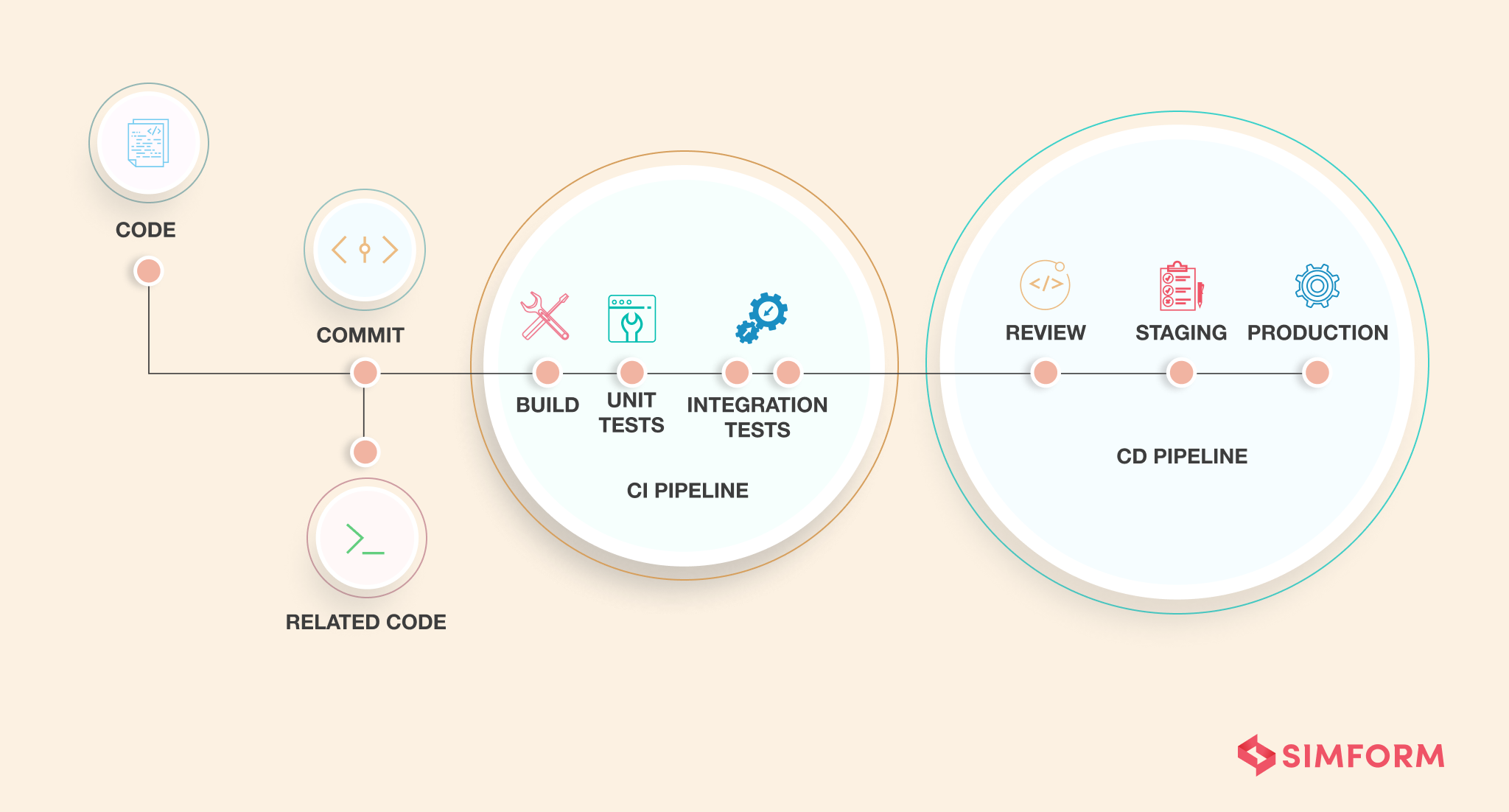

9. Automate tests using CI/CD tools

Automating your tests by including them in a CI/CD pipeline lets you easily run them multiple times a day. It enables continuous testing, with the tests executed on every code commit. Even if you forget to run the tests, the CI (continuous integration) server won’t, which helps keep buggy code from reaching your customers.

In contrast, manual testing cannot run enough tests quickly, conveniently, and accurately. This becomes especially difficult when there are tight deadlines for rolling out software.

Automated tests help detect bugs early, give rapid feedback, and add an extra layer of safety. In addition, CI tools can provide insights into code coverage, modified code coverage, the number of tests running, performance, and more. This enables in-depth analysis and helps developers work efficiently.

10. Update the tests periodically

Unit tests are ideal for long-term projects, since they act as detailed documentation that makes the code and its behavior easier for new team members to understand. Maintaining and updating the tests periodically keeps them useful as documentation. Tests that are allowed to go stale lose this quality and eventually slow down your team’s progress.

What about Code Coverage?

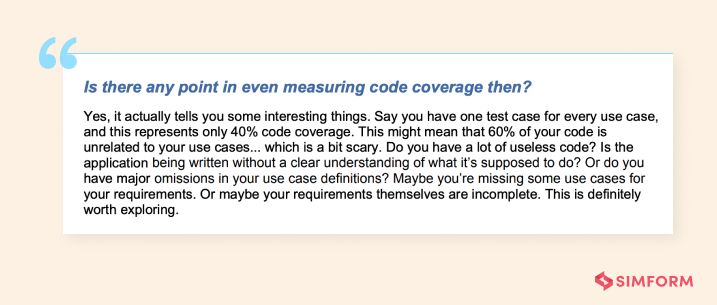

Code coverage measures how much of the code is actually executed by the tests. It helps identify the untested parts of a codebase, so high code coverage is often used as an indicator of higher code quality. Although it is not the sole measure of code quality, it is a useful tool. To state its importance, I would like to quote Adam Kolawa, co-founder and former CEO of Parasoft.

Unit testing can make it easier to get close to 100% code coverage, since it breaks the code down into the smallest reusable, testable components. Automated unit tests can even help cover the entire codebase. Still, 100% code coverage can be difficult to achieve, so be careful not to spend too much effort chasing it.

There is little agreement on what percentage of code coverage to aim for. Since 100% coverage might seem impossible at first, a goal of 60-80% coverage is a good starting point. However, extremely low coverage numbers are a definite sign of weakness.

To increase code coverage, parameterized tests can be an effective approach. You can also add more tests for additional code paths or use cases of the method under test.
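Here is a minimal sketch of adding tests for more code paths. The hypothetical `classify_number` function has three branches. A single test with a positive number would leave the zero and negative branches unexecuted, and covering one case per branch closes that gap. To measure coverage, you can run the tests under a tool such as coverage.py (`coverage run -m unittest`, then `coverage report`).

```python
import unittest


def classify_number(n: int) -> str:
    """Hypothetical unit with three code paths."""
    if n < 0:
        return "negative"
    if n == 0:
        return "zero"
    return "positive"


class ClassifyNumberTests(unittest.TestCase):
    def test_classify_number_covers_every_branch(self):
        # One case per branch; testing only the positive case leaves two branches unexecuted.
        cases = [(-3, "negative"), (0, "zero"), (8, "positive")]
        for n, expected in cases:
            with self.subTest(n=n):
                self.assertEqual(classify_number(n), expected)
```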

The Bottom Line

The best practices above aren’t the only ways to maximize your outcomes, but they will ease your unit testing process, with automation being the key. And there is no better way to unit test than to use the frameworks available in various languages to simplify the process. Only some advanced features for complex requirements need to be hand-coded. Unit testing is focused and unique, and no other type of testing can match its outcome. So embrace it as a continuous learning process and make the practice a habit on your way to mastery.

Let us know in the comments which other unit testing best practices you use in your team or organization. Or drop us a line by email for detailed information on the subject.