Angular is, by default, a powerful and high-performing front-end framework. Yet unexpected challenges are bound to arise when you’re building mission-critical web apps that are content-heavy and architecturally complex.

When a performance crisis hits hard, PMs tend to respond in the same way: they browse their way through a few conference talks and implement every tip they come across. Does this solve all the issues? No, because it approaches the problem from the wrong end. Applying a solution to a problem that you have yet to identify will lead you to a dead end.

Editor’s note: In this article, Hiren will help you systematically identify the bottlenecks and discuss comprehensive Angular performance optimization techniques, and if you’re looking for an agency to implement these optimization techniques, feel free to check out Simform’s custom web application development offerings.

Tips/Methods to Optimize Angular Performance

Angular does a great job of delivering high-performing apps. But if you want to develop a large-scale app that deals with multiple heavy computations, you may start to see performance bottlenecks, and basic quick fixes will not solve them completely.

In this section, we discuss some prominent performance issues in detail, along with a few ways to deal with them. You don’t have to apply every one of these solutions, but knowing the basics can improve your web app’s performance significantly.

1. Leverage Efficient Change Detection Strategies

Change detection is the mechanism Angular uses to keep the view in sync with component data. It is powerful, but its default behavior does not suit every application, so it often needs tuning.

Change detection picks up new entries and updates to component data and automatically re-renders the affected parts of the view.

However, in large-scale, complex applications, change detection can become a bottleneck, because frequent checks occupy the browser’s main thread.

For instance, by default Angular starts at the main app component on every cycle and checks its way down through all the sub-components.

In such cases, we can optimize the process with three explicit change detection strategies: OnPush with immutability, pipes instead of methods, and detaching change detection.

These methods do not alter the purpose of change detection; they limit how much work it does. Let’s take a brief look at them:

OnPush:

OnPush narrows down when change detection runs for a component. With the default strategy, Angular checks the entire component tree on every cycle, from the root component down to the smallest subtree. In a large app this slows things down, resulting in longer waiting times and a bad user experience.

With OnPush, Angular checks a component and its subtree only when it needs to, for example when one of the component’s inputs receives a new reference or an event originates inside it. Subtrees that have no reason to change are skipped. For example, if a page has two component trees, one for entering prime numbers and one for non-prime numbers, a value entered in the prime numbers tree only requires that tree to be checked.

There is no need to check the other component’s tree for an operation that concerns only one of them, which in this case is entering a prime number. So, wherever unaffected sub-component trees can be skipped, OnPush is the best way to use change detection.

Immutability:

As described above, OnPush does a good job of minimizing change detection. But in some cases it needs a bit of help. Let’s see how.

An OnPush component decides whether an input has changed by comparing references instead of inspecting the object deeply. A mutable object does not get a new reference when its values change, so an update made in place is not detected and the view is not re-rendered. An immutable update returns a new reference, which triggers change detection for that component.

Hence, treat the data you pass to OnPush components as immutable: create a new object or array whenever something changes. If you want this enforced by the data types themselves, you can use immutable.js data types, for instance List instead of Array.
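The difference between the two kinds of update can be sketched in plain TypeScript. This is a minimal illustration, not Angular’s internals: `inputChanged` is a hypothetical stand-in for the reference comparison applied to OnPush inputs, and the `User` shape and sample names are assumptions made for the example.

```typescript
// Hypothetical data shape used only for this illustration.
interface User {
  id: number;
  name: string;
}

// Stand-in for the reference check applied to an OnPush input:
// the input counts as "changed" only when the reference itself differs.
function inputChanged<T>(previous: T, current: T): boolean {
  return !Object.is(previous, current);
}

const users: User[] = [{ id: 1, name: "Ada" }];

// Mutable update: the array is changed in place, so the reference is the same
// and the check reports no change even though the content differs.
const mutated = users;
mutated.push({ id: 2, name: "Linus" });
const mutationDetected = inputChanged(users, mutated);

// Immutable update: a new array is created, so the reference differs
// and the check reports a change.
const replaced = [...users, { id: 3, name: "Grace" }];
const replacementDetected = inputChanged(users, replaced);

console.log({ mutationDetected, replacementDetected });
```

In a component, the second form is the one to use when handing data to an OnPush child: assign a new array or object to the bound property instead of pushing into the existing one.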

Detach change detection

For heavy computations, it can be better to skip change detection altogether, since checking a large number of instantiated components adds to the application’s load. When a heavy app has too many events to handle, change detection becomes expensive. Even after applying OnPush, checking and rendering the child elements can remain costly in some applications.

You can reduce this cost by detaching a component’s change detector once its data is set, which shortens the time needed to perform actions. A detached component and its subtree are skipped during change detection until you reattach them or trigger a check on that component manually, so rechecks are limited to the given component only.

Using Pipes instead of methods

Pipes are another effective way to reduce change detection work. There are two types: pure pipes and impure pipes.

A pure pipe avoids recalculating values, including expensive computations: Angular re-runs it only when its input differs from the previous invocation. A function or method called from the template, by contrast, is called on every change detection cycle. Hence, you can reduce the work done per cycle by replacing functions and methods in the template with pure pipes.
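To make the saving concrete, here is a small plain-TypeScript sketch of that behavior. It is a simplified model, not Angular’s implementation: `FibonacciPipe` is a hypothetical class shaped like a pipe (in a real app it would carry the `@Pipe` decorator from `@angular/core`), and `PurePipeBinding` is an assumed stand-in for the caching a pure pipe binding gets from the framework.

```typescript
// Counts how often the expensive work actually runs.
let computeCalls = 0;

// Hypothetical expensive calculation that a template might display.
function fibonacci(n: number): number {
  computeCalls++;
  let a = 0;
  let b = 1;
  for (let i = 0; i < n; i++) {
    [a, b] = [b, a + b];
  }
  return a;
}

// Shaped like an Angular pipe class: it exposes a transform method.
class FibonacciPipe {
  transform(value: number): number {
    return fibonacci(value);
  }
}

// Simplified stand-in for a pure pipe binding: transform is re-run only
// when the input differs from the one used in the previous evaluation.
class PurePipeBinding<I, O> {
  private pipe: { transform(input: I): O };
  private hasRun = false;
  private lastInput!: I;
  private lastOutput!: O;

  constructor(pipe: { transform(input: I): O }) {
    this.pipe = pipe;
  }

  evaluate(input: I): O {
    if (!this.hasRun || !Object.is(input, this.lastInput)) {
      this.lastInput = input;
      this.lastOutput = this.pipe.transform(input);
      this.hasRun = true;
    }
    return this.lastOutput;
  }
}

const binding = new PurePipeBinding(new FibonacciPipe());

// Three simulated change detection passes with the same input, then a new input.
const results = [
  binding.evaluate(10),
  binding.evaluate(10),
  binding.evaluate(10),
  binding.evaluate(11),
];

console.log(results, computeCalls);
```

Calling `fibonacci` directly from a template would run it on all four passes; with the pure-pipe behavior modeled here it runs only when the input changes.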

2. Use Web Workers to Ensure Non-Blocking User Interface

Processes like encrypting data and resizing images keep the main thread busy, which freezes the user interface and annoys users. Web workers move these heavy processes onto a separate background thread, so the main thread stays free and the user interface keeps running smoothly.

Here are some use cases for web workers:

- Complex calculations

- Real-time content formatting

- Progressive web app

- Extensive data update on a database

- Image filtering

3. Minimize Additional Checks by Enabling ProdMode

4. Optimize Events for Faster DOMs

Optimizing event handlers helps avoid unnecessary loading and server requests. Keeping the business logic inside event handlers small results in a faster DOM. A slow DOM delays the processing of click events and so delivers a poor user experience.

In the worst cases, with little or no optimization, components take longer to service click events and depend on other workers to do so. In these cases, change detection does not complete until the handler returns from its task. So, you can optimize events by restructuring the business logic so that each handler has as few dependencies as possible and takes the shortest path possible.

5. Optimize DOM Manipulation for Better Performance

DOM manipulation has a direct effect on an application’s speed and performance. A common source of DOM updates is the ngFor directive, a structural directive that renders one element for each item of an iterable, such as an array.

Let’s say an application needs to add ten usernames, and each time a user is added it re-renders the entire list. This approach is not feasible for projects with 10,000 or more entries. Even with as few as 100 entries, the speed and performance of the application will suffer.

Re-rendering the entire list hurts runtime performance (the browser has to recompute the positioning and styling of nodes), wastes work on content that has not changed, and affects other aspects as well. All of this slows down the page response.

The best solution is trackBy, which puts an end to this unnecessary creation and destruction of DOM nodes by identifying each item with a unique identifier. Angular then creates and destroys DOM nodes only for the items that have actually changed.
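As a rough illustration, the sketch below shows a trackBy function and a toy comparison of how many rows would be rebuilt with and without it. `trackByUserId` follows the `(index, item)` signature that ngFor expects; the `User` shape, the sample data, and `countCreatedRows` (a simplified stand-in for list reconciliation, not Angular’s differ) are assumptions made for the example.

```typescript
// Hypothetical item shape for the list.
interface User {
  id: number;
  name: string;
}

// trackBy function: receives the index and the item, returns a stable key.
function trackByUserId(_index: number, user: User): number {
  return user.id;
}

// Simplified stand-in for list reconciliation: counts the rows whose key
// was not present before and would therefore need a new DOM node.
function countCreatedRows(
  previous: User[],
  next: User[],
  trackBy: (index: number, item: User) => unknown,
): number {
  const knownKeys = new Set(previous.map((user, i) => trackBy(i, user)));
  return next.filter((user, i) => !knownKeys.has(trackBy(i, user))).length;
}

const before: User[] = [
  { id: 1, name: "Ada" },
  { id: 2, name: "Linus" },
];

// Simulates a fresh server response: the same users arrive as new objects,
// plus one user that really is new.
const after: User[] = [
  ...before.map((user) => ({ ...user })),
  { id: 3, name: "Grace" },
];

// Without trackBy, items are identified by object reference,
// so every row in the new response looks new.
const createdByIdentity = countCreatedRows(before, after, (_i, user) => user);

// With trackBy, only the user with an unseen id needs a new row.
const createdByTrackBy = countCreatedRows(before, after, trackByUserId);

console.log({ createdByIdentity, createdByTrackBy });
```

In a template, the function is wired up as `*ngFor="let user of users; trackBy: trackByUserId"`.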

6. Implement Code-Splitting and Lazy Loading for Faster Load Time

Load time is one of the most important performance benchmarks in Angular, as it directly affects the business side of an application. It shows how your application behaves after a user clicks or performs an action while interacting with the application modules. If the application takes longer than usual to respond to user actions, it has poor load time performance.

Let’s discuss several ways to optimize load time performance in Angular applications.

Code Splitting for Improved Time To Interactive (TTI)

Web applications are multipurpose services, and JavaScript makes them extremely capable with its modern functionality. Needless to say, large JavaScript files can hurt interactivity. Code splitting breaks the JavaScript bundles into smaller pieces without compromising on features, and gives you significant control over how much JavaScript is shipped to users at initial load.

Code splitting helps a website become fully interactive sooner and so perform well on the TTI measure (you can check TTI in Lighthouse, the open-source tool available in Chrome DevTools for improving web quality). Code splitting can be performed at different levels, such as entry points, dynamic imports, and preventing duplication.

Lazy Loading for Better Efficiency of Program’s Operation

Building a large-scale application involves meticulous details that should not be ignored. These applications usually contain a large number of feature modules. However, not all of these feature modules need to be loaded at once.

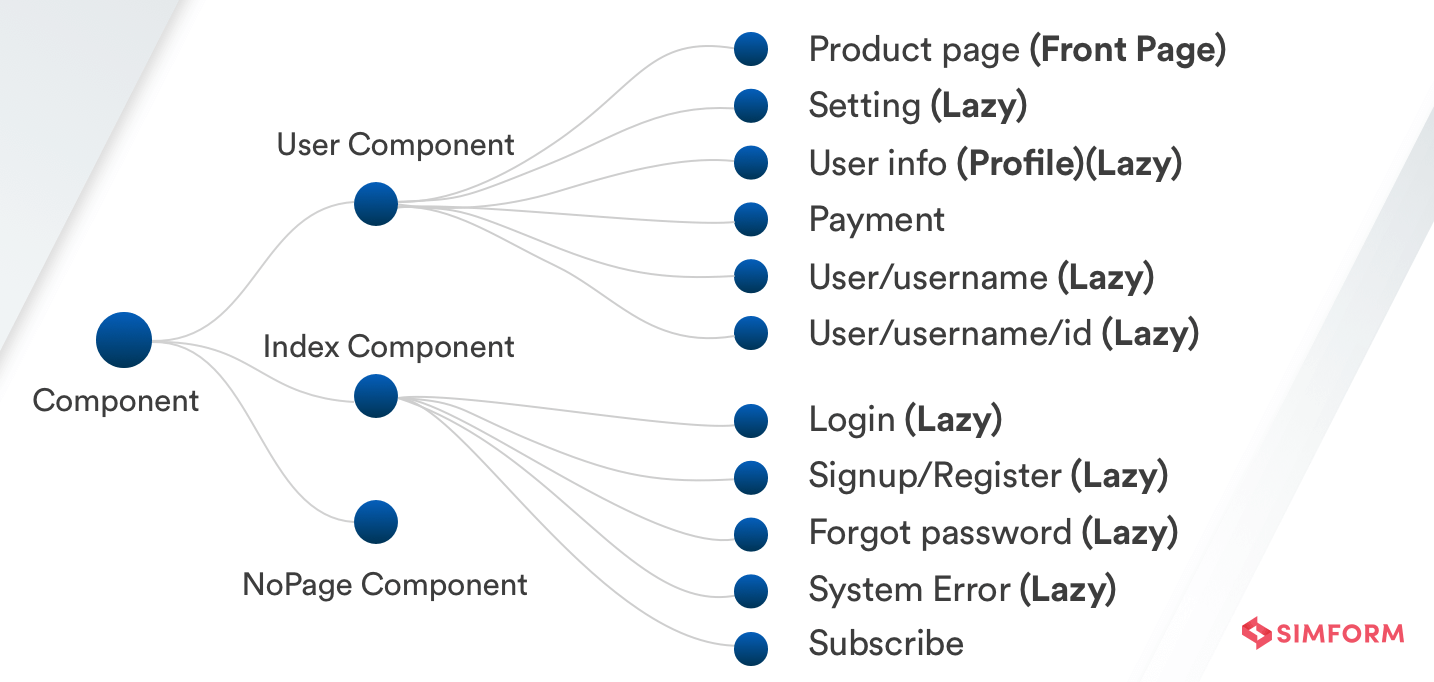

Loading only the necessary Angular modules at the initial load not only reduces the bundle size but also decreases load time. This design pattern is called lazy loading: it loads app modules only when they are needed. Ideally, for an application to be successful, the initial load time should be short. For that, it is recommended to lazy load the UI components that are not necessary at first.

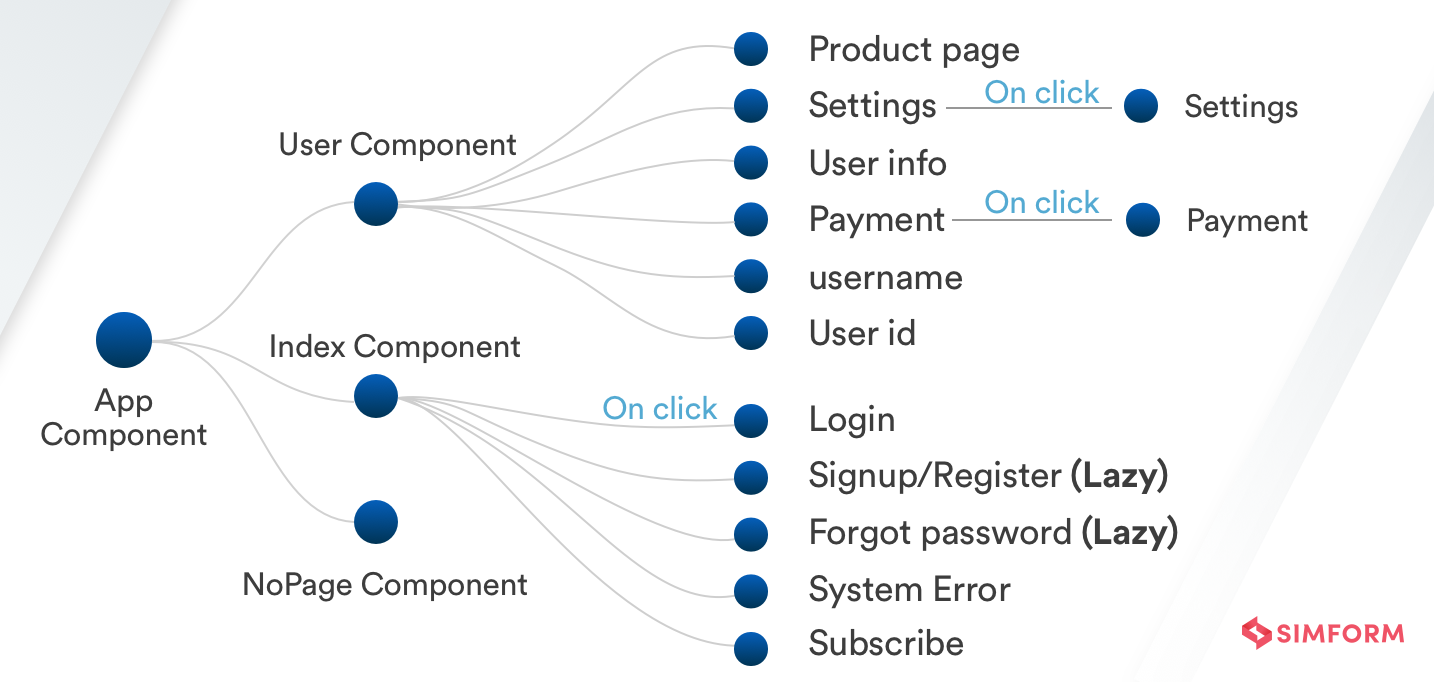

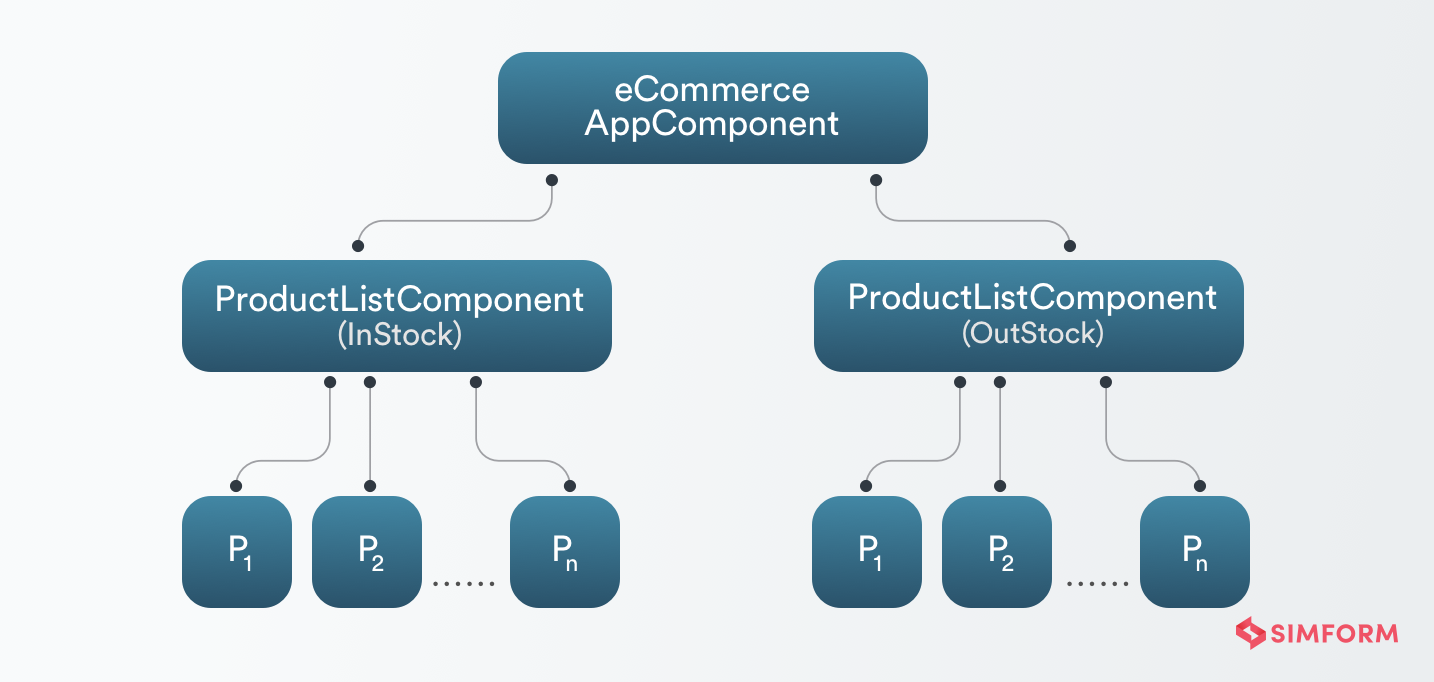

Let’s take the example of an eCommerce app. It demonstrates which components are lazy-loaded and released over time. This improves app performance considerably by sparing users long waiting times.

The modules are lazy loaded before release:

The modules are released on click:

Lazy loading brings significant performance improvements, such as:

- Decreased size of code bundles

- Classification of modules according to the functionalities

- Respective navigation of routes for downloading the code modules
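The deferral behind lazy loading can be modeled in a few lines of plain TypeScript. In an Angular app this is typically configured per route, with `loadChildren` pointing at a dynamic `import()` of the feature module; the sketch below only imitates that idea. `FeatureModule`, `loadCheckoutModule`, and `lazy` are hypothetical names, and the loader returns a resolved promise in place of a real bundle download.

```typescript
// Hypothetical shape of a feature module.
interface FeatureModule {
  name: string;
}

// Counts how many times the "bundle" is actually fetched.
let downloads = 0;

// Stand-in for a dynamic import of a feature module.
function loadCheckoutModule(): Promise<FeatureModule> {
  downloads++;
  return Promise.resolve({ name: "checkout" });
}

// Wraps a loader so that nothing is fetched until the first request,
// and the same promise is reused for every later request.
function lazy<T>(loader: () => Promise<T>): () => Promise<T> {
  let cached: Promise<T> | undefined;
  return () => {
    if (cached === undefined) {
      cached = loader();
    }
    return cached;
  };
}

const getCheckout = lazy(loadCheckoutModule);

// At startup nothing has been fetched yet.
const downloadsAtStartup = downloads;

// The first navigation to the feature triggers the fetch;
// the second one reuses the module that is already loading or loaded.
const firstVisit = getCheckout();
const secondVisit = getCheckout();

firstVisit.then((module) => console.log(module.name, downloads));
```

The point of the pattern is visible in the counter: the initial load pays nothing for the checkout feature, and the cost is paid once, at the moment the user first needs it.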

7. Save Your Build from Memory Leak

Neglecting minor aspects of web app development can lead to significant setbacks, for instance memory leaks. Memory leaks occur when your application fails to release resources that are no longer in use. When the application’s memory consumption keeps growing without any new content (text, images, etc.) being added, the application is likely to face major performance degradation.

Memory leaks usually arise when developers do not unsubscribe from observables or declare extraneous global variables, which stay in memory for as long as the window or tab is open, even when unused. Such entities should be unsubscribed from or deleted as soon as they are no longer needed to improve Angular performance.

An open stream is started when we subscribe to an observable, and it doesn’t close until we use the unsubscribe method. Similar to declaring global variables, failing to unsubscribe from observables might cause unwanted memory leaks. In short, keep in mind to correctly unsubscribe from observables when using them in your code to avoid memory leaks.
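The subscribe and unsubscribe lifecycle can be sketched without any library. This is a minimal model under stated assumptions: `Source` is a hypothetical stand-in for an observable (a real app would use RxJS), and `PriceWidget` is a made-up component class whose `ngOnDestroy` method plays the role of Angular’s lifecycle hook of the same name.

```typescript
type Listener<T> = (value: T) => void;

// Minimal stand-in for an observable: keeps a set of listeners and hands
// back a subscription object that removes the listener again.
class Source<T> {
  private listeners = new Set<Listener<T>>();

  subscribe(listener: Listener<T>): { unsubscribe(): void } {
    this.listeners.add(listener);
    return {
      unsubscribe: () => {
        this.listeners.delete(listener);
      },
    };
  }

  emit(value: T): void {
    this.listeners.forEach((listener) => listener(value));
  }

  get listenerCount(): number {
    return this.listeners.size;
  }
}

// Hypothetical component that listens to a price stream.
class PriceWidget {
  lastPrice = 0;
  private subscription: { unsubscribe(): void };

  constructor(prices: Source<number>) {
    this.subscription = prices.subscribe((price) => {
      this.lastPrice = price;
    });
  }

  // Releases the subscription when the component is torn down, so the
  // source no longer holds a reference to this component.
  ngOnDestroy(): void {
    this.subscription.unsubscribe();
  }
}

const prices = new Source<number>();
const widget = new PriceWidget(prices);

prices.emit(42);
const listenersWhileAlive = prices.listenerCount;

widget.ngOnDestroy();
prices.emit(99); // Ignored: the widget is no longer subscribed.
const listenersAfterDestroy = prices.listenerCount;

console.log(widget.lastPrice, listenersWhileAlive, listenersAfterDestroy);
```

Without the call in `ngOnDestroy`, the source would keep the listener, and through it the destroyed component, alive for as long as the source itself exists.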

8. Remove Unused Code Using Tree-Shaking

Tree-shaking is a dead-code elimination technique for optimizing JavaScript code. It removes unused modules at build time, which helps you get the smallest build size possible. It is enabled by default when you use Angular CLI.

9. Preload & Prefetch for Instant Engagement

Preload is used to fetch, as early as possible, the content a web page needs for its initial load. Prefetch is used to fetch content that will be required later, once the website has already loaded in the browser. Both attributes are used for loading static resources. It is important to greet users who are waiting for the site to load with some instant content instead of a blank page, and these attributes do a nice job of loading the essential content as quickly as possible.

10. Remove Third Party Packages for Smaller Build

11. Ensure Server-Side Rendering Using Angular Universal

Considering that 53% of users don’t wait for a page that takes more than 3 seconds to load, server-side rendering is crucial for web development. Angular Universal renders an application to HTML on the server instead of in the browser, which is where typical Angular applications are rendered.

In a typical scenario, when a user sends a request, the browser first loads a blank page. Server-side rendering avoids this by instantly displaying some useful information to the user.

On social media, we often see posts displayed as small summary cards.

Such a card contains information about the blog, a small image, and a summary. Social media crawlers do not fetch this information from pages loaded dynamically by JavaScript; instead, they fetch it from the server-side rendered application. It is the same with most search engines except Google, since Google indexes JavaScript-based pages. For applications to be indexed and ranked correctly by most search engines, server-side rendering is a must.

12. Cache Static Content for Lightweight UI Experience

Progressive web apps are ideal examples of caching static content. They owe much of their success to the static caching of JavaScript files, assets, and CSS files.

With so many eCommerce applications on the market, users can’t install every one of them. A progressive web app offers an easier approach: users can keep a shortcut on the home screen and still get a native-like experience across different eCommerce platforms.

13. Optimize User Interface with Fast-Performing Template

The template expressions provided by Angular are an effective tool for modifying data coming from the backend, but they must be used carefully to prevent sluggish user interfaces. Functions used in the template must finish quickly so that other code can run. A function that takes too long to finish may leave users with a slow and laggy UI. You can avoid this by performing complex computations in the component file before the template is rendered. As a result, you can ensure a quick, seamless, and smooth user experience while still leveraging template expressions in Angular.

14. Use AoT Compilation for Faster Rendering Speeds

JIT (Just-in-Time) and AoT (Ahead-of-Time) are the two compilation types that Angular offers. JIT compilation used to be the default, but AoT compilation became the default with the release of version 9, which considerably boosts performance.

With JIT compilation, the compiler is shipped inside the bundle, increasing the bundle’s size. It also lengthens the component’s rendering time. AoT compilation, on the other hand, compiles the program at build time, producing only the compiled templates and leaving the compiler out of the bundle. This leads to substantially quicker rendering and a smaller bundle.

15. Prevent Speed Degradation with Resolve Guard

Errors must be handled effectively to prevent speed degradation when loading components. Suppose we need to navigate from an already loaded component to a new component that displays a list of users. When an error occurs while fetching the list of users from the server, the following happens: the existing component is destroyed, the new component is loaded, an HTTP call is issued, the new component is destroyed, the previous component is loaded once more, and the error is displayed.

Each of these round trips can be time-consuming, which slows down performance. To deal with this issue, you can employ a Resolve Guard. The HTTP call is made within the Resolve Guard, and the next component loads only if the call produces a successful result. If there is a problem, the already loaded component shows the error instead of loading the next component. In short, a Resolve Guard can improve the performance of your Angular application by significantly reducing DOM rendering and destruction time.

Conclusion

The earlier versions of Angular didn’t rank high on performance metrics. However, with the release of Angular 9, many performance problems are handled by the framework itself.

Angular expert “Michael” tweeted about Angular 9 back in 2018:

Angular #ivy is using classical programming concepts and patterns to move the framework forward. This is why I love #angular, engineering we can predict instead of hacks and conventions.

Speaking from the experience of our Angular team at Simform, build size has decreased by as much as 35% in Angular 9, which is quite significant. What’s more, Google’s Lighthouse shows a performance score of 99% for Angular 9 against 95% for Angular 8, on average.

The future holds a sharp balance between clean code and performance that makes both you and your end users happy. If you need help implementing these techniques, want clarifications, or find that something is wrong, feel free to connect with me on Twitter or drop me a line at hiren@simformlabs.com.